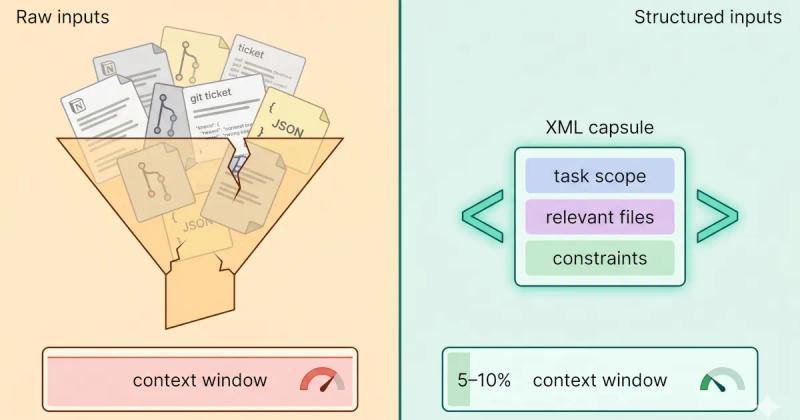

Context Engineering vs Prompt Engineering: What the Shift Means for Developers (2026)

Stop rewriting prompts. Learn when to use context engineering vs prompt engineering to optimize LLM context quality without complex RAG pipelines.

Context Rot Is Real: What Chroma's 18-Model Study Found

Chroma tested 18 frontier LLMs and found every one degrades as input length grows. Here is what their context rot study proves developers must change.

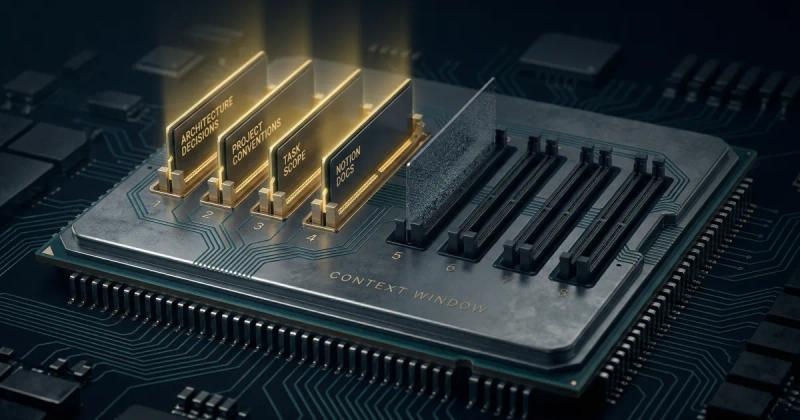

Why Your AI Prompts Keep Hitting LLM Context Window Limits and How to Right-Size Them

Hitting token limits mid-task is frustrating, costly, and avoidable. Here is why it happens across ChatGPT, Claude, and Gemini, plus a practical framework for right-sizing your context every time.

Claude Code Context Window Rot: Why Sessions Get Dumber (And How to Fix It)

Claude Code sessions degrade silently, not from bugs but from context rot. Here's the science, the symptoms to spot early, and the fix that works upstream.
