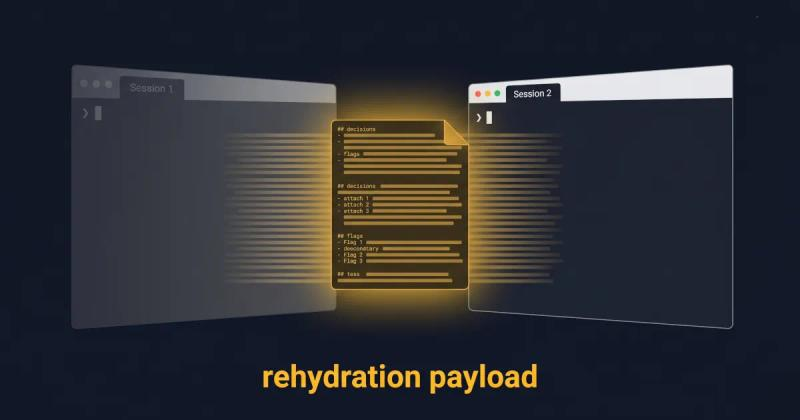

Why Long-Running AI Agents Forget: A Session Context Strategy

Long-running AI agents lose context over time. The fix isn't bigger context windows. It's a curated handoff between coding sessions. Here's the protocol.


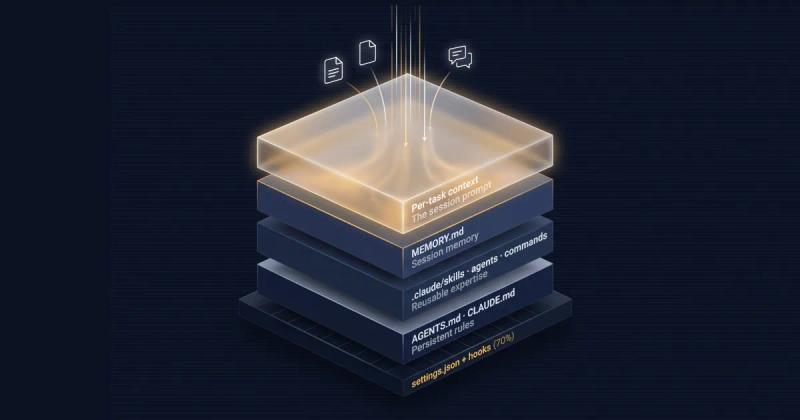

CLAUDE.md vs AGENTS.md: The 4-Layer AI Coding Memory Stack

Resolve the CLAUDE.md vs AGENTS.md overlap by separating your repo rules, session memory, skills, and per-task context into a clean 4-layer stack.


Anthropic Context Engineering Guide 2026: A Field Manual

Read Anthropic's 2026 context engineering guide but stuck on implementation? Translate its four pillars into a concrete checklist for your next LLM session.


Context Rot Is Real: What Chroma's 18-Model Study Found

Chroma tested 18 frontier LLMs and found that every one degrades as input length grows. Here is what the context rot study shows developers must change.
