Ben •

You are mid-task. You have assembled your requirements doc, your reference code, and your system instructions, and you paste everything into your LLM of choice. Then it happens: a truncated response, a confused answer that ignores half your input, or an outright error telling you the input is too long.

If you have hit a token limit wall, you are not alone. It is one of the most common frustrations among developers, product managers, and founders who use LLMs for real work. But it is also one of the most misunderstood. Most people treat it as an annoying hard stop. In reality, it is a signal that your context strategy needs a rethink.

This post explains why token limits exist, what actually happens when you exceed them, why bigger context windows are not always the answer, and how to right-size your prompts so you get better results at lower cost.

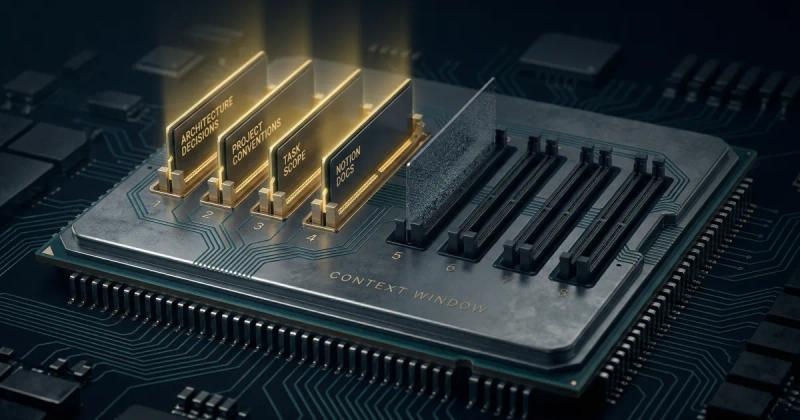

Every LLM has a context window: the maximum amount of text it can hold in its working memory at once. This includes everything: your system prompt, the conversation history, the files you have attached, and the response the model is generating. It all competes for the same finite space, measured in tokens.

A token is roughly 3 to 4 characters of text, or about 0.75 of an average English word. A 1,000-word document is approximately 1,300 to 1,400 tokens. A 300-line Python file can easily be 2,000 to 4,000 tokens depending on complexity.
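The rules of thumb above can be turned into a quick estimator. This is a rough sketch, not a tokenizer: the function names and both constants are assumptions taken from the approximations above, and real counts depend on the tokenizer of the model you are targeting.

```python
import math

CHARS_PER_TOKEN = 4      # assumption: upper end of the 3-to-4 character rule of thumb
WORDS_PER_TOKEN = 0.75   # assumption: one token is about 0.75 of an English word


def estimate_tokens_from_chars(text: str) -> int:
    """Rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_from_words(word_count: int) -> int:
    """Rough token estimate based on word count."""
    return math.ceil(word_count / WORDS_PER_TOKEN)
```

For a 1,000-word document, `estimate_tokens_from_words(1000)` gives 1,334 tokens, which sits inside the 1,300 to 1,400 range above.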

The limits vary significantly by model and plan. As of early 2026, the figures this post works from are 128,000 tokens for GPT-4o, 200,000 for Claude, and 1,000,000 for Gemini (check the official docs for the most current figures, as these change frequently).

These numbers look generous. A million tokens is roughly 750,000 words. So why do teams still run into problems?

The most obvious failure mode is the hard stop. You paste a large codebase, a lengthy Notion document, or a long conversation history and the model either refuses the input or silently drops the parts that do not fit. The response you get back ignores half of what you gave it.

What makes this worse is that models do not always tell you when they have dropped context. They generate a confident-sounding answer based on an incomplete picture of what you actually provided. You get results that look reasonable but are missing crucial constraints or requirements buried in the parts that got cut.

Here is the insight that most guides on token limits miss entirely: a bigger context window does not guarantee better output. Research from Stanford and Berkeley identified a phenomenon called "Lost in the Middle": LLMs are significantly better at recalling information placed at the beginning and end of a prompt than information buried in the middle.

In practical terms, if you dump 80,000 tokens of context into a model, the key requirement you buried at token 40,000 may be effectively ignored, even though it technically fits within the window. The model processes it, but does not weight it appropriately.

This means the goal is not to fill the context window. It is to fill it with the right things, in the right order, at the right size.

For anyone using LLMs via the API, token count is a direct billing line. OpenAI charges per input token and per output token. Anthropic applies a 2x premium on API inputs that exceed 200,000 tokens. The more bloated your context, the more every single request costs.

Teams that build AI workflows without token discipline find their API costs growing faster than their usage. A prompt padded with redundant comments, duplicate context, or irrelevant file contents can cost two to three times as much as a well-trimmed equivalent that produces the same or better output.
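To see how padding shows up on the bill, here is a minimal cost sketch. The function name, the token counts, and both prices are hypothetical placeholders, not any provider's real rates; substitute the figures from your provider's pricing page.

```python
def request_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost of a single request, given per-million-token prices."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


# Hypothetical prices in USD per million tokens (assumptions for illustration only).
INPUT_PRICE = 2.00
OUTPUT_PRICE = 8.00

bloated = request_cost_usd(60_000, 1_000, INPUT_PRICE, OUTPUT_PRICE)
trimmed = request_cost_usd(20_000, 1_000, INPUT_PRICE, OUTPUT_PRICE)
print(f"bloated: ${bloated:.3f}, trimmed: ${trimmed:.3f}")
```

With these placeholder numbers, the padded prompt costs about 2.7 times as much per request as the trimmed one for the same output length.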

You might expect that as context windows grow from 128k to 1 million tokens, the problem simply disappears. It does not, for two reasons.

First, the amount of context people want to include is growing just as fast as the windows. Developers are feeding entire codebases. PMs are including full product databases. Founders are assembling company knowledge bases. The appetite for context expands to fill whatever space is available.

Second, assembling context manually does not scale. Without a systematic approach, every session starts from scratch. The same files get re-pasted. The same instructions get retyped. There is no token visibility before you send, which means you discover the problem only after it has already affected your output or your API bill.

The following approach works regardless of which LLM you use. It applies whether you are a developer building an AI workflow, a PM assembling requirements for a task, or a founder querying your company knowledge base.

Before assembling a prompt, establish your token target. A useful rule of thumb is to aim for 60 to 70% of the model's context window for your input, leaving room for a substantive output. If you are using GPT-4o with its 128k window, target roughly 80,000 tokens of input and reserve the rest for the response.

Knowing your budget before you start assembling prevents the common pattern of building context blind, hitting the limit, and then scrambling to cut things that may matter.
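The budget arithmetic is simple enough to script. A minimal sketch, assuming a hypothetical helper name and a default share of 65% (the midpoint of the 60 to 70% rule of thumb):

```python
def input_token_budget(context_window: int, input_share: float = 0.65) -> int:
    """Tokens to allow for input, leaving the rest of the window for output."""
    if not 0 < input_share < 1:
        raise ValueError("input_share must be between 0 and 1")
    return round(context_window * input_share)
```

For a 128,000-token window, `input_token_budget(128_000, 0.625)` returns 80,000, and the default share returns 83,200. Both fall inside the 60 to 70% band.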

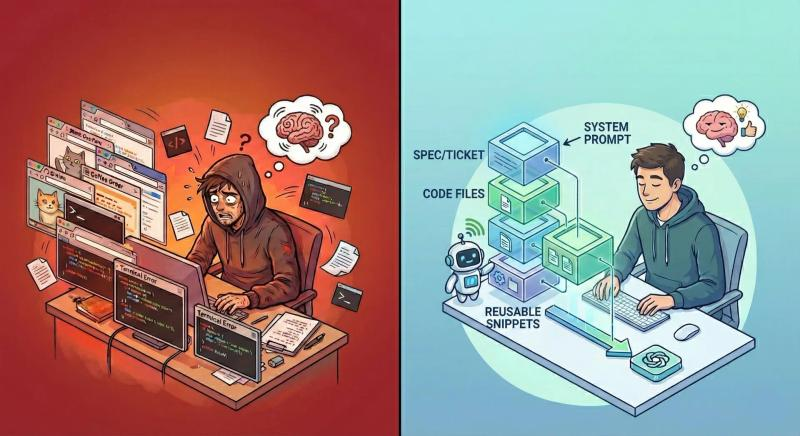

The most common mistake in context assembly is including everything that might be relevant rather than only what is definitely relevant. For each item you are considering, ask: will the model produce a meaningfully different output if this is present versus absent? If the answer is unclear, leave it out.

For code tasks, this means selecting specific files rather than entire directories. For document tasks, this means extracting the relevant sections rather than pasting the whole document. For recurring workflows, this means defining a repeatable set of sources rather than rebuilding from scratch each time.

Given the "Lost in the Middle" problem, the order of your context matters as much as its content. A reliable structure that works well across models is:

Placing your task at the end is particularly important. Models generate responses by predicting forward from the context, so a clear, specific task statement at the end of the prompt produces more focused output than one buried in the middle of a large context block.
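One way to enforce the ordering in code is a small assembly helper. Only "task last" comes from the guidance above; putting instructions first and reference material in the middle is an assumption for this sketch, as are the function and parameter names.

```python
def assemble_prompt(instructions: str, references: list[str], task: str) -> str:
    """Join prompt parts so the task statement is always the final block."""
    parts = [instructions.strip()]
    parts.extend(ref.strip() for ref in references)
    parts.append(task.strip())
    # Skip empty parts so blank inputs do not leave stray separators.
    return "\n\n".join(part for part in parts if part)
```

Because the task is appended last regardless of how many references you pass in, it cannot drift into the middle of the prompt as the context grows.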

Code files are often 30 to 50% larger in token terms than they need to be for a given task. Before including a file in your context, consider removing verbose comments, dead code, and unused imports.

For documents, summarize sections that provide background rather than pasting them in full. The model does not need to read the entire history of a project to help you with today's task.
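As a starting point for trimming code files, the sketch below drops blank lines and full-line comments from Python source. It is deliberately naive and the function name is hypothetical: it keeps inline comments, does not detect dead code or unused imports, and would also drop a line inside a multi-line string that happens to start with `#`.

```python
def trim_python_source(source: str) -> str:
    """Remove blank lines and full-line comments from Python source text."""
    kept = []
    for line in source.splitlines():
        stripped = line.strip()
        # Skip empty lines and lines that contain only a comment.
        if not stripped or stripped.startswith("#"):
            continue
        kept.append(line.rstrip())
    return "\n".join(kept)
```

Review the trimmed output before sending it. Some comments carry constraints the model needs, and those are worth their tokens.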

Most developers discover token issues reactively, after a request fails or returns a degraded response. The far more efficient approach is to know your token count before you send, so you can make deliberate decisions about what to include or trim.

This is where tooling makes a significant difference. Manually estimating token counts is imprecise and error-prone. A model-aware token counter that reflects the specific limits of the model you are targeting, such as the real-time counter in HiveTrail Mesh, shows you exactly where you stand as you build your context stack, not after you have already hit the wall.
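If you want a quick pre-send check without dedicated tooling, a per-source tally is enough to show where the tokens are going. This sketch uses the rough 4-characters-per-token estimate rather than a real tokenizer, so treat its numbers as approximate; the function names and report keys are assumptions, and it is not how any particular tool works.

```python
import math


def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters per token (an approximation)."""
    return math.ceil(len(text) / 4)


def context_report(sources: dict[str, str], token_limit: int) -> dict:
    """Tally estimated tokens per source and compare the total to a limit."""
    per_source = {name: estimate_tokens(text) for name, text in sources.items()}
    total = sum(per_source.values())
    return {
        "per_source": per_source,
        "total": total,
        "remaining": token_limit - total,
        "over_limit": total > token_limit,
    }
```

Running this before each send turns "the request failed" into "this one file is most of the budget", which is a much easier problem to act on.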

There is one more token problem that gets less attention than it deserves: stale context. When you paste a file into an LLM chat, you are pasting a snapshot of that file at that moment. If the file changes between sessions, the version in your prompt is outdated. If you do not notice, you are asking the model to reason about code or documentation that no longer reflects reality.

The same issue applies to conversational context. Long chat sessions accumulate token-heavy history, much of which is no longer relevant to the current task. Every follow-up message in the same session sends the full conversation history to the model, making each turn progressively more expensive and more likely to surface irrelevant earlier context.
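The growth is easy to underestimate, so here is the arithmetic as a short sketch. It assumes a simplified model in which each turn adds a fixed number of tokens (message plus reply) and the whole history is resent on every turn; the function name is hypothetical.

```python
def input_tokens_per_turn(turn_sizes: list[int]) -> list[int]:
    """Input tokens sent on each turn when the full history is resent."""
    sent = []
    history = 0
    for size in turn_sizes:
        history += size       # the new turn joins the history
        sent.append(history)  # and the whole history is sent again
    return sent
```

Five turns of 1,000 tokens each send 1,000, 2,000, 3,000, 4,000, and 5,000 input tokens, or 15,000 in total, three times the 5,000 tokens of actual content.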

The practical solution is a just-in-time approach to context assembly: read files at the moment of sending rather than relying on previously cached content, and start fresh sessions for distinct tasks rather than carrying forward stale conversational baggage.
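A minimal sketch of just-in-time assembly: store the file paths, not the file contents, and read them at the moment you build the prompt. The function name and the `--- name ---` separator format are assumptions for illustration.

```python
from pathlib import Path


def build_context(paths: list[Path]) -> str:
    """Read each file at call time so the context reflects its current contents."""
    sections = []
    for path in paths:
        text = path.read_text(encoding="utf-8")
        sections.append(f"--- {path.name} ---\n{text}")
    return "\n\n".join(sections)
```

Calling `build_context` again after a file changes picks up the new version, because nothing is cached between calls.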

Use this before sending any high-stakes or API-billed prompt:

The instinct when hitting a token limit is to look for a model with a bigger window. Sometimes that is the right answer. But more often, the better answer is to be more deliberate about what you are actually putting in the window.

A lean, well-ordered 20,000-token prompt targeting the right files from your codebase will consistently outperform a bloated 100,000-token prompt that includes everything you thought might matter. It will also cost less, run faster, and produce responses that are more focused and actionable.

The teams getting the most out of LLMs in 2026 are not the ones with the biggest context windows. They are the ones who have built a repeatable, disciplined process for assembling the right context, at the right size, every time.

See how HiveTrail Mesh gives you real-time, model-aware token counts before you send: explore HiveTrail Mesh

OpenAI Model Docs (GPT-4o, GPT-4.1 context limits)

Claude Context Window Docs (Anthropic)

Claude Context Window (Support)

Gemini API Models and Limits (Google)

Lost in the Middle: How Language Models Use Long Contexts (Stanford/Berkeley)

Stop Chasing Million-Token Context Windows (Medium)

Different models handle it differently. Some return a hard error telling you the input is too long. Others silently truncate your content, processing only what fits and ignoring the rest, without telling you what was dropped. This second scenario is the more dangerous one, because you get a confident-sounding response based on an incomplete picture of what you actually provided. The safest approach is to know your token count before you send, not after.

Not automatically. Research from Stanford and Berkeley identified what they call the "Lost in the Middle" problem: LLMs recall information at the beginning and end of a prompt much more reliably than content buried in the middle. A bloated 100,000-token prompt with loosely relevant context will often produce worse output than a lean, well-ordered 20,000-token prompt with only the essentials. Right-sizing your LLM context window is a quality decision as much as a technical one.

Start by auditing what you are actually including. For code files, remove verbose comments, dead code, and unused imports. These can account for 30 to 50% of a file's token footprint without adding value for the model. For documents, replace full background sections with targeted summaries. For recurring workflows, define a fixed set of high-priority sources rather than rebuilding from scratch each session. The goal is not to minimize context; it is to eliminate the tokens that do not earn their place.

A rough but reliable rule of thumb: one token equals approximately 3 to 4 characters of English text, or about 0.75 of a word. A 300-line Python file typically runs between 2,000 and 4,000 tokens depending on complexity and comment density. A 1,000-word Notion document is roughly 1,300 to 1,400 tokens. A full meeting transcript or detailed PRD can easily reach 5,000 to 10,000 tokens. Once you start combining multiple sources, the total adds up faster than most people expect, which is why model-aware token counting before you send matters.

Yes. HiveTrail Mesh includes a model-aware token counter that shows your real-time context window usage as you build your prompt stack, mapped against the specific limit of the model you are targeting, whether that is GPT-4o at 128,000 tokens, Claude at 200,000, or Gemini at 1,000,000. You see exactly where you stand before you send, not after you hit the wall. You can explore how it works at hivetrail.com/mesh.
