Ben •

You've been here before.

You're asking Claude or ChatGPT to help with something you've explained before - your project's architecture, your team's conventions, the fact that you use tRPC and not REST for internal APIs. The model gives you a technically correct answer that's completely wrong for your codebase.

You tweak the prompt. You add more detail. You try again. The result is marginally better. You spend ten minutes rewriting the request when the real problem was never the request at all.

This is the moment where prompt engineering reaches its limit - and where context engineering begins. When evaluating context engineering vs prompt engineering, the real issue isn't how you phrased the request. It's the information environment the model was working with when it answered.

Almost every article written on this topic frames it as a massive enterprise infrastructure problem requiring specialized platform teams. That framing is accurate for certain use cases. But it leaves out the many developers and product managers who aren't building multi-agent platforms. They're just trying to get reliable, high-quality output from AI tools they use every single day.

This article is written for them.

Prompt engineering is the practice of crafting the input you send to a language model to get a better response. It encompasses techniques like role assignment ("you are a senior TypeScript engineer"), chain-of-thought reasoning ("think step by step"), few-shot examples, output format constraints, and negative prompting ("do not use Redux").

It's real, it works, and it's worth learning. For bounded, well-defined tasks where the model already has everything it needs to do the job, prompt engineering can make a significant difference.

In these cases, the model has the domain knowledge, the task is self-contained, and the challenge is communication - getting the model to understand exactly what you want. That's where prompt engineering shines.

The problem is that this represents a surprisingly small fraction of what developers and PMs actually use AI for day-to-day.

Here's what breaks prompt engineering: when the model needs to know things it doesn't have access to.

Not things it doesn't know globally - models like Claude and GPT-4o are extraordinarily knowledgeable. But things it doesn't know about your specific situation: your codebase, your architectural decisions, your Notion docs, your team conventions, the business context behind this sprint.

Sound familiar? These are the exact failure modes developers hit constantly:

The Architecture Amnesia problem. You ask Claude to help refactor a module. It produces clean code using patterns your team deliberately moved away from six months ago. Nothing in your prompt told it you'd made that decision - because you shouldn't have to explain your entire tech history in every message.

The Convention Gap. You ask for a new component. It uses Styled Components. Your project uses Tailwind. You said nothing about this because you assumed it was obvious. It isn't. The model doesn't know what it can't see.

The Session Reset. In session 1, you built up a shared understanding with the model - you explained your domain, your data model, and your naming conventions. In session 2, all of that is gone. The model is a blank slate. You start over.

The Prompt Spiral. You spend more time crafting and refining the prompt than you would have spent just doing the task yourself. You've accidentally made AI slower than not using AI.

These aren't failures of instruction quality. They're failures of information availability. As Thoughtworks engineer Bharani Subramaniam put it: "Context engineering is curating what the model sees so that you get a better result."

No amount of prompt refinement fixes a model that simply never saw the relevant information.

The shift from prompt engineering to context engineering is a shift in what you're optimizing.

Prompt engineering optimizes the instruction. Context engineering optimizes the information environment - what the model knows, remembers, has access to, and what it doesn't need to waste tokens on.

Andrej Karpathy, a co-founder of OpenAI, put it precisely when he posted on X in June 2025:

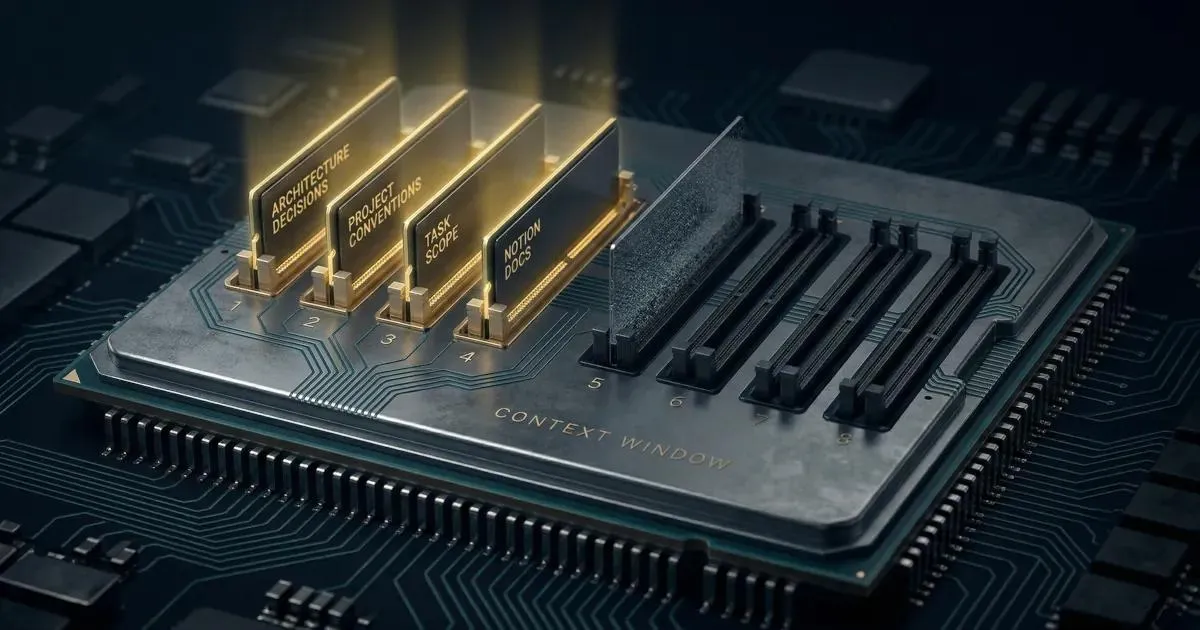

"Context engineering is the delicate art and science of filling the context window with just the right information for the next step."

His framing matters: it's both an art and a science. Science, because there are principles - right information, right structure, right size. Art, because judgment is always involved in deciding what belongs and what doesn't.

If you need a full definition before continuing, our context engineering guide for developers covers the foundations.

For an individual developer or PM, context engineering boils down to four practical levers:

The first lever is what the model knows: your project conventions, architectural decisions, domain knowledge, and business rules - everything a well-onboarded teammate would know on day three and that you'd never want to explain again.

A practical example: developer Thomas Landgraf documented his approach to creating deep technical knowledge documents for Claude Code. Working on a complex IoT platform (Eclipse Ditto), he created a structured ditto-advanced-knowledge.md covering specialized policies, API patterns, and edge cases that the model's general training didn't cover. His reported outcome: "Features that previously took days of trial-and-error now ship in hours. The AI suggests optimizations I wouldn't have thought of." The model didn't get smarter - it got better information.

Stateless models can't retain anything across conversations. Context engineering gives them a memory layer - not through technical magic, but through deliberately maintained files that get loaded at the start of each session. This is where LLM context quality starts to compound: consistent context in means consistent quality out.

The most direct implementation for Claude Code users is a CLAUDE.md file: a structured document at the root of your project that the model reads automatically. Claude Directory's guide captures the progression developers experience when they invest in this properly:

"Week 1: You write a basic CLAUDE.md and start structuring your prompts better. Claude's output improves noticeably. Month 1: Your CLAUDE.md is refined from real sessions. Claude feels like it knows your project. Month 3: Claude produces code that passes your review on the first try most of the time."

The same principle applies beyond Claude Code - any tool that accepts a system prompt or persistent instructions benefits from this approach.
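The memory-layer idea can be sketched for tools that take a list of role/content messages. This is a minimal illustration, not any vendor's API: the function name `build_messages` and the message shape are assumptions, and the context file can be any maintained markdown file, not only CLAUDE.md.

```python
from pathlib import Path


def build_messages(context_path: Path, user_request: str) -> list[dict[str, str]]:
    """Start every session with the maintained context file, if one exists."""
    messages: list[dict[str, str]] = []
    if context_path.exists():
        # The file stands in for memory: it carries what earlier sessions established.
        context = context_path.read_text(encoding="utf-8").strip()
        if context:
            messages.append({"role": "system", "content": context})
    messages.append({"role": "user", "content": user_request})
    return messages
```

Calling `build_messages(Path("CLAUDE.md"), "Add a pricing section")` at the start of each session gives the model the same background every time, which is the point of the memory layer: the file changes when your project changes, not when your phrasing does.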

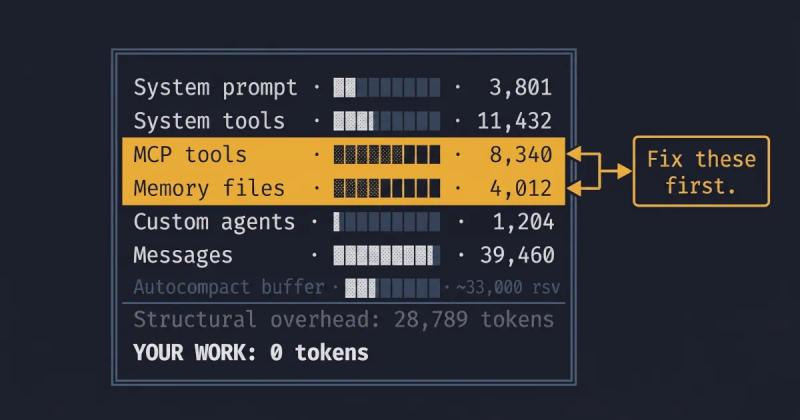

More context is not better context. This is one of the most common misunderstandings developers have when they first encounter this idea.

Research has consistently shown that model performance degrades as context gets noisier. A Chroma Research study published in July 2025, testing 18 LLMs including Claude 4, GPT-4.1, and Gemini 2.5, found that "models do not use their context uniformly; performance grows increasingly unreliable as input length grows" - even on simple retrieval tasks. This failure mode has been called "context rot": the degradation that happens when the context window is filled with irrelevant or poorly structured material.

The practical implication: a well-structured 800-token context block will outperform a dumped 8,000-token blob of documentation. Structured context for LLMs isn't just tidier - it's measurably more effective. Token optimization for LLMs means being deliberate about every element in the context window: structure matters, format matters, and what you leave out matters as much as what you include.

As LiquidMetal AI put it in a concrete example: a developer working on a financial dashboard with compliance requirements didn't need to include their entire 15,000-token regulatory document. They extracted the single relevant constraint - "Dashboard must maintain full data accessibility for SEC compliance - no lazy loading permitted" - and injected that. The model understood the business context, the technical constraint, and produced a compliant implementation. Right information, minimal tokens.
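One way to make "being deliberate about every element" concrete is to give context a budget. The sketch below is illustrative only: the four-characters-per-token estimate is a rough assumption (real tokenizers differ by model), and `estimate_tokens`, `select_blocks`, and the priority scheme are hypothetical names, not part of any tool mentioned here.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate, assuming about 4 characters per token."""
    return max(1, len(text) // 4)


def select_blocks(
    blocks: list[tuple[int, str, str]], budget: int
) -> list[tuple[str, str]]:
    """Pick (title, body) blocks in priority order until the budget is spent.

    Each input block is (priority, title, body); lower priority numbers are
    considered first. Blocks that do not fit are skipped, not truncated.
    """
    chosen: list[tuple[str, str]] = []
    used = 0
    for _priority, title, body in sorted(blocks, key=lambda block: block[0]):
        cost = estimate_tokens(title) + estimate_tokens(body)
        if used + cost > budget:
            continue  # too large for what is left; a smaller block may still fit
        chosen.append((title, body))
        used += cost
    return chosen
```

With an 800-token budget, a one-line compliance constraint fits easily while a full regulatory document is skipped, which mirrors the example above: the extracted constraint goes in, the source document stays out.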

Context engineering is as much about exclusion as inclusion. This is especially relevant for developers working with sensitive codebases - pasting entire files into a chat interface can expose API keys, internal credentials, customer data, or proprietary business logic that should never leave your local environment.

Filtering out your .env variables or proprietary business logic before pasting a snippet protects your data and instantly improves LLM context quality. A proper context engineering practice always includes a step for what not to include: credentials, PII, internal configurations, and irrelevant noise. Protecting your data and improving output quality are the same action.
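The exclusion step can be partly mechanical. Here is a minimal sketch that masks env-style assignments and email addresses before a snippet is pasted anywhere. The two patterns and the `redact` function are hypothetical and deliberately simple; they will miss many kinds of secrets and may mask harmless uppercase constants, so treat this as a starting point, not a scanner.

```python
import re

# Assumed patterns for illustration; extend them for the secrets your project uses.
ENV_ASSIGNMENT = re.compile(
    r"^([ \t]*(?:export[ \t]+)?[A-Z][A-Z0-9_]*[ \t]*=[ \t]*)\S.*$",
    re.MULTILINE,
)
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(snippet: str) -> str:
    """Mask values of UPPER_CASE assignments and email addresses."""
    # Keep the variable name so the model still sees that the setting exists.
    snippet = ENV_ASSIGNMENT.sub(r"\1[REDACTED]", snippet)
    return EMAIL.sub("[EMAIL]", snippet)
```

Keeping the variable name while dropping the value preserves the useful signal (the project reads an `API_KEY`) and removes the part that should never leave your machine.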

The conceptual distinction is useful, but what you actually need day-to-day is a signal for which approach to reach for. Here's a simple framework:

| Situation | What to use |
|---|---|
| Single task, self-contained, model already has the knowledge it needs | Prompt engineering |
| Recurring task where you find yourself re-explaining the same background | Context engineering |
| Output quality degrades over a long conversation | Context engineering (context rot) |
| You're spending more time on the prompt than the task | Context engineering |
| You need to synthesize multiple sources (code + docs + tickets) | Context engineering |
| You want consistent output across multiple sessions or team members | Context engineering |

The key diagnostic question is: am I fixing the instruction, or am I compensating for missing information?

If you've refined a prompt three times and it still feels wrong, the problem is almost certainly the second one. The model is working with incomplete information, and no rewording of the question will fix that.

Here's where most writing on this topic loses the individual developer: it assumes you're building a retrieval pipeline. That's a legitimate context engineering approach for production AI systems at scale. But it's not the starting point for a developer who wants better results from Claude Code or ChatGPT tomorrow morning.

The good news: you can practice context engineering with zero infrastructure. The tools you need are already in your editor.

CLAUDE.md / system prompt files. For Claude Code users, this is the single highest-leverage starting point. A well-designed CLAUDE.md tells the model your stack, your conventions, your architectural decisions, what commands to run to test things, and what patterns to avoid. Cole Medin's open-source context engineering template - which has become a popular reference point in the community - demonstrates how a minimal CLAUDE.md paired with a PRPs/ folder for feature-specific context can fundamentally change the quality of what you get back.

Reusable context blocks. Identify the parts of your context that are relevant across multiple tasks - your data model, your API patterns, your team's naming conventions - and store them as structured markdown files. Load them manually when relevant rather than re-explaining every session.

Task-scoped context assembly. Before running a complex task, spend two minutes assembling the relevant context: the specific files, the relevant docs, the issue reference, the acceptance criteria. This is the manual version of what a RAG pipeline does automatically. It feels slow at first and becomes very fast with practice.
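The manual assembly step described above can be scripted once it becomes routine. This is a sketch under stated assumptions: `assemble_context` is a hypothetical helper, the section headings are one possible layout, and the source paths are whatever reusable markdown blocks or files you already keep.

```python
from pathlib import Path


def assemble_context(task: str, sources: list[Path], criteria: list[str]) -> str:
    """Build one markdown context block from a task, source files, and criteria."""
    parts = [f"## Task\n{task.strip()}"]
    for path in sources:
        # Each file becomes its own labeled section so the model can cite it.
        body = path.read_text(encoding="utf-8").strip()
        parts.append(f"## Source: {path.name}\n{body}")
    if criteria:
        bullets = "\n".join(f"- {item}" for item in criteria)
        parts.append(f"## Acceptance criteria\n{bullets}")
    return "\n\n".join(parts)
```

The output is plain text you can paste into any chat tool. Nothing here retrieves or ranks anything, which is the difference from a RAG pipeline: you choose the sources, the script only formats them.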

Structured formats for complex tasks. Plain prose context is harder for a model to navigate than structured context. A simple format like XML or structured markdown - with clear sections for architecture, conventions, task details, and constraints - consistently outperforms an equivalent amount of unstructured text.
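As an illustration of the XML-style option, the sketch below wraps named sections in tags. The function `to_tagged_context` and the tag names are assumptions for this example; section names are expected to be simple identifiers, and the escaping step can be dropped if you prefer code to stay literally readable inside the tags.

```python
from xml.sax.saxutils import escape


def to_tagged_context(sections: dict[str, str]) -> str:
    """Wrap each section in a named tag so the model can tell them apart."""
    lines = ["<context>"]
    for name, body in sections.items():
        # escape() protects &, <, and > so the wrapper stays well-formed.
        lines.append(f"  <{name}>{escape(body.strip())}</{name}>")
    lines.append("</context>")
    return "\n".join(lines)
```

For example, `to_tagged_context({"conventions": "Use Tailwind & tRPC"})` produces a `<context>` wrapper containing a single `<conventions>` element with the ampersand escaped.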

HiveTrail Mesh streamlines this exact workflow by assembling token-optimized, structured context from Notion pages, local files, and Git repositories - with built-in privacy scanning to filter out what shouldn't be included - and exporting it ready to paste. It's one of the context engineering tools for developers that removes the assembly overhead without requiring any pipeline infrastructure. Doing this manually works, and many developers do it that way. The tradeoff is time. If you're running this kind of context assembly dozens of times a week, automating it pays back quickly.

The qualitative shift developers describe when they start practicing context engineering consistently is notable. It's not that the model suddenly becomes more intelligent. It's that it stops being a stranger to your project.

Freelance fullstack developer Christopher Groß wrote about this on Dev.to, drawing on a year of daily Claude Code use:

"When I start working on a new project, the first thing I create is the CLAUDE.md. Not the first component, not the first feature - the context file... After that I save myself from rebuilding that context in every single AI session. I don't explain 'we use Tailwind, not Styled Components' or 'all texts must go through i18n' anymore. It's in the file. Claude reads it, follows it."

The practical difference he describes: asking for a new section on the services page now produces code that uses his design system, follows TypeScript strict mode, and contains no hardcoded strings - without him having to specify any of that. The context file did the work.

This mirrors a broader pattern. Developers who invest in context engineering report a compound effect: the upfront time to structure context is paid back quickly, and returns accelerate over time as the context files mature.

The developer at the beginning of this article wasn't writing bad prompts. They were treating a context problem like an instruction problem - and no amount of prompt refinement fixes that.

The shift to context engineering isn't about learning a new technology. It's about changing the mental model: from "how do I ask this better?" to "what does the model need to know right now?"

Once you make that shift, the improvement in output quality isn't incremental. It's categorical.


Context engineering does not replace prompt engineering. Prompt engineering is a real skill with a genuine use case: getting the best instruction into the context window. What's changing is that it's increasingly understood as one component of a larger practice, not the whole game. The industry didn't abandon web design when UX emerged as a distinct discipline. It recognized that both were needed. The same applies here.

Context engineering does not require RAG. RAG is one way to populate a context window with relevant information automatically at runtime. But manually curated context files, structured prompts, and task-scoped assembly are all valid approaches that require no infrastructure. RAG becomes relevant when the scale of information makes manual curation impractical.

What matters in a context window is quality and structure, not quantity. More tokens in the context window don't mean better output; they can actively hurt performance through context rot. Context engineering is about selecting the right information and presenting it in the right structure. The goal is signal density, not volume.

Non-developers can practice context engineering too. At the individual level it is fundamentally about curation and structure, not code. A PM assembling a structured context block from Notion spec pages, issue references, and business constraints before asking an AI to draft a requirements document is doing context engineering. No technical background required.

When the same request produces different output, it is usually because the context is different. The session state, what you included, how you structured it, and how much of the conversation history is still in the window - all of these affect output. Inconsistency is usually a context problem, not a model problem.
