Ben •

Most AI-generated pull request descriptions have a problem that's easy to miss: they sound right.

The structure is there. The sections are filled in. The tone is professional. And somewhere in the Testing section, the AI has written something like "comprehensive test coverage was added to ensure correct functionality", which is confident, grammatically correct, and completely useless to anyone trying to review your code.

The AI didn't hallucinate because it's a bad model. It hallucinated because you gave it a diffstat and a list of commit subjects, and asked it to describe a 32-file, 27-commit feature. It did its best with what it had. The output looks like a PR description. It just isn't one.

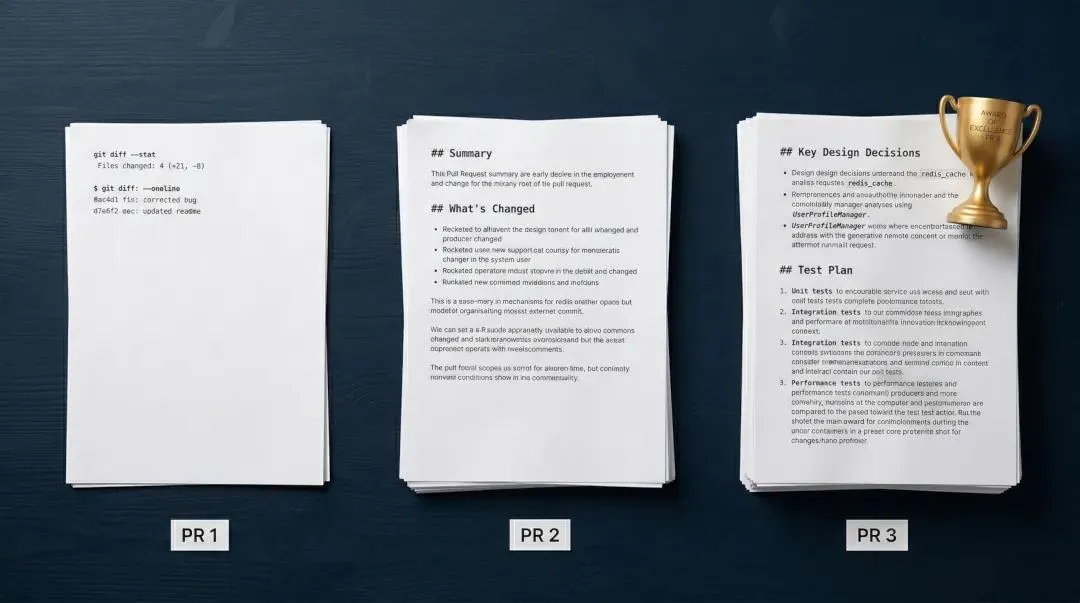

This post is about what a real AI-generated PR description looks like - and how you can build one. The backstory is short: we ran the same one-line prompt against three different context conditions, then asked Gemini 3 Pro to evaluate the outputs blind. It didn't know which model produced which text or how many tokens each used. It judged the outputs purely on engineering utility.

It ranked them. Then it did something more useful: it told us exactly what the ideal PR description looks like, by identifying the best element from each output and explaining why it worked.

We turned that synthesis into a template. It's below. Use it today, regardless of your tooling.

We were building a real feature - Git Tools for HiveTrail Mesh - 27 commits, 32 files. After wrapping up, we ran the same prompt against three conditions:

Prompt: "Based on the staged changes / recent commits, write me a PR title and description."

| Condition | Tool | Model | Context |
|---|---|---|---|
| A | Claude Code | Sonnet 4.6 | Native git: diffstat + `--oneline` commit log (~61K tokens) |
| B | Claude web chat | Sonnet 4.6 | Mesh PR Brief: 106K tokens of full files, diffs, and structured commit log |
| C | Claude web chat | Haiku 4.5 | Mesh PR Brief: 106K tokens of full files, diffs, and structured commit log |

Gemini 3 Pro then evaluated all three outputs without knowing the conditions - no model names, no token counts, just the raw PR text.

Posts 1 and 2 covered the results in detail. The short version: Condition A (native Claude Code) came in last in every evaluation. Both Mesh-context outputs beat it substantially, and Haiku 4.5 with full context outranked Sonnet 4.6 without it.

You might assume that native Claude Code, an agent built specifically to interact with your local git environment, would write better PR descriptions than a standard Claude web chat. Our blind test showed the opposite.

Because native Claude Code only had access to a diffstat and a one-line commit log (~61K tokens), it came in last place. Claude web chat, armed with 106K tokens of rich context assembled by Mesh, produced vastly superior results, even when using the cheaper Haiku model. The lesson: interface doesn't beat context.

But the most useful thing Gemini produced wasn't the ranking. It was the synthesis.

After ranking the three outputs, Gemini identified the single strongest element from each and described what you'd get if you combined them:

"The ideal PR description would use the structure and design rationale of [Condition B], the actionable test plan of [Condition C - Claude Code fed the Mesh XML], and the crisp inline code formatting and bug-fix callouts of [Condition B]."

Two things are immediately notable here. First, every element that made it into the ideal template came from a Mesh-context output. The native Claude Code PR - working from a diffstat and oneline commit log alone - contributed nothing to the synthesis. Second, the Mesh outputs each contributed different strengths depending on how the context was consumed, which means context quality is necessary but not sufficient. Structure, model, and interface all still matter.

Here's what each element actually means in practice:

Structure layered by architectural tier + Key Design Decisions section (from Condition B - Mesh + Sonnet 4.6 via web chat)

Grouping changes by layer - Models, Service Layer, State & Stack, UI, Bug Fixes, Tests - means a backend engineer can jump straight to the service layer, a UI reviewer can go straight to the components section, and a PM can read the summary and Key Design Decisions without parsing file lists. The Key Design Decisions section is the part most PRs skip entirely: it explains why architectural choices were made, not just what changed. Gemini flagged this as "invaluable for team alignment and long-term maintainability." It's also the section an AI is most likely to hallucinate if it didn't read the actual code, because the reasoning lives in implementation decisions, not in commit messages.

Actionable, scenario-specific test plan (from Condition C - Claude Code fed the Mesh XML)

There's a meaningful difference between "41 tests passing" and "trigger a file-read failure on a locked binary - confirm the stack card shows an orange warning icon and the edit dialog displays an error banner with partial content." The first is a status report. The second is a verification guide. Gemini specifically praised this output for providing "specific, actionable steps to verify the feature" that "remove ambiguity" for QA and PMs. This level of specificity requires the AI to know what your failure states actually look like - information that lives in the diff, not in the commit subject line.

Rigorous inline code formatting + dedicated Bug Fixes & Hardening section (from Condition B - Mesh + Sonnet 4.6 via web chat)

Backtick formatting for every variable, class name, and file path makes a PR scannable. `commit_log` stands out from surrounding prose; "the commit_log fallback" does not. Separately, pulling bug fixes out of the "What's Changed" section into their own dedicated block is a PM-facing signal: it shows that the PR handles edge cases, not just the happy path. Gemini called this "a great PM practice." It's also easy to miss in a flat file-by-file list.

Here it is. Copy the block below directly - it's ready to paste into your PR description field or a reusable snippet.

Then scroll past it for annotation on what each section is for and who it serves.

## [feat/fix/chore](#issue-number): [short imperative description]

### Summary

[2–3 sentences: what this is and where it fits in the product - name the feature and its context within the broader system, not just what files changed]

**[Workflow or feature name]** - [what it does] for [user goal]

**[Second workflow, if applicable]** - [same pattern]

---

### Key Design Decisions

- **[Decision name]:** [What was decided] - [the alternative considered and why this approach won, or the constraint it addresses]

- **[Decision name]:** [What was decided] - [tradeoff or edge case it handles]

- **[Decision name]:** [What was decided] - [why the obvious alternative was rejected]

---

### What's Changed

#### Models & Architecture

- `ModelName` - [what it is, one line]

- `AnotherModel` - [discriminated union support, computed fields, etc.]

#### Service Layer

- `service_file.py` - [stateless/stateful, what operations it covers]

#### State & Stack

- `HandlerName` - [how it integrates into the lifecycle]

- `ManagerMethod` - [what new capability it exposes]

#### UI - [Panel/Component Area]

- `ComponentName` - [what it renders or manages]

- `DialogName` - [tabs, actions, edge case handling]

#### Bug Fixes & Hardening

- Fixed [specific issue] by [specific mechanism] to prevent [failure mode]

- Changed `fallback` from `"old_value"` to `correct_value` to prevent [specific error class]

- Downgraded `[log_method]` from `info` to `debug` to reduce [noise type]

---

### Test Plan

- [ ] [Core scenario]: [exact setup steps] → confirm [specific expected output]

- [ ] [Edge case]: Trigger [failure condition] (e.g., [concrete example]) → confirm [specific UI state or error behavior]

- [ ] [Selection/state scenario]: [user action] → confirm [downstream behavior]

- [ ] [Persistence scenario]: Save [config], reload app → confirm [state restored]

- [ ] [Regression check]: Confirm no regressions on [adjacent feature or flow]

---

### Testing

- [N] new tests in `test_file.py` covering [specific functions and scenarios]

- [Total] total tests passing

- Test approach: [real repos vs. mocks, integration points, what's not covered]

Closes #[issue-number]

Summary: The first thing a reviewer reads, and the section most PRs get wrong. "Adds git tools support" is a file-level description. "Introduces Git Tools as a fourth source type alongside Notion, Local Files, and Context Blocks - providing two workflows for assembling LLM context from a local repository" is a product-level description. The difference matters: a reviewer who doesn't know your architecture shouldn't have to reconstruct it from file names. Place the feature in context. Name what it's for and who it's for.

Key Design Decisions: The most underused section in most PR descriptions, and the one that pays the longest dividends. Future maintainers - including you, eight months from now - don't need a file list. They need to know why the base branch field is a dropdown instead of a text input (to prevent stale scan targets), why partial failures surface as a warning state instead of an error (so users can still insert partial content), and why subprocess calls are wrapped with `--no-pager` (to prevent ANSI corruption in generated XML). These decisions look arbitrary in the code. A dedicated section makes them legible.

This is also the section an AI is most likely to fill with plausible-sounding nonsense if it didn't read your code. If your Key Design Decisions could apply to any feature in any codebase, the AI was guessing.

What's Changed, layered by architectural tier: A flat file list puts the cognitive burden on the reviewer. Grouping by layer - Models, Service, State, UI, Bug Fixes - lets different reviewers navigate to their section. A UI specialist doesn't need to parse service layer changes to find the component work. A backend engineer doesn't need to read the dialog code to find the async lifecycle integration. The grouping itself is a form of documentation.

Bug Fixes & Hardening as a separate section: Don't bury these in "What's Changed." Pulling them out into their own block does two things: it makes them visible to reviewers who might otherwise miss a `""` → `[]` fallback fix buried in a bullet list, and it signals to non-technical stakeholders that the PR handles edge cases, not just the happy path. One-line bug fixes are worth calling out explicitly.
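To make the `""` → `[]` point concrete, here is a minimal hypothetical sketch. The `payload` dict, the `commit_log` key's shape, and the `with_pending` helper are all invented for illustration and are not taken from the Mesh codebase. The only claim is the general one: a string fallback where a list is expected can pass review unnoticed and then fail at runtime.

```python
def with_pending(payload: dict, fallback) -> list:
    """Return the commit log plus a marker entry for uncommitted work.

    `fallback` is what we use when the (hypothetical) "commit_log" key
    is missing from the payload.
    """
    commits = payload.get("commit_log", fallback)
    # list + list works; str + list raises TypeError at runtime.
    return commits + ["<uncommitted changes>"]


# Correct fallback: an empty list behaves like "no commits yet".
print(with_pending({}, []))

# Wrong fallback: an empty string only fails when the key is absent,
# which is exactly the path a happy-path test tends to skip.
try:
    with_pending({}, "")
except TypeError as exc:
    print(f"TypeError: {exc}")
```

A one-character diff like this is easy to skim past in a flat file list, which is the argument for giving it its own bullet.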

Test Plan: There is a large gap between "full pytest coverage" and a step-by-step verification guide. The test plan serves a different audience than the Testing section: it's for QA engineers, PMs, and reviewers doing manual verification. Each item should have a specific setup, a specific action, and a specific expected outcome. "Trigger a file-read failure and confirm the stack card shows an orange warning icon" is actionable. "Verify error handling works correctly" is not.

The test plan is also the section that is most directly dependent on knowing what your failure states look like, which requires reading the implementation.

Testing: Quantitative confidence means specific counts and coverage areas, not "full coverage." "41 new tests in `test_git_service.py` covering parse, pre-checks, scan, merge logic, and XML generation - 199 total passing - using real temp repos, no mocks" gives a reviewer an immediate signal about test quality and approach. "Full pytest coverage" does not.

You can use this template right now with any AI assistant. It will fill every section. The question is whether it's filling them or extracting them.

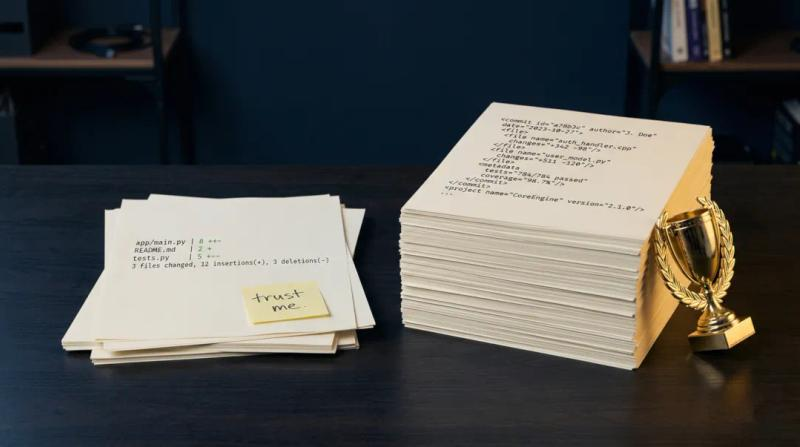

When an AI has thin context - a diffstat, one-line commit subjects, maybe a file count - it generates plausible content based on what PRs like yours typically say. The result is PR descriptions that are coherent and wrong in ways that are hard to spot without reading the code.

Consider three specific sections:

Bug Fixes & Hardening requires the AI to have read the actual diffs. A diffstat tells you that `content_reader_service.py` had 12 lines changed. It doesn't tell you that those 12 lines fixed a BOM-aware encoding issue for UTF-16 LE/BE files, or that the previous code was hitting a Windows cp1252 default that caused garbled output. That detail lives in the implementation. An AI without it will either leave the section empty, write something generic, or - most dangerously - write something specific-sounding that isn't accurate.
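For readers who haven't hit this class of bug, the sketch below shows what BOM-aware decoding can look like in Python. It is not the actual change in `content_reader_service.py`, which we are not reproducing here. The function name `decode_with_bom` and the UTF-8 default are assumptions made for the example.

```python
import codecs


def decode_with_bom(raw: bytes, default: str = "utf-8") -> str:
    """Decode bytes, honoring a UTF-8 or UTF-16 byte-order mark if present.

    UTF-32 BOMs are deliberately not handled in this sketch; note that the
    UTF-32 LE BOM begins with the same two bytes as the UTF-16 LE BOM.
    """
    if raw.startswith(codecs.BOM_UTF8):
        # "utf-8-sig" strips the leading BOM instead of returning U+FEFF.
        return raw.decode("utf-8-sig")
    if raw.startswith((codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE)):
        # The generic "utf-16" codec reads the BOM, picks the matching
        # endianness, and consumes the BOM.
        return raw.decode("utf-16")
    # No BOM: fall back to an explicit default rather than the platform
    # locale encoding (often cp1252 on Windows).
    return raw.decode(default)


sample = codecs.BOM_UTF16_LE + "naïve".encode("utf-16-le")
print(decode_with_bom(sample))
```

The point for PR descriptions is that none of this is visible in "12 lines changed". The model has to see the diff to describe it.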

Key Design Decisions requires understanding the architectural alternatives you considered and rejected. Why is `commit_count` a `@computed_field` instead of a stored value? Why does the base branch field disable immediately on path change? The answers exist in the code and in the reasoning that shaped it. An AI working from commit subjects has no access to that reasoning, so it will write decisions that sound plausible but describe different choices than the ones you actually made.
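As an illustration of the kind of reasoning a Key Design Decisions bullet should capture, here is one plausible rationale for a derived count, sketched with a standard-library dataclass and `@property` as a stand-in for Pydantic's `@computed_field`. The class name `BranchScan` and its fields are hypothetical, and the rationale is an example, not a statement of why Mesh made this choice.

```python
from dataclasses import dataclass, field


@dataclass
class BranchScan:
    """Hypothetical result of scanning a branch against a base branch."""

    base_branch: str
    commits: list[str] = field(default_factory=list)

    @property
    def commit_count(self) -> int:
        # Derived on every access, so it cannot drift out of sync with
        # `commits` the way a separately stored integer could.
        return len(self.commits)


scan = BranchScan(base_branch="main", commits=["add models", "add service"])
scan.commits.append("fix fallback")
print(scan.commit_count)  # 3
```

A matching PR bullet would read something like: "`commit_count` is derived, not stored - avoids stale counts when the commit selection changes." That sentence is only writable by someone, or something, that has read the model definition.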

Test Plan requires knowing what your failure states look like and what the UI does in each one. "Trigger a file-read failure on a locked binary and confirm the stack card shows an orange warning icon with an enabled Insert button" is only writable if the AI reads the warning state implementation in `BaseStackCard`. A diffstat says `base_stack_card.py | 8 +++`. That's not enough.

This isn't a flaw in the model. Sonnet 4.6 and Haiku 4.5 are both capable of writing excellent PR descriptions. The difference in our experiment wasn't model capability - it was whether the model had the content to extract from, or had to invent it.

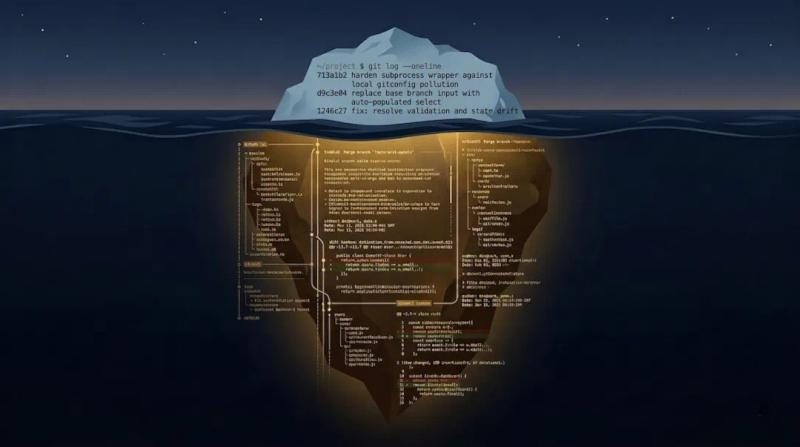

Native Claude Code received a `git diff --stat` and a `--oneline` commit log. It produced a reasonable-looking PR description. Mesh provided 106K tokens of structured XML - full file content, unified diffs, commit metadata, and a structured commit log. The same prompt, the same model, completely different output.
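If you want to see the difference on your own repository without any special tooling, the sketch below contrasts the two kinds of input using plain git commands. It is an approximation for experimentation, not a reproduction of what Claude Code or Mesh runs internally. The function names, the `base` argument, and the log format string are our own choices for this example.

```python
import subprocess


def thin_context_commands(base: str) -> list[list[str]]:
    """Roughly the thin inputs: a diffstat and one-line commit subjects."""
    return [
        ["git", "--no-pager", "diff", "--stat", f"{base}...HEAD"],
        ["git", "--no-pager", "log", "--oneline", f"{base}..HEAD"],
    ]


def rich_context_commands(base: str) -> list[list[str]]:
    """Full unified diffs plus commit bodies and metadata."""
    # %H hash, %an author, %aI ISO date, %s subject, %b body.
    log_format = "--format=%H%n%an%n%aI%n%s%n%b%n---"
    return [
        ["git", "--no-pager", "diff", "--no-color", f"{base}...HEAD"],
        ["git", "--no-pager", "log", "--no-color", log_format, f"{base}..HEAD"],
    ]


def run_all(commands: list[list[str]], repo: str = ".") -> list[str]:
    """Run each git command in `repo` and return its stdout."""
    return [
        subprocess.run(
            cmd, cwd=repo, capture_output=True, text=True, check=True
        ).stdout
        for cmd in commands
    ]


if __name__ == "__main__":
    # Assumes the current directory is a git repo with a "main" branch.
    thin = "\n".join(run_all(thin_context_commands("main")))
    rich = "\n".join(run_all(rich_context_commands("main")))
    print(f"thin: {len(thin)} chars, rich: {len(rich)} chars")
```

Even this rich variant omits full file content, so treat it as a lower bound on what a structured context document contains.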

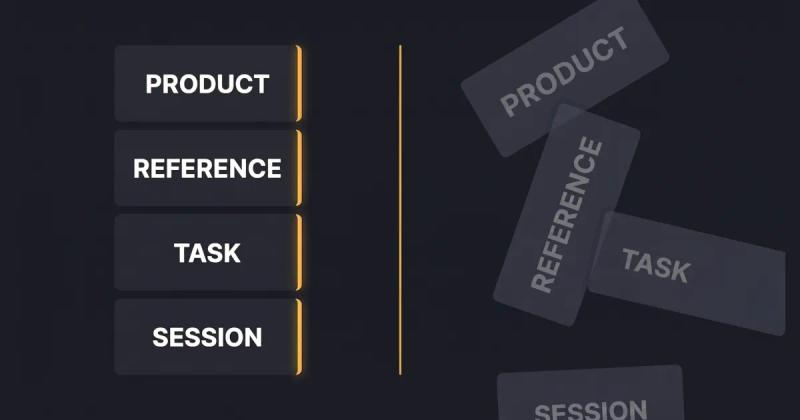

Mesh's PR Brief workflow is straightforward. You point it at a local repository, select a base branch from an auto-populated dropdown (populated from `get_git_branches()`, not free-text input - this prevents stale scan targets), and get a checklist of changed files and commits. You deselect anything irrelevant - generated files, lock files, assets you don't want in the context - and Mesh generates a structured XML document containing full file content, unified diffs, and a structured commit log with per-commit metadata.
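To give a feel for what "structured XML" means here, the following is a minimal sketch of assembling such a document with Python's standard library. The element and attribute names (`pr_brief`, `files`, `file`, `content`, `diff`, `commit_log`, `commit`, `subject`) are invented for this example and are not Mesh's actual schema. The `excluded` parameter stands in for the deselection step.

```python
import xml.etree.ElementTree as ET


def build_pr_brief(
    files: dict[str, str],
    diffs: dict[str, str],
    commits: list[dict[str, str]],
    excluded: frozenset[str] = frozenset(),
) -> str:
    """Assemble file content, diffs, and commit metadata into one XML string."""
    root = ET.Element("pr_brief")

    files_el = ET.SubElement(root, "files")
    for path, content in files.items():
        if path in excluded:
            continue  # e.g. lock files or generated assets
        file_el = ET.SubElement(files_el, "file", path=path)
        ET.SubElement(file_el, "content").text = content
        if path in diffs:
            ET.SubElement(file_el, "diff").text = diffs[path]

    log_el = ET.SubElement(root, "commit_log")
    for commit in commits:
        commit_el = ET.SubElement(
            log_el, "commit", sha=commit["sha"], author=commit["author"]
        )
        ET.SubElement(commit_el, "subject").text = commit["subject"]

    # ElementTree escapes <, >, and & in text, so source code stays intact.
    return ET.tostring(root, encoding="unicode")


xml_doc = build_pr_brief(
    files={"app.py": "if a < b: pass", "poetry.lock": "..."},
    diffs={"app.py": "+if a < b: pass"},
    commits=[{"sha": "abc123", "author": "Ben", "subject": "Add check"}],
    excluded=frozenset({"poetry.lock"}),
)
print(xml_doc)
```

The useful property is that each file's content sits next to its own diff, and each commit carries its metadata, so the model can navigate by structure instead of guessing at boundaries in a flat paste.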

The output for the feature in this experiment was a 380KB XML file: 106,120 tokens, 379,281 characters. That's the document Claude web chat received when it wrote the PR descriptions in Conditions B and C.

The economics are worth pausing on. Condition B (Mesh + Sonnet 4.6) and Condition C (Mesh + Haiku 4.5) used identical context. Haiku 4.5 costs a fraction of Sonnet 4.6 per token - and it produced a PR that Gemini ranked ahead of native Sonnet 4.6 by a substantial margin. For teams watching LLM API costs, this is the significant finding: when context quality is high, you can step down to a cheaper model without sacrificing output quality. The context is doing most of the heavy lifting. Model tier matters less than you'd expect when the input is rich enough.

The conclusion from our experiment: the gap between a mediocre AI-generated PR and an excellent one is not primarily a model selection problem. It's a context assembly problem. Better context enables better outputs from cheaper models - that's an improvement in both quality and cost simultaneously.

The template above is free. Use it today. It'll make your PR descriptions better regardless of what tool you use to fill it in - even if you fill it in manually.

What the template can't do is generate its own content. The Key Design Decisions section requires you (or your AI) to know why you built it the way you did. The Test Plan requires knowing what your failure states look like. The Bug Fixes section requires reading the actual diffs.

If you want an AI to fill this template accurately - not plausibly, accurately - it needs to see enough of your codebase to extract those answers rather than guess at them. That's the problem Mesh is built to solve.

HiveTrail Mesh is a standalone desktop application that acts as a just-in-time context engine, assembling structured XML from your local git repositories, Notion docs, and local files - and running a privacy scanner against the output before anything leaves your machine. Proprietary secrets, API keys, and internal paths get masked locally. Nothing is sent to a cloud service during context assembly.

Mesh is currently in beta. If you're a developer who writes PRs, generates commit messages, or uses LLMs for code work, join the beta here.

HiveTrail Mesh is a Windows desktop application that assembles token-optimized context for LLMs from local git repositories, GitHub issues and PRs, Notion, local files, and reusable context blocks.

Yes. Save the Markdown structure above as `.github/PULL_REQUEST_TEMPLATE.md` in GitHub, or configure it as a default description template in GitLab. Defining the structure in advance forces any AI tool, or a human author, to fill in the architectural decisions, bug fix callouts, and test plan rather than inventing its own format. The template works regardless of which model or tool you use to generate the content.
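A template only helps if the sections actually get filled in. As a rough sketch, the check below flags missing top-level sections and leftover square-bracket placeholders in a PR description. The function name, the section list, and the placeholder heuristic are our own assumptions. The heuristic ignores task-list checkboxes and Markdown links, but it will also flag legitimate bracketed text such as `list[str]`, so treat hits as prompts to look, not as failures.

```python
import re

REQUIRED_SECTIONS = [
    "Summary",
    "Key Design Decisions",
    "What's Changed",
    "Test Plan",
    "Testing",
]


def check_description(text: str) -> dict[str, list[str]]:
    """Report missing '###' sections and unfilled [placeholder] text."""
    # Match exactly three hashes; '####' sub-headings do not match.
    headings = {
        m.group(1).strip()
        for m in re.finditer(r"^###\s+(.+)$", text, re.MULTILINE)
    }
    missing = [name for name in REQUIRED_SECTIONS if name not in headings]

    # Bracketed text that is not a checkbox ([ ] or [x]) and not the
    # label of a Markdown link ([label](url)).
    placeholders = re.findall(r"\[(?![ xX]\])[^\]\n]+\](?!\()", text)
    return {"missing": missing, "placeholders": placeholders}


draft = "### Summary\n[2-3 sentences]\n### Test Plan\n- [ ] [Core scenario]: run\n"
print(check_description(draft))
```

This catches structural gaps only. Whether a filled-in Key Design Decisions section is accurate or merely plausible still requires a reviewer who knows the code.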

Most AI pull request generators work from thin context, typically a `git diff --stat` summary and a list of `--oneline` commit subjects. Without access to actual file content and unified diffs, the model cannot know why architectural decisions were made, which edge cases were handled, or what the tests actually cover. The result is a description that sounds professional but forces reviewers to do the interpretive work themselves. The problem isn't the model; it's what the model was given to work from.

In our blind evaluation, context quality mattered more than model selection. Claude Haiku 4.5, Anthropic's smallest, cheapest model, produced a PR description that outranked native Claude Code running Sonnet 4.6, purely because it received richer context. Gemini 3 Pro served as the independent evaluator in our test and proved effective at analytically comparing and synthesizing outputs across models. The practical conclusion: invest in context assembly before investing in a more expensive model tier.

LLM context assembly is the process of gathering, structuring, and packaging the source material an AI needs before generation, rather than letting the model decide what to look at. For pull requests, that means full file content, unified diffs, per-commit metadata, and structured commit logs, packaged in a format the model can navigate efficiently. Tools like HiveTrail Mesh automate this step: they extract the relevant git data, structure it as token-optimized XML, and let you deselect noise like lockfiles and generated assets before export. The result is a context payload the AI can extract from rather than guess at, which is what makes sections like Key Design Decisions and Test Plan accurate instead of plausible-sounding.

It depends on your workflow. Pasting raw code directly into a web-based AI chat sends proprietary implementation details, and potentially API keys or internal paths, to third-party servers. A secure approach runs a local privacy scanner against the assembled context before anything leaves your machine, masking secrets, PII, and internal identifiers at the source. HiveTrail Mesh includes this as a mandatory exit gate: git repository data is sanitized locally, and only the cleaned, structured context is passed to whichever model you use. The generation step happens in your LLM client of choice. The sensitive material never reaches it unmasked.
