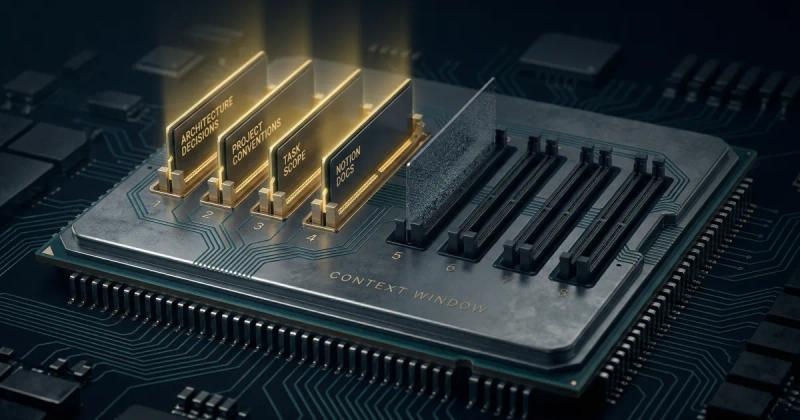

Context Engineering vs Prompt Engineering: What the Shift Means for Developers (2026)

Stop rewriting prompts. Learn when to use context engineering vs prompt engineering to optimize LLM context quality without complex RAG pipelines.

Why Your AI Prompts Keep Hitting LLM Context Window Limits and How to Right-Size Them

Hitting token limits mid-task is frustrating, costly, and avoidable. Here is why it happens across ChatGPT, Claude, and Gemini, plus a practical framework for right-sizing your context every time.

Read more about Why Your AI Prompts Keep Hitting LLM Context Window Limits and How to Right-Size Them.

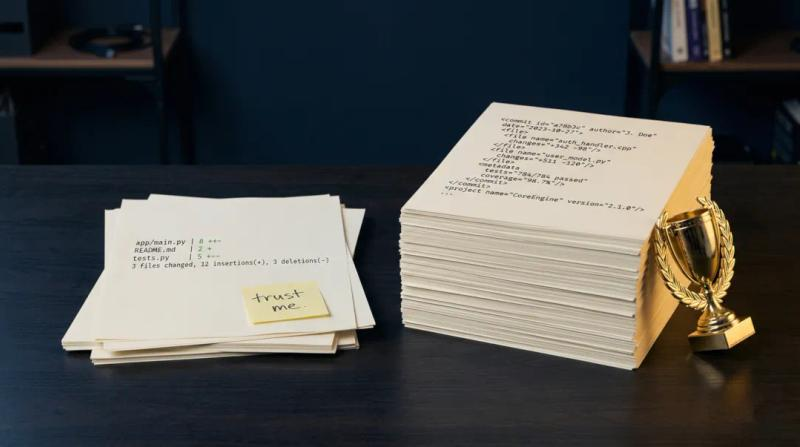

Claude Haiku 4.5 Outperformed Sonnet 4.6 on PR Writing: Context Was the Difference

Discover why Claude Haiku 4.5 outperformed Sonnet 4.6 at writing pull request descriptions, and why the way LLM context is assembled matters more than the model tier.

The Hidden Risk of Pasting Code into LLMs: How to Prevent API Key and PII Leaks

Every time you paste code into ChatGPT, you might be leaking API keys, PII, and trade secrets. Here's what the data shows and how to stop it before it happens.