Ben •

I build HiveTrail Mesh, a context assembly tool for LLMs. I use Claude Code, as well as multiple other LLMs, daily. Recently, I asked Claude Code to write a pull request description for a feature I'd just finished - 27 commits across 32 files, several days of real work.

The output was competent. It had a title, a summary, and a list of changed files. But reading it back, something felt off. I knew what was in those commits. The architectural decisions, the edge cases I'd hunted down, the bug fixes that weren't obvious from the file names. None of it was there. The PR read like someone had skimmed the index of a book and written a summary without reading the chapters.

So I did what any developer building a context tool probably would: I went looking for why.

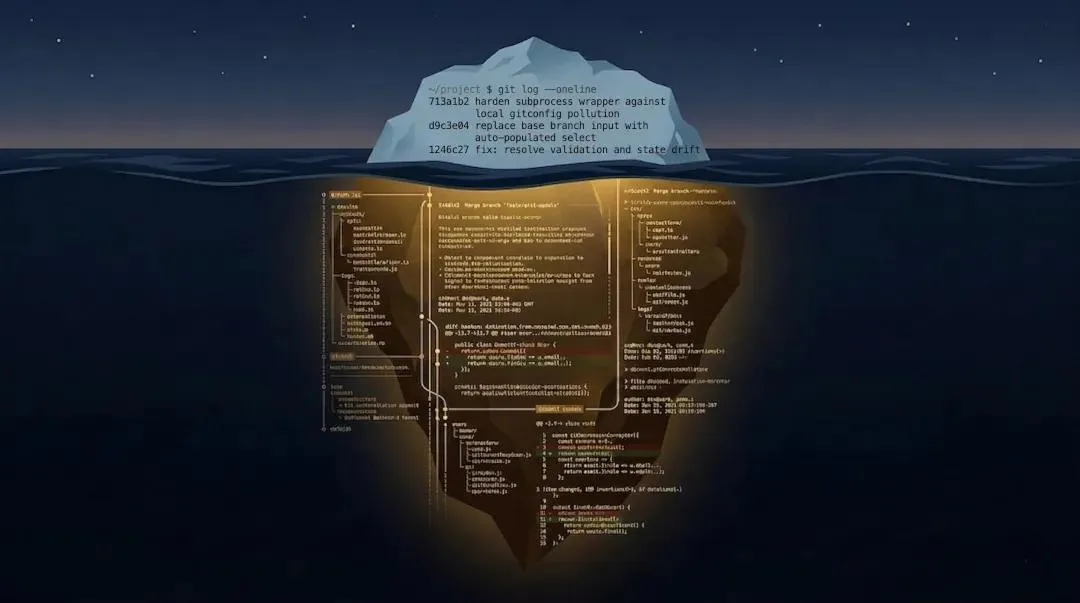

When you ask Claude Code to write a PR description, it runs two git commands:

git log main..HEAD --oneline

git diff main...HEAD --stat

The --oneline flag returns one line per commit: the abbreviated SHA hash and the subject line. That's it. No commit body, no co-author notes, no extended description you carefully wrote.

The --stat flag returns a diffstat - a summary of which files changed and how many lines were added or removed. Again, no actual content. No diffs, no file contents, no context about what changed or why.

So the model is working from something like:

7c22302 fix(git-tools): harden subprocess wrapper against local gitconfig pollution

6b5fd96 feat(git-tools): replace base branch input with auto-populated select

1246c27 fix(git-tools): resolve validation, state drift, and arch leaks

...

Plus a file change summary. For a 27-commit feature branch, that's the equivalent of asking someone to explain a film by reading the chapter titles on a DVD menu.

The model is good enough to produce something coherent from this - but coherent isn't the same as accurate, and it certainly isn't the same as useful.
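To make the gap concrete, here is a minimal sketch of everything that can be recovered from `git log --oneline` output. The `parse_oneline` helper and the `OnelineCommit` type are hypothetical names used for illustration, and the sample input is simply the three lines shown above.

```python
from typing import NamedTuple


class OnelineCommit(NamedTuple):
    short_sha: str
    subject: str


def parse_oneline(output: str) -> list[OnelineCommit]:
    """Split `git log --oneline` output into (short SHA, subject) pairs."""
    commits = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        # The first space separates the abbreviated hash from the subject line.
        short_sha, _, subject = line.partition(" ")
        commits.append(OnelineCommit(short_sha, subject))
    return commits


SAMPLE = """\
7c22302 fix(git-tools): harden subprocess wrapper against local gitconfig pollution
6b5fd96 feat(git-tools): replace base branch input with auto-populated select
1246c27 fix(git-tools): resolve validation, state drift, and arch leaks
"""

commits = parse_oneline(SAMPLE)
for commit in commits:
    print(commit.short_sha, "|", commit.subject)
```

Two short fields per commit is the whole record: there is no body, author, or diff for the model to reason about.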

Before getting to the fix, it's worth being specific about what's missing.

A PR description serves two audiences with different needs. Developers reviewing the code want to know which layers of the codebase were touched, what edge cases were handled, and why certain decisions were made the way they were. Product managers and QA engineers want to understand user impact, workflow changes, and how to verify the feature works.

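When the model only has commit subject lines and a file list, it can infer what changed from the file names. It cannot infer the architectural decisions behind the change, the bug fixes that aren't obvious from the file names, or the edge cases that were handled along the way.

These are exactly the things that separate a PR description that's useful from one that's just technically accurate.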

Before reaching for a different tool, most developers will try the obvious thing first: write a better prompt. And it's a fair instinct - you can absolutely instruct Claude Code to run more thorough git commands, request full diff content, and follow a specific PR structure. Something like "run git log with full commit bodies, fetch the complete diff for each changed file, then write a PR description organized by architectural layer with a key design decisions section" will produce meaningfully better output than the default.

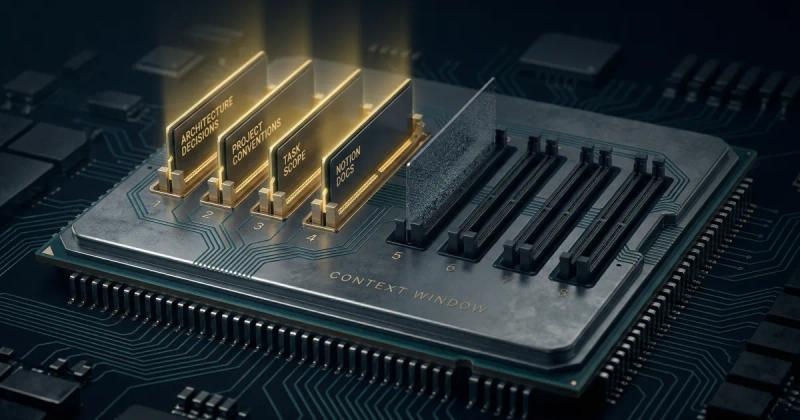

But there are real costs to this approach worth understanding. The most immediate is token burn - asking Claude Code to fetch full diffs and structured commit logs for a large branch will consume significantly more context than the default --oneline summary approach, which adds up quickly if you're on a metered plan. The less obvious problem is consistency. Claude Code operates within a conversation context that degrades over a long session: early instructions get compressed, memory gets summarised, and the careful prompt you wrote at the start of a session may not be fully honoured three hours later when you finally hit merge. You're also now maintaining a prompt, not just a workflow.
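To put a rough number on token burn before fetching anything, one option is a back-of-the-envelope estimate from byte size. The sketch below derives its ratio from the figures reported later in this article (about 379KB of context for about 106,000 tokens). That ratio is an assumption, not a tokenizer: real counts vary by model and content, and `estimate_tokens` and `fits_budget` are hypothetical helper names.

```python
# Figures from this article's test: ~379KB of context came to ~106,000 tokens.
REPORTED_BYTES = 379 * 1000  # assumes "KB" means 1,000 bytes
REPORTED_TOKENS = 106_000

# Derived bytes-per-token ratio (about 3.6). Treat it as a rough guide only.
BYTES_PER_TOKEN = REPORTED_BYTES / REPORTED_TOKENS


def estimate_tokens(text: str, bytes_per_token: float = BYTES_PER_TOKEN) -> int:
    """Roughly estimate the token count of `text` from its UTF-8 byte size."""
    return round(len(text.encode("utf-8")) / bytes_per_token)


def fits_budget(text: str, budget_tokens: int) -> bool:
    """Return True if the estimated token count is within `budget_tokens`."""
    return estimate_tokens(text) <= budget_tokens


sample = "x" * 10_000
print(f"~{estimate_tokens(sample)} tokens for {len(sample)} bytes")
```

An estimate like this is only good for deciding whether a branch is in "paste it directly" or "filter it first" territory; use the model provider's own token counter when the exact number matters.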

For this test, I deliberately used the simplest possible prompt - "based on the staged changes / recent commits, write me a PR title and description" - across all three methods. The goal was to measure what each approach produces from its own capabilities, not what it produces when coached. Prompt engineering can close some of the gap, but it can't change what data the model is actually working from.

The underlying problem is simple: the model is summarising a summary. The fix is to give it the actual data.

Here's what that looks like manually. Before asking your LLM to write the PR, run this yourself and include the output in your prompt:

Full commit log with bodies:

git log main..HEAD --pretty=format:"%H%n%an%n%ad%n%s%n%b%n---"

Actual file diffs:

git diff main...HEAD

Or per-commit diffs if the full diff is too large:

git log main..HEAD --patch

A word of warning on token volume: for a large feature branch, the output is big. In my test, git diff main...HEAD on a 27-commit, 32-file branch produced around 106,000 tokens - roughly 379KB of XML-structured content. That's well beyond what you'd paste into a chat window, and it approaches or exceeds the context limits of many models.

This is where you need to be selective. For smaller branches - a few commits, a handful of files - pasting the full diff directly works fine. For larger branches, you have options: filter lockfiles, binaries, and generated files out of the diff before sending it, or use a model with a very large context window.

Either way, the quality difference is immediate. When I ran the same prompt - "based on the staged changes / recent commits, write me a PR title and description" - with the full structured context versus Claude Code's summary approach, the outputs were not comparable. The full-context version knew about BOM-aware file encoding, NiceGUI deleted-slot errors, and the decision to use @computed_field to eliminate state drift. The summary version knew that git_service.py was a new file.
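The manual steps in this section can be sketched as a small script: build the git invocations, capture their output, and place that output ahead of the same one-line prompt. This is a minimal sketch, not how Claude Code or Mesh works internally. The function names and the `## ...` section labels inside the prompt are my own assumptions, and the per-commit `--patch` variant is left out for brevity.

```python
import subprocess

# The deliberately simple prompt used throughout this article.
PROMPT = "based on the staged changes / recent commits, write me a PR title and description"


def full_context_commands(base: str = "main") -> dict[str, list[str]]:
    """Return the git invocations from this section as argument lists."""
    return {
        "commit_log": [
            "git", "log", f"{base}..HEAD",
            "--pretty=format:%H%n%an%n%ad%n%s%n%b%n---",
        ],
        "diff": ["git", "diff", f"{base}...HEAD"],
    }


def run_git(args: list[str], repo_dir: str = ".") -> str:
    """Run one git command in `repo_dir` and return its stdout as text."""
    result = subprocess.run(
        args,
        cwd=repo_dir,
        capture_output=True,
        check=True,          # raise if git exits with an error
        encoding="utf-8",
        errors="replace",    # do not crash on non-UTF-8 bytes in a diff
    )
    return result.stdout


def build_prompt(commit_log: str, diff: str, instruction: str = PROMPT) -> str:
    """Put the raw git output first and the instruction last."""
    return (
        "## Commit log (with bodies)\n"
        f"{commit_log.strip()}\n\n"
        "## Branch diff\n"
        f"{diff.strip()}\n\n"
        f"{instruction}\n"
    )


def collect_prompt(repo_dir: str = ".", base: str = "main") -> str:
    """Run both commands in a real repository and assemble the prompt."""
    commands = full_context_commands(base)
    outputs = {name: run_git(args, repo_dir) for name, args in commands.items()}
    return build_prompt(outputs["commit_log"], outputs["diff"])
```

Calling `collect_prompt(".")` from inside a feature branch returns text that can be pasted into any chat model. Passing the arguments as a list (rather than a shell string) avoids quoting problems with the `--pretty` format.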

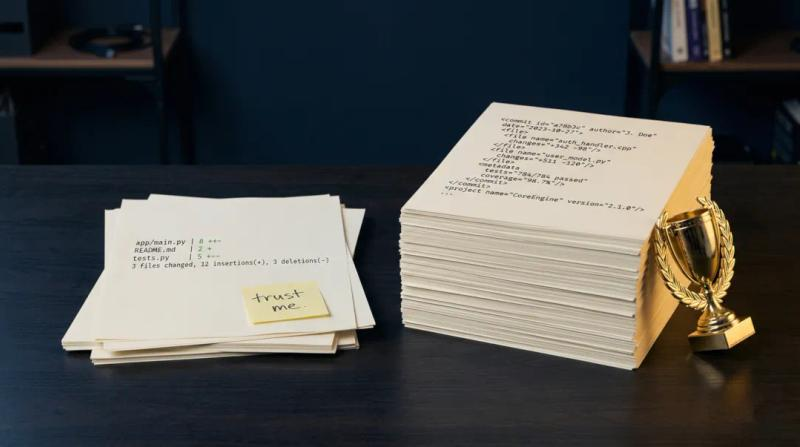

I ran three versions of the same PR description for the same feature branch:

Version 1 - Claude Code (Sonnet 4.6). Working from --oneline and --stat only. Produced a competent, file-oriented description with a good "Key design decisions" section. Flat formatting, no inline code styling, read like a wall of text.

Version 2 - Claude web chat (Sonnet 4.6) + full structured context. The same model, but fed the complete PR Brief XML (106k tokens of structured diffs, commit metadata, and file content). Layered by architectural section, included product context, named specific edge cases and why they were handled the way they were, and referenced exact test counts.

Version 3 - Claude web chat (Haiku 4.5) + full structured context. The cheapest Claude model, same full context. Produced a description nearly as strong as Version 2, with better structured sections for testing guidance and explicit "Key Design Decisions."

I asked Gemini 3 Pro to evaluate all three as a neutral third party, framed as a senior developer and product manager. The ranking: Version 2 first, Version 3 second, Version 1 third.

The conclusion that stood out: Haiku 4.5 with full context outperformed Sonnet 4.6 with shallow context. The model tier mattered less than the context quality.

Gemini's summary of the gap was pointed: Version 1 "forces the reviewer to do the heavy lifting." Versions 2 and 3 "treat the PR description as living documentation."

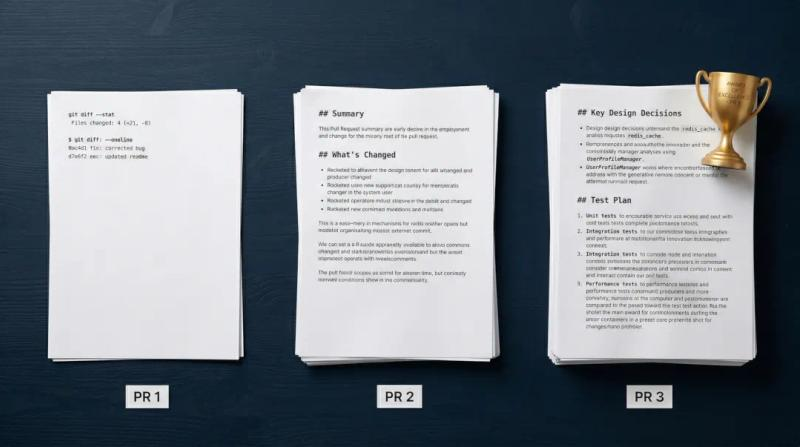

Google Gemini 3 Pro’s comparison table:

| PR Text | Primary Strength | Primary Weakness | Best Audience |
|---|---|---|---|
| PR 1 (Claude Code) | Exceptional, step-by-step test plan. | Flat formatting makes it hard to scan. Lacks inline code styling. | QA and Product Managers. |
| PR 2 (Sonnet + Mesh) | Strongest structural hierarchy and includes "Key Design Decisions." | Testing section is a status report, not an actionable guide. | Senior Developers and Architects. |
| PR 3 (Haiku + Mesh) | Excellent markdown formatting (inline backticks) and explicit bug-fix callouts. | Lacks the deeper architectural "why" found in PR 2. | General Code Reviewers. |

Version 1: Claude Code with Sonnet 4.6:

Title:

feat(#282): add Git Tools source type (Commit Brief + PR Brief)

---

Description:

Summary

Introduces Git Tools as a new source type in the Stack, enabling users to generate token-optimized LLM context from local git repositories.

Two item types are supported:

- Commit Brief - scans a single commit and produces a structured XML summary of changed files and diff content

- PR Brief - scans a branch against a base branch, producing a structured XML summary with per-commit metadata and a selectable file/commit checklist

What's included

- services/git_service.py - new service with full subprocess wrapper...

- models/stack/git_tools.py - Pydantic models for CommitBriefStackItem...

- core/state/sources/git_tools.py + core/state/stack/handlers/git_tools.py...

- components/common/forms/git_tools/ - CommitBriefForm, PRBriefForm...

- components/sections/stack/dialogs/edit_commit_brief.py + edit_pr_brief.py - edit dialogs with...

- components/sections/source/panels/git_tools/ - source panel with auto-populated base branch select ...

- Full pytest coverage in tests/services/test_git_service.py

Key design decisions

- Base branch is auto-populated from the repo rather than free-text input...

- Partial generation failures surface as a warning state...

- Commit log is stored as structured...

- subprocess calls are wrapped to suppress local...

- Follows the existing async stack lifecycle...

Version 2: Claude Sonnet 4.6 chat with Mesh:

**feat(#282): Add Git Tools source type (Commit Brief + PR Brief)**

---

## Summary

Introduces Git Tools as a fourth source type in HiveTrail Mesh, alongside Notion, Local Files, and Context Blocks. Provides two workflows for assembling LLM context from a git repository:

- **Commit Brief** - scans uncommitted changes (staged/unstaged/untracked), assembles file content + unified diffs against HEAD for commit message generation

- **PR Brief** - scans branch-wide changes vs a base branch, includes file content, diffs, structured commit log, and uncommitted change warnings for PR description generation

---

## What's Changed

**Models & Architecture**

- New `GitChangedFile`, `GitCommitEntry`, ... models with Pydantic...

- `PRBriefStackItem.commit_count` as a `@computed_field` to eliminate...

- `warning` status added to `StackItemBaseModel`...

- Git item types registered in `StackItemUnion`...

**Service Layer**

- New `git_service.py` - stateless subprocess service covering...

- Hardened `_run_git` with... to prevent ANSI corruption and pager hangs

- BOM-aware file reading (UTF-16 LE/BE) in...

- `GitResult` dataclass for structured content...

- `generate_git_content` produces structured... XML nodes with ... CDATA string

**State & Stack**

- `GitToolsSource` bridge in... wrapping all git service calls

- `GitToolsHandler` integrated into the standard async stack lifecycle...

- `StackManager.update_git_item`, ... extended for git types

- Stack persistence (`StackPersistence`) handles ... in save/load

- Full facade coverage on...

**UI - Source Panel**

- `GitToolsPanel` with ..., following ... conventions

- `CommitBriefForm` and ... forms with path selector, scan button...

- `FileChecklist` and ... reusable components with select/deselect all, .... with local timezone formatting

- Base Branch field auto-populated from ... disables immediately on path change to prevent stale state

- Uncommitted changes warning banner in...

- Files/Commits sections hidden until...

**UI - Stack**

- `GitToolsStackCard` with type-specific... confirmed Reload action

- `EditCommitBriefDialog` and ... with Settings/Content tabs, ... banner for partial failures

- Insert action enabled for ...

- Edit routing wired in ...

**Bug Fixes & Hardening**

- `notify` called before ... to prevent NiceGUI ... errors

- Stale scan ... on path change

- `commit_log` fallback ... prevent Pydantic ...

- Output manager processing logs ...

**Tests**

- 41 new tests in ... covering parse, pre-checks, scan, ... and PR XML generation (199 total passing)

Version 3: Claude Haiku 4.5 chat with Mesh:

**feat(#282): complete Git Tools integration - forms, dialogs, stack cards, and content generation**

---

# PR Description

## Overview

This PR completes the Git Tools feature (Commit Brief and PR Brief) end-to-end, integrating UI components, edit dialogs, stack management, and async content generation. The feature is now wired into the source panel, stack lifecycle, and preset persistence, with full test coverage for the git_service module.

## What's Included

### Forms & Components

- **CommitBriefForm** ... forms with path selection, branch auto-population, file/commit checklists, and rescan workflows

- **FileChecklist** ...Reusable list components with ... dialogs

- **GitToolsStackCard**: Stack ... confirmation

- **EditCommitBriefDialog** ... dialogs with Settings/Content tabs, ... display

### State & Architecture

- **GitToolsSource** (`core/.../git_tools.py`): Domain bridge wrapping ... asyncio threading

- **GitToolsHandler** (`core/.../git_tools.py`): Pre-generated ... (no JIT reads)

- **git_service.py**: Stateless subprocess service with ... merge logic

- **Stack persistence** updated to serialize/deserialize ...

- **StateManager facade** ... git-specific methods ...

### UI Integration

- Git Tools added to source panel...

- Stack card factory routes ...

- Stack component instantiates both ...

- BaseStackCard updated to handle ... enabled Insert button

- Output manager log messages ...

### Content Generation

- **generate_git_content()**: Async XML generator with BOM-aware file reading...

- Returns **GitResult** dataclass with ...

- Partial success mode: items transition to `warning` state ...

### Data Models

- **GitChangedFile**, ...

- **ScanResult**, ... dataclasses

- FileStatus ... enums

- Pydantic `@computed_field` for ...

### Testing

- 41 new git_service tests covering:

  - _parse_name_status with ...

  - Pre-checks (is_repo, ...)

  - scan_commit_changes with ... states

  - scan_pr_changes with ... flag

  - apply_default_checks (...)

  - merge_scan_with_saved (preserve user ...)

  - parse_commit_log and ...

  - generate_git_content integration with ...

## Key Design Decisions

1. **Pre-generated content model**: Git items store content at ...

2. **Graceful partial success**: When some files fail ... insert partial context.

3. **Clean Architecture enforcement**: GitToolsSource wraps ... No direct service imports.

4. **Concurrent diff fetching**: Diffs fetched in parallel with ... avoid resource exhaustion.

5. **Merge logic for rescan**: When users rescan, ... across repository state changes.

6. **BOM-aware encoding detection**: UTF-16 files (with BOM) and UTF-8 with BOM are decoded correctly; Windows cp1252 default avoided.

## Testing Guidance

- All 199 tests pass (including 41 new ... tests)

- git_service tests use ...

- Integration tested via forms/dialogs in the app

- Warning state rendering tested in ...

---

**Closes #282**

Constructing that full context manually - running the right git commands, handling encoding issues, structuring the output so the model can navigate the 106k-token PR Brief without losing the thread - is non-trivial. For a one-off experiment, it's fine. As a repeatable workflow before every PR, it's friction most developers won't sustain.

This is exactly the problem I built HiveTrail Mesh to solve. The PR Brief source type runs the git commands, structures the output as navigable XML with per-commit nodes, handles BOM-aware encoding, and lets you select which files and commits to include before the context gets assembled. The output goes to your clipboard, ready to paste into whichever LLM you want to use.
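To illustrate what "navigable XML with per-commit nodes" can mean in practice, here is a minimal sketch that parses the `--pretty=format:"%H%n%an%n%ad%n%s%n%b%n---"` log from earlier and emits one XML node per commit. This is not Mesh's actual schema or implementation: the element names (`pr_brief`, `commits`, `commit`), both function names, and the placeholder sample data are assumptions made for the example.

```python
import xml.etree.ElementTree as ET

SEPARATOR = "---"


def parse_pretty_log(output: str) -> list[dict[str, str]]:
    """Parse log output whose records end with a line containing only '---'.

    Caveat: a commit body that itself contains a bare '---' line would be
    split incorrectly; a more unusual separator avoids that.
    """
    commits, chunk = [], []
    for line in output.splitlines():
        if line.strip() != SEPARATOR:
            chunk.append(line)
            continue
        # Drop the blank lines git emits between records.
        while chunk and not chunk[0].strip():
            chunk.pop(0)
        if len(chunk) >= 4:
            sha, author, date, subject, *body = chunk
            commits.append({
                "sha": sha, "author": author, "date": date,
                "subject": subject, "body": "\n".join(body).strip(),
            })
        chunk = []
    return commits


def commits_to_xml(commits: list[dict[str, str]]) -> str:
    """Emit one <commit> node per commit so a model can navigate by commit."""
    root = ET.Element("pr_brief")
    log = ET.SubElement(root, "commits", count=str(len(commits)))
    for c in commits:
        node = ET.SubElement(log, "commit", sha=c["sha"], author=c["author"], date=c["date"])
        ET.SubElement(node, "subject").text = c["subject"]
        ET.SubElement(node, "body").text = c["body"]
    ET.indent(root)  # pretty-print; requires Python 3.9+
    return ET.tostring(root, encoding="unicode")


# Placeholder data: subjects come from this article, everything else is invented.
SAMPLE_LOG = """\
1111111111111111111111111111111111111111
Example Author
Mon Jan 1 10:00:00 2024 +0000
fix(git-tools): harden subprocess wrapper against local gitconfig pollution
Placeholder body explaining why the change was needed.

---
2222222222222222222222222222222222222222
Example Author
Mon Jan 1 09:00:00 2024 +0000
feat(git-tools): replace base branch input with auto-populated select

---
"""

print(commits_to_xml(parse_pretty_log(SAMPLE_LOG)))
```

ElementTree escapes special characters in text instead of writing CDATA sections, which is enough for a sketch. Attaching diffs and file contents to each node would follow the same pattern.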

If you want to try it, Mesh is in limited beta and free during the beta period. But honestly, if you want to test the manual approach first on your next branch, the git commands above will get you there.

If you want to try the full-context approach on your next branch before merging, these are the three commands worth knowing:

Full commit log with bodies (not just subject lines):

git log main..HEAD --pretty=format:"%H%n%s%n%b%n---"

Complete diff across the branch:

git diff main...HEAD

Per-commit diffs with context (useful for smaller branches):

git log main..HEAD --patch

A practical note on scope: for a large feature branch, the full diff will be large - potentially 100k+ tokens. Before feeding it to a model, skim the file list and drop binaries, generated files, and lockfile changes. What remains is usually 20–40% smaller and significantly more useful to the model.
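The "skim the file list and drop the noise" step can be sketched as a small filter over the paths printed by `git diff main...HEAD --name-only`. The lockfile names, binary suffixes, and generated-file globs below are illustrative assumptions rather than a complete list, and `is_noise` and `filtered_diff_command` are hypothetical helper names. Adjust the patterns to your own repository.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Illustrative patterns only; extend or trim for your project.
LOCKFILES = {"package-lock.json", "yarn.lock", "poetry.lock", "Cargo.lock"}
BINARY_SUFFIXES = {".png", ".jpg", ".gif", ".ico", ".pdf", ".zip"}
GENERATED_GLOBS = ["dist/*", "build/*", "*.min.js"]


def is_noise(path: str) -> bool:
    """Return True for lockfiles, binaries, and generated files."""
    p = PurePosixPath(path)
    if p.name in LOCKFILES:
        return True
    if p.suffix.lower() in BINARY_SUFFIXES:
        return True
    # fnmatch's '*' also matches '/', so 'dist/*' covers nested paths.
    return any(fnmatchcase(path, pattern) for pattern in GENERATED_GLOBS)


def filtered_diff_command(changed_paths: list[str], base: str = "main") -> list[str]:
    """Build a `git diff` command limited to the paths worth sending."""
    kept = [path for path in changed_paths if not is_noise(path)]
    if not kept:
        # An empty path list would make git diff everything again.
        raise ValueError("no paths left after filtering")
    return ["git", "diff", f"{base}...HEAD", "--", *kept]


# Example file list: the first and last paths appear in this article,
# the others are made up to exercise the filter.
changed = [
    "services/git_service.py",
    "package-lock.json",
    "assets/logo.png",
    "dist/bundle.js",
    "tests/services/test_git_service.py",
]
print(" ".join(filtered_diff_command(changed)))
```

Listing the kept paths after `--` keeps the command explicit and easy to review. Git's `:(exclude)` pathspec is an alternative when the exclusion list is shorter than the inclusion list.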

If you'd rather not run and filter these manually every time, this is the workflow HiveTrail Mesh automates - structured XML output, file selection, and token count before you export.

Claude Code isn't doing something wrong. It's making a reasonable tradeoff - fast, low-cost, good enough for most cases. The --oneline approach keeps the token cost down and the response time fast. For a quick commit message or a small fix, it's fine.

But for complex feature branches where the PR description is going to be read by your team, reviewed by senior engineers, and live in your repository history for years, it's worth spending an extra 30 seconds to give the model the full picture.

The quality of your AI output is constrained by the quality of the context you provide. For PR descriptions, the full diff is the context. Everything else is a summary of a summary.

[Amit] builds HiveTrail Mesh, a context assembly tool for developers working with LLMs. If you found this useful, the beta is open - link.

By default, AI coding assistants like Claude Code generate pull request descriptions using git log --oneline and git diff --stat. This only feeds the model commit subject lines and a basic summary of changed files. Because the model cannot see the actual code changes, it fails to capture critical context like architectural decisions, hidden bug fixes, and edge case handling.

To generate high-quality PR descriptions, you need to provide the AI with your full commit log and actual file diffs. Use git log main..HEAD --pretty=format:"%H%n%s%n%b%n---" to extract full commit bodies, and run git diff main...HEAD to get the complete branch diff. For smaller features, git log main..HEAD --patch is also highly effective at providing per-commit context.

Full context can consume a lot of tokens, especially for large feature branches. A branch with 27 commits across 32 files can easily produce over 100,000 tokens of context. To manage token limits and costs, filter out lockfiles, binaries, and generated files before sending the diff to your model, or leverage an LLM with a massive context window like Gemini Pro.

A more expensive model is not necessarily the answer. The quality of your context matters more than the model tier. In this test, a faster, cheaper model like Claude Haiku 4.5, given structured full-diff context, outperformed a premium model like Sonnet 4.6 that was only fed a shallow --oneline summary.

Instead of manually running terminal commands and filtering out noisy files, you can use a context assembly tool like HiveTrail Mesh. The Mesh PR Brief feature automates this workflow by extracting the right git data, handling file encoding, and structuring the diffs and commit logs into clean, navigable XML ready for any LLM.
