Ben •

Ben •

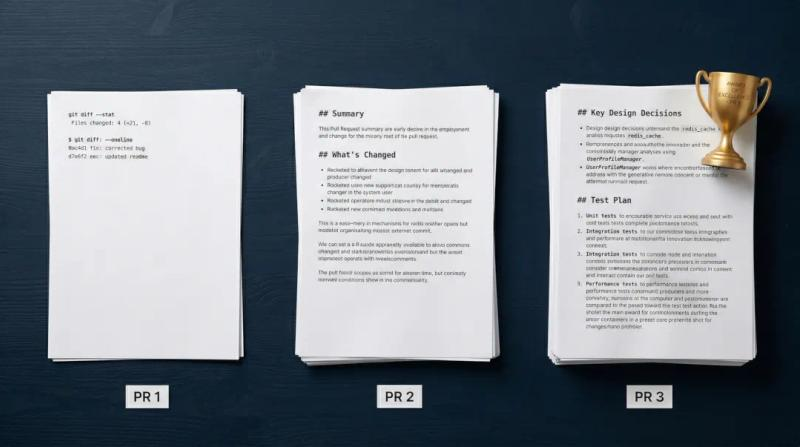

I ran the same prompt on three different setups and had Gemini 3 Pro evaluate the results blind.

The setup using Claude Haiku 4.5 - Anthropic's smallest, cheapest model - produced a better pull request description than Claude Code running Sonnet 4.6.

Before I explain why, a transparency note: to keep this objective, I didn't grade these myself. I handed all three outputs to Gemini 3 Pro and asked it to evaluate them from the perspective of a senior developer and product manager. I agree entirely with its verdict.

The reason Haiku won has nothing to do with Haiku being a better model than Sonnet. It isn't. The reason is that Haiku was given something Sonnet wasn't: the actual evidence.

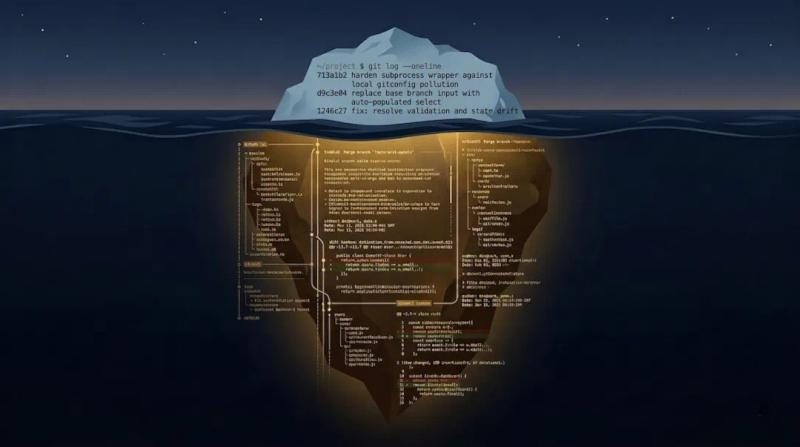

When I asked Claude Code (Sonnet 4.6) to write a PR title and description for a completed feature branch, it ran two commands:

git log main..HEAD --oneline

git diff main...HEAD --statIf you're not familiar with these flags: --oneline returns the abbreviated commit SHA and the subject line of each commit message. That's it - no body, no diff, no file content. --stat returns a summary of which files changed and how many lines were added or removed. Also no actual content.

The result was a 61K token session that cost $0.12 and completed in about 25 seconds. Three entries in the session log. Claude Code was fast, cheap, and working from a diffstat.

Now here's what the Haiku session saw: a 380KB structured XML file containing the full content of every changed file, unified diffs for every file, per-commit metadata with author and timestamp, a structured commit log, and uncommitted change warnings. 106,120 tokens. The same feature branch, assembled into a document designed to give a model everything it needs to reason about the change.

The difference isn't token count. It's that one setup asked the model to reconstruct what happened from a summary. The other gave it the primary source material.

The gap in output quality shows up most clearly in three specific places.

Product context. The Claude Code output refers to "the Stack" without explanation. A developer already working in this codebase knows what that means. A reviewer who doesn't is left to guess. The Haiku output opens with: "Introduces Git Tools as a fourth source type in HiveTrail Mesh, alongside Notion, Local Files, and Context Blocks." Same fact, but the second version works for anyone reading the PR - now or six months from now in the commit history.

Workflow accuracy. The Claude Code output describes the Commit Brief feature as something that "scans a single commit." That's not quite right. Commit Brief scans uncommitted changes - staged, unstaged, and untracked files - to help you write a commit message for work you haven't committed yet. It's a subtle distinction, but it's exactly the kind of thing a model gets wrong when it's reasoning from a commit log rather than reading the actual implementation. Haiku got it right because it read the implementation. A diffstat will never surface that kind of semantic precision - and neither will any model reasoning from one.

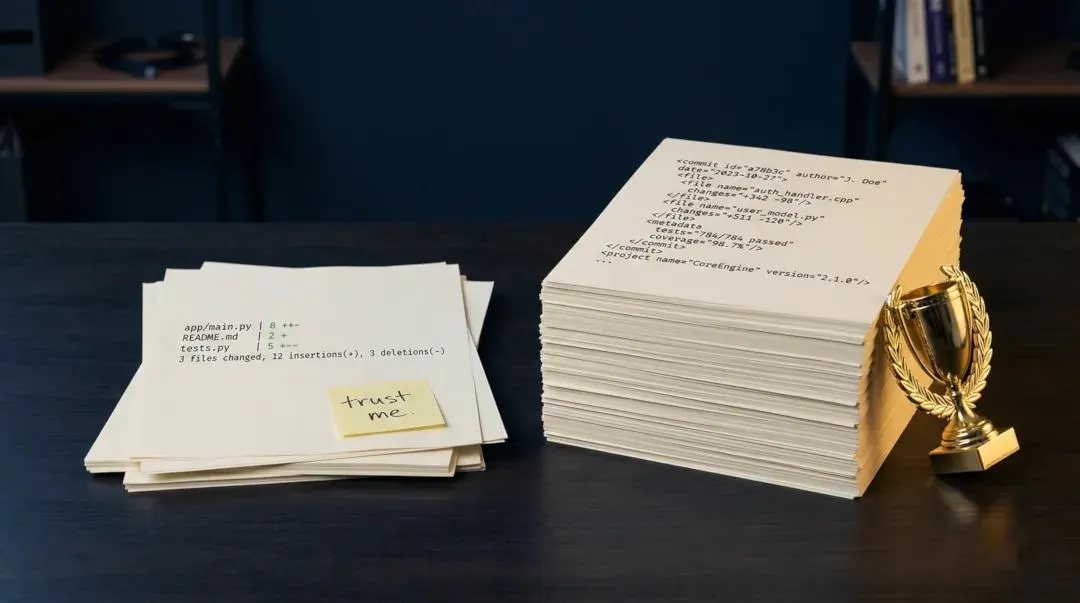

Test evidence. The Claude Code output states: "Full pytest coverage in tests/services/test_git_service.py." The Haiku output states: "41 new tests in test_git_service.py covering parsing, pre-checks, scan, default checks, save-merging, and XML generation. 199 total tests passing." One is an assertion. The other is evidence. When Gemini evaluated these, it called the Claude Code version a "trust me" statement and said the Haiku version "brings the receipts." That's not a model intelligence gap. Claude Code just didn't see the test file.

Gemini evaluated three outputs in total: Claude Code + Sonnet 4.6, the XML context + Haiku 4.5, and the XML context + Sonnet 4.6. The full ranking:

Sonnet with the full context ranked first, which is expected. But Haiku with the full context ranked second - above Sonnet without it. The model tier mattered less than the context quality.

Here's where the economics get interesting. Haiku is dramatically cheaper than Sonnet. Combined with prompt caching absorbing most of the input cost, the Haiku generation cost effectively nothing. You get a senior-level PR description for pennies - not by paying for a smarter model, but by changing what you feed it.

Claude Code is a general-purpose coding assistant optimizing for speed, cost, and interactivity across hundreds of different tasks. Running a full branch diff for every PR request would be slow and expensive, and most of the time unnecessary. The context assembly tradeoff it makes is reasonable for a general tool.

The problem is that PR description quality is directly proportional to how much of the change the model can actually see. A diffstat tells you what changed. It doesn't tell you why, how the pieces fit together architecturally, what edge cases were handled, or what the test coverage actually covers. When you ask a model to write a PR from a diffstat, you're asking it to reconstruct the full picture from a thumbnail.

The fix is to separate context assembly from text generation. Stop letting your general-purpose AI coding assistant decide what context matters. Assemble the context yourself - or use a specialized tool to do it - and hand that to whatever model you want to use.

Concretely: a PR Brief for this feature branch was 380KB of structured XML. Feeding that into Claude web chat with Haiku 4.5 and a one-sentence prompt produced the second-ranked output in a three-way evaluation. The generation step cost effectively nothing. The quality came from the context.

This is what HiveTrail Mesh does for the context assembly step. It generates a PR Brief - structured XML containing file content, diffs, commit metadata, and checklist controls so you can include or exclude specific files and commits - from any local git repository. You take that output into any model you want: Claude web chat, Gemini, GPT-4o, or back into Claude Code via file reference. The generation step is your choice. The context assembly is handled.

Look at the commands your AI tool actually ran to generate your last pull request description. Most tools log this. If you wouldn't sit down and write a PR description manually from that output - if you'd want to open a few files, read through the diffs, check the test coverage - then you're asking the model to do something you wouldn't do yourself, with less information than you'd give yourself.

The model tier you're paying for doesn't change the quality of the raw material it's reasoning from. Context does.

If you're writing PRs for a project you care about, give the HiveTrail Mesh beta a look .

The difference wasn't the intelligence of the AI model, but the quality of the context it received. Claude Code running Sonnet 4.6 was only given a high-level git diff summary (a diffstat). In contrast, Haiku 4.5 was provided with a comprehensive 380KB XML file containing full file contents, unified diffs, and commit metadata. When an LLM has access to the actual primary source material, smaller models can easily outperform larger models that are forced to guess from summaries.

General-purpose AI coding assistants optimize for speed and cost across many different tasks, meaning they often rely on abbreviated summaries to save tokens. While this is fast, a basic diffstat leaves out crucial architectural context, edge cases, and test coverage details. To get high-quality AI PR descriptions, you must separate context assembly from text generation so the model can see the full scope of your code.

The most effective approach is to use a specialized "Just-in-Time" context engine like HiveTrail Mesh to handle the context assembly step. HiveTrail Mesh scans your local git repository to gather file content, diffs, and commit metadata, formatting it into a token-optimized structured XML document. You can then feed this complete PR Brief into any LLM of your choice to generate an accurate, senior-level description.

Yes. High-fidelity context often requires linking code changes back to the original product requirements. HiveTrail Mesh bridges this gap by synthesizing unstructured data from local files, Git histories, and Notion databases into a single context stack. Furthermore, with integrations to pull GitHub Issues and Pull Requests directly into the context assembler, you can ensure your AI model understands the exact bug or feature request driving the PR.

Not necessarily. While passing over 100,000 tokens typically increases API costs, using a smaller model like Claude Haiku 4.5, combined with prompt caching, absorbs most of the input cost. By providing a rich, pre-assembled context file, the Haiku generation step costs nothing effectively while producing a higher-quality result than an expensive model running on limited context.

Master the vocabulary of AI agents with this context engineering glossary. Discover 22 key terms, from context rot to attention budgets, to build better apps.

Read more about The Context Engineering Glossary: 22 Terms for AI Developers

Discover why git log --oneline starves your AI of context. Learn how feeding Claude full git diffs creates detailed, architecturally accurate pull request descriptions.

Read more about Why git log --oneline is Killing Your AI-Generated PRs

We had Gemini blind-judge three Claude-generated pull requests. Here is the exact AI pull request template it built, and why rich code context is essential.

Read more about We Had Gemini Blind-Judge Three Claude-Generated Pull Requests. Here's the Template It Built.