Ben •

Ben •

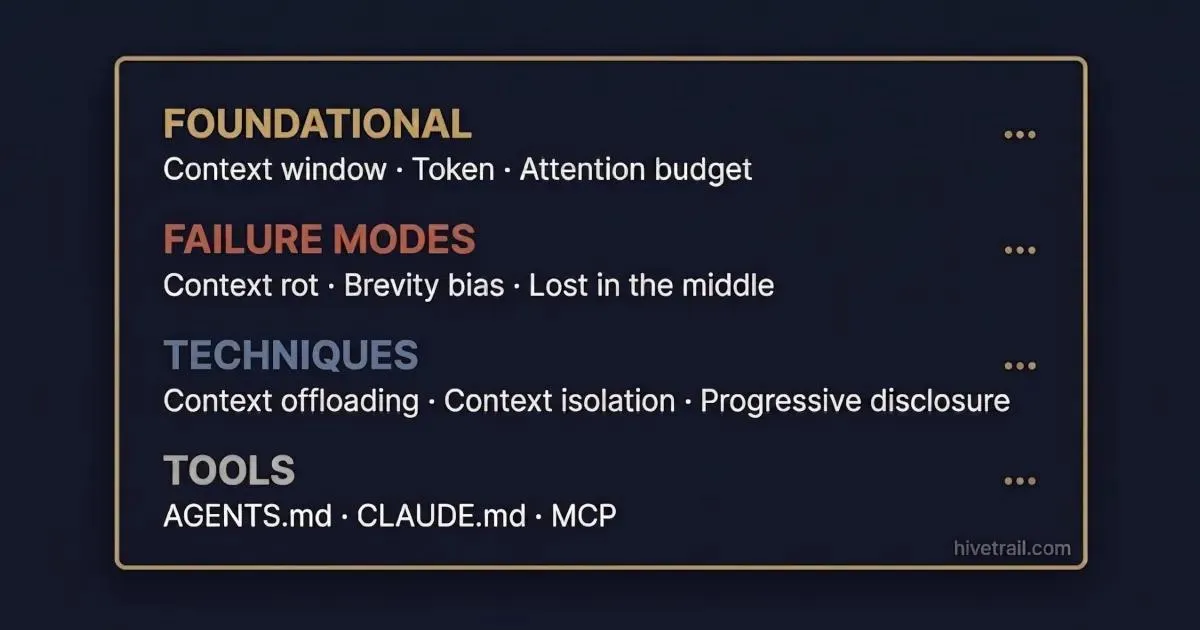

Context engineering has developed a precise vocabulary over the past 18 months. Some terms came from Anthropic's engineering team. Some from academic research. Some from the LangChain and Manus practitioner community. They often overlap, occasionally conflict, and are rarely defined in one place.

This context engineering glossary collects 22 of the most-used terms. Each definition is short, links to source material, and points to a deeper HiveTrail post for readers who want practitioner guidance.

This is a reference, not a read-through. Ctrl-F or jump to the category you need. The table of contents below links directly to each entry.

Four categories cover the field from bedrock to frontier: Foundational concepts (the vocabulary you need before everything else), Failure modes (the specific problems context engineering exists to solve), Techniques and patterns (the practical moves practitioners use), and Tools and standards (the specific artifacts that appear in real context engineering work). Each entry follows the same structure: one-sentence definition, 2–4 sentences of elaboration with origin attribution, a source link where one exists, and a link to deeper HiveTrail content where available.

The five terms below underpin every other entry in this glossary. Readers already fluent in tokens and context windows can skip ahead; for everyone else, these definitions establish the shared ground.

One-sentence definition: The discipline of deliberately shaping what information an AI model sees before and during a task, to produce reliable, high-quality output.

Elaboration: The term was popularized in 2025 as the successor concept to prompt engineering. Anthropic formalized it as the art and science of curating what will go into the limited context window from a constantly evolving universe of possible information. Where prompt engineering asks "how do I phrase this request?", context engineering asks "what does the model need to know to succeed?" The shift in framing moves the unit of analysis from a single instruction to the entire information environment the model inhabits.

Source: Anthropic's "Effective Context Engineering for AI Agents"

Deeper reading: Context Engineering vs. Prompt Engineering for Developers

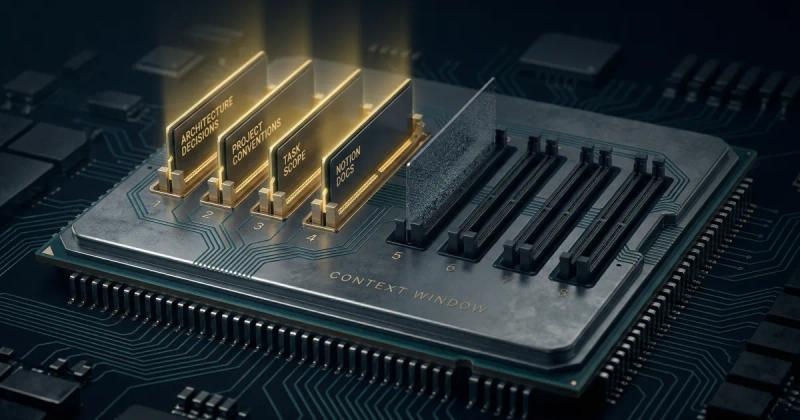

One-sentence definition: The total amount of text, measured in tokens, that a language model can process in a single interaction - including system instructions, conversation history, retrieved documents, and the model's own output.

Elaboration: Current frontier models advertise windows from 128K to 2M tokens, but the advertised window size is not the same as the effective window size. Research consistently shows that model performance degrades well before the hard limit is reached, and that the quality of what fills the window matters as much as the quantity. The context window is best understood as working memory for a single session - it does not persist between interactions and has no connection to training data.

Deeper reading: Why Your Claude Code Sessions Get Dumber Over Time (And How to Fix It)

One-sentence definition: The fundamental unit of text that language models process - roughly 3–4 characters of English, though tokenization varies by model and language.

Elaboration: A 5,000-token input is not 5,000 words; it represents approximately 3,500–4,000 words of plain English. Structured data, source code, and non-English text use tokens less efficiently than prose - a dense JSON object or a Python file with many symbols will consume more tokens per unit of meaning than an equivalent English description. Every token in a prompt counts against the context window and, for API usage, against cost. Token-awareness - knowing roughly how large any given input is before sending it - is a prerequisite for deliberate context engineering.

One-sentence definition: The metaphorical concept that an LLM has finite cognitive bandwidth, and every additional token in the context competes for the model's limited attention.

Elaboration: The term was introduced by Anthropic to explain why longer context does not reliably produce better output. As context grows, the model must distribute attention across more tokens, leading to diminishing returns and eventual performance degradation. The framing is deliberate: treating attention as a budget that can be spent well or wasted reframes context length from a technical constraint into a design decision.

Source: Anthropic's "Effective Context Engineering for AI Agents"

Deeper reading: Context Engineering for Developers

One-sentence definition: The approximate number of discrete instructions a model can reliably follow within a single context.

Elaboration: HumanLayer's analysis suggests that frontier thinking LLMs can follow approximately 150–200 instructions with reasonable consistency; smaller and non-thinking models follow fewer. The concept informs practical decisions about how much to include in files like CLAUDE.md or AGENTS.md, since every added rule competes with every other rule for the model's compliance. When an instruction budget is exceeded, models do not fail explicitly; they silently deprioritize rules in ways that are difficult to predict or debug.

Source: HumanLayer's "Writing a good CLAUDE.md"

Deeper reading: AGENTS.md vs CLAUDE.md: The AI Developer's Guide to Context Standards

The six terms below describe the specific ways that context mismanagement degrades LLM performance. Each has a distinct mechanism and, in most cases, a distinct mitigation.

One-sentence definition: The degradation in an LLM's ability to accurately recall and reason over information as the context window grows larger.

Elaboration: A 2025 Chroma study of 18 leading LLMs found that every model tested performed worse as input length grew - not gradually, but with sharp, unpredictable performance cliffs. A model might sustain near-full accuracy up to a certain input length, then drop sharply to 60% accuracy or below. The term has since become standard vocabulary across Anthropic, LangChain, and the broader practitioner community. Context rot is distinct from the "lost in the middle" phenomenon: rot is about total length; "lost in the middle" is about position within the context.

Source: Chroma's 2025 18-model study, via Anthropic's "Effective Context Engineering for AI Agents"

Deeper reading: Why Your Claude Code Sessions Get Dumber Over Time (And How to Fix It)

One-sentence definition: The tendency of automated prompt-optimization methods to favor short, generic instructions over longer, specific ones - dropping domain-relevant detail in the process.

Elaboration: The term was coined in the ACE (Agentic Context Engineering) paper to describe a failure mode of iterative prompt optimization. When an LLM is asked to compress or rewrite a complex system prompt, it systematically prefers concise summaries, often discarding the exact domain-specific constraints that made the original prompt work well. The ACE paper documented brevity bias as one of two primary failure modes of self-maintaining agent contexts, alongside context collapse.

Source: ACE paper, arXiv 2510.04618

Deeper reading: Agentic Context Engineering (ACE) Explained: How Evolving Playbooks Fix Context Collapse

One-sentence definition: The gradual erosion of information that occurs when an LLM repeatedly rewrites its own accumulated context - like overwriting a document so many times that key details disappear.

Elaboration: The second failure mode formalized by the ACE paper, alongside brevity bias. When agents self-maintain their context by iteratively summarizing and rewriting, specific details are lost each cycle. The cumulative effect over many rounds is a context that reads coherently to a human reviewer but has lost the nuance the agent needs to perform reliably on complex tasks. The ACE framework's interventions produced +10.6% improvement on agent benchmarks and +8.6% on finance benchmarks by addressing this failure mode directly.

Source: ACE paper, arXiv 2510.04618

Deeper reading: Agentic Context Engineering (ACE) Explained: How Evolving Playbooks Fix Context Collapse

One-sentence definition: The tendency of LLMs to attend more strongly to information at the beginning and end of long contexts while underweighting content positioned in the middle.

Elaboration: Stanford TACL research documented this pattern across major models and attributed it to architectural properties of transformer attention - a primacy and recency effect analogous to human working memory. The practical implication for context engineering is direct: placing critical instructions or documents in the middle of a long prompt is more likely to produce degraded output than placing them at the start or end. Context rot describes the total-length problem; lost in the middle describes the positional problem.

Source: Stanford TACL research on long-context evaluation

Deeper reading: How to Pass a Large Codebase to an LLM

One-sentence definition: The presence of too much irrelevant, redundant, or conflicting information in a context, degrading the signal-to-noise ratio available to the model.

Elaboration: Context pollution is distinct from context rot: rot is about degradation as a function of total length; pollution is about signal quality regardless of length. A 20K-token prompt containing the wrong 5K tokens of irrelevant content can produce worse output than a 40K-token prompt of well-selected material. The term was popularized by the Manus team to describe a common failure mode in agent systems that retrieve broadly rather than precisely.

Source: Manus context engineering posts via Phil Schmid's Context Engineering Part 2

One-sentence definition: The failure mode where an LLM cannot reliably distinguish between instructions, data, and structural markers in its context, or encounters logically incompatible directives.

Elaboration: Context confusion often manifests as a model following an earlier rule that contradicts a later one, or treating retrieved document text as an instruction to execute. It is closely related to prompt injection attacks, where adversarial content in retrieved documents attempts to override the model's system instructions. Structured prompting using XML tags, clear delimiters, and deliberate context ordering are the primary mitigations.

Source: Manus context engineering posts via Phil Schmid's Context Engineering Part 2

The seven terms below describe the practical moves of context engineering - the specific approaches practitioners use to manage what goes into a context, how it's organized, and how it's maintained over time.

One-sentence definition: Storing information outside the LLM's active context window - in a filesystem, vector store, or scratchpad - so the model can retrieve it on demand rather than carrying it in-band throughout a session.

Elaboration: Context offloading is one of the four core moves of context engineering identified in the Towards Data Science deep dive alongside retrieval, isolation, and reduction. The canonical implementation is Anthropic's "think" tool - a writable scratchpad that the model can reference without consuming the main context. Drew Breunig's parallel framing in "How to Fix Your Context" describes the same principle as externalizing memory.

Source: Towards Data Science deep dive on context engineering ; LangChain Deep Agents documentation

One-sentence definition: Dynamically fetching relevant information at inference time rather than front-loading all possibly-relevant content into the context at the start of a session.

Elaboration: Retrieval-Augmented Generation is the canonical implementation, but the broader principle - pull context when it is needed, not in advance - applies across patterns. Progressive disclosure in Claude's Skills system, just-in-time file reading in Claude Code, and on-demand document fetching in agent pipelines all apply the same core logic. The retrieval approach trades upfront context cost for query-time latency and the complexity of building a retrieval layer. The quality of retrieval determines the quality of the injected context: imprecise retrieval introduces context pollution (entry 10) even when the retrieval mechanism itself is functioning as designed.

One-sentence definition: Separating context across multiple agent instances or sub-threads so that one subtask's accumulated information does not contaminate another's.

Elaboration: Context isolation is the mechanism behind Claude Code's subagent architecture and multi-agent systems more broadly. Each subagent operates within its own bounded context window; only a final summary returns to the main conversation. Drew Breunig's framing refers to this pattern as "context quarantine" - containing the blast radius of a context that has grown noisy, conflicted, or polluted, rather than trying to clean it in place.

Source: Anthropic's subagents documentation; "How to Fix Your Context" (Drew Breunig)

Deeper reading: Claude Code Token Usage: Cut Context Costs in Half

One-sentence definition: Compressing accumulated conversation history into a smaller summarized form, preserving essential information while reducing token count.

Elaboration: Claude Code's /compact command is the most widely cited implementation. The operational challenge is not the summarization itself but deciding what survives compaction: effective reduction preserves facts that constrain future actions (what has already failed, what files exist, what assumptions have been invalidated) and discards conversational scaffolding. Poor compaction can introduce context collapse by systematically dropping the details that constrained the agent's prior decisions.

Deeper reading: Claude Code Token Usage: Cut Context Costs in Half

One-sentence definition: Structuring context so the model receives only the information it needs for the current step, with mechanisms to load deeper content on demand.

Elaboration: The pattern underlies Claude's Skills system, where metadata is loaded at startup and full skill content is fetched only when a skill is invoked. The same principle applies to AGENTS.md and CLAUDE.md best practices: keep the root file minimal and reference deeper documents that the model reads when needed, rather than loading everything at session start. Progressive disclosure reduces baseline context cost without sacrificing coverage.

Deeper reading: AGENTS.md vs CLAUDE.md: The AI Developer's Guide to Context Standards

One-sentence definition: An agentic pattern where an agent branches into a sub-trajectory to handle a subtask, then folds the intermediate steps away, retaining only a summary in the main context.

Elaboration: Proposed in a 2025 paper from ByteDance Seed, CMU, and Stanford. Context folding differs from standard subagent delegation in that the folding and unfolding is managed dynamically by the agent during execution, not pre-structured by the orchestration harness. In benchmark evaluation, the Folding Agent achieved 62% on BrowseComp-Plus using 32K tokens, performance that baseline approaches required 327K tokens to match.

Source: Context-Folding paper (ByteDance Seed, CMU, Stanford, 2025)

One-sentence definition: The assembled bundle of context - drawn from multiple sources - that is sent to an LLM for a given task.

Elaboration: The term is used across the practitioner community to describe the composed, task-scoped context (system prompt, relevant documents, conversation history, tool definitions, and any injected data) as a unified artifact, distinct from any single input component. Mesh is an example of a dedicated context-assembly tool that makes the stack a first-class artifact - one that can be saved, versioned, and reused across sessions rather than rebuilt from scratch each time.

The four entries below describe specific artifacts and protocols that appear directly in context engineering work: the files developers write, the formats they use, and the protocols that govern how agents connect to external systems.

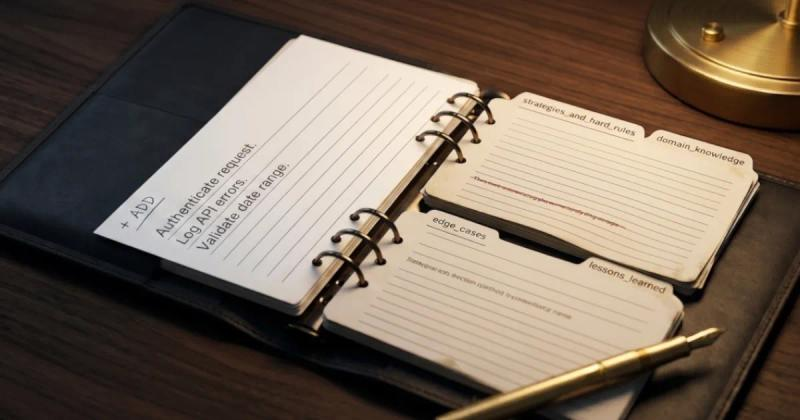

One-sentence definition: An open, Markdown-based standard for providing persistent instructions to AI coding agents, placed at the root of a project repository.

Elaboration: AGENTS.md emerged in 2025 from collaboration between Sourcegraph, OpenAI, Google, Cursor, and Factory, and is now governed by the Agentic AI Foundation under the Linux Foundation. The format is designed to be tool-agnostic: a single AGENTS.md file at the repository root provides instructions to any compliant agent. One notable exception as of April 2026 is Claude Code, which uses its own CLAUDE.md format rather than AGENTS.md natively.

Source: agents.md

Deeper reading: AGENTS.md vs CLAUDE.md: The AI Developer's Guide to Context Standards

One-sentence definition: Anthropic's Markdown format for providing project-specific instructions to Claude Code, loaded automatically at session start from designated locations in the file hierarchy.

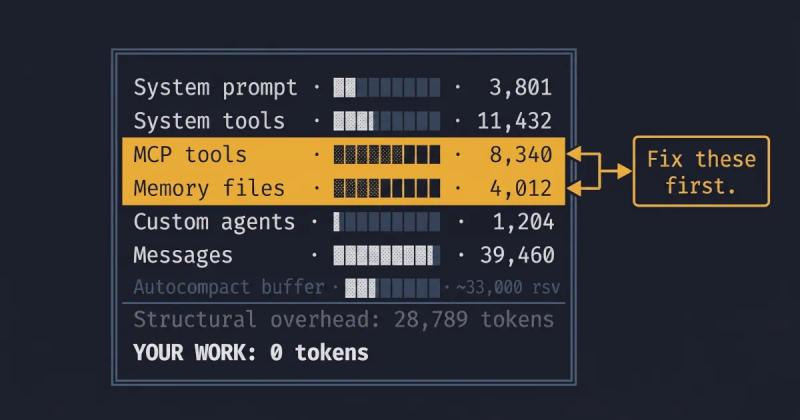

Elaboration: CLAUDE.md predates the AGENTS.md standard and includes features not present in AGENTS.md: @import directives for progressive disclosure, path-scoped rules with frontmatter, and a hierarchical memory model spanning user, project, and local scopes. The tradeoff is portability - CLAUDE.md is Claude Code-specific and does not transfer to Cursor, GitHub Copilot, Codex, or other agent environments. Keeping CLAUDE.md files within the instruction budget (see entry 5) is a recurring practitioner concern.

Source: Claude Code documentation

Deeper reading: AGENTS.md vs CLAUDE.md: The AI Developer's Guide to Context Standards

One-sentence definition: An open protocol, originally developed by Anthropic and now governed by the Linux Foundation, that standardizes how AI agents connect to external tools and data sources.

Elaboration: MCP has been adopted across Anthropic, OpenAI, Google, and Microsoft. It defines how tool definitions, tool calls, and tool results flow between LLMs and external systems. Concrete implementations are called MCP servers - one server per integration (Linear MCP, Notion MCP, GitHub MCP, and so on). Each connected MCP server contributes its tool definitions to the active context window; in systems with many connected servers, MCP tool definitions can become a meaningful fraction of the available context budget. The protocol's standardization means that a single MCP server implementation works with any compliant LLM host, reducing the integration burden for toolmakers.

Source: modelcontextprotocol.io

One-sentence definition: The initial set of instructions provided to an LLM that defines its role, behavior, constraints, and output format before any user interaction begins.

Elaboration: The system prompt is typically set by the developer or product builder, not the end user, and is often not visible to the end user in deployed applications. In agent systems, the system prompt is where role definitions, tool usage rules, memory instructions, and safety guardrails live. A well-written system prompt is precise, internally consistent, and sized to remain within the model's instruction budget. Instructions that conflict or exceed the budget are not surfaced explicitly as errors, and are silently degraded. The system prompt is also the highest-priority position in the context window for positional attention: instructions placed here are less susceptible to "lost in the middle" degradation than instructions buried in retrieved documents.

The author is the founder of HiveTrail , building context management tools for LLMs and agentic AI. HiveTrail's flagship product, Mesh , is a desktop app (currently in beta) that assembles just-in-time context for LLMs from Notion, local files, and prompt libraries, with built-in privacy scanning and token management.

On this page

Context engineering is the discipline of deliberately shaping what information an AI model sees before and during a task, to produce reliable, high-quality output. Popularized in 2025 as the successor concept to prompt engineering, the term encompasses techniques for assembling, curating, compressing, and maintaining context across sessions and agent interactions.

Prompt engineering focuses on how to phrase a single request to an LLM. Context engineering focuses on what information the model needs to see to succeed, including the system prompt, retrieved documents, conversation history, tool definitions, and any external context. Prompt engineering is best understood as a subset of context engineering.

Context rot is the degradation in an LLM's ability to accurately recall and reason over information as the context window grows. Research from Chroma testing 18 leading LLMs found that every model exhibited this pattern, often with sharp performance cliffs at unpredictable input lengths. The phenomenon is why simply using larger context windows does not reliably produce better output.

Agentic AI systems depend on managing context across dozens or hundreds of steps. The vocabulary: context rot, brevity bias, progressive disclosure, context folding, describes specific failure modes and mitigation patterns that practitioners encounter daily. Shared vocabulary lets teams diagnose problems precisely and apply known fixes, rather than rediscovering each issue from scratch.

Stop rewriting prompts. Learn when to use context engineering vs prompt engineering to optimize LLM context quality without complex RAG pipelines.

Read more about Context Engineering vs Prompt Engineering: What the Shift Means for Developers (2026)

A plain-English guide to Agentic Context Engineering (ACE). Learn how this evolving playbook framework prevents context collapse in self-improving AI agents.

Read more about Agentic Context Engineering (ACE) Explained: How Evolving Playbooks Fix Context Collapse

Reduce Claude Code token usage with 9 high-leverage tactics. Learn how to prune CLAUDE.md, optimize the autocompact buffer, and cut context costs in half.

Read more about Claude Code Token Usage: Cut Context Costs in Half