Ben •

There's a workflow that almost every Notion user knows too well.

You've done the hard work. Your PRD is beautifully structured. Your project board is up to date. Your architectural notes are nested and linked exactly the way you want them. Then you open Claude or ChatGPT, and the first thing you do is... start highlighting and pasting.

Tables turn into flat text. Relational context evaporates. You spend five minutes reformatting things so the AI doesn't misread your data structure, then another five minutes prompting your way back to the point you were trying to make.

It's a small friction - annoying but manageable, or so it seems. The problem is that it happens constantly, and it compounds. This piece breaks down why the problem is more structural than it appears, and what a better workflow actually looks like.

The obvious counter here is: just use Notion AI. And for a lot of tasks, that's genuinely good advice.

If you want to summarize a page, rewrite a paragraph, or generate a quick draft without leaving your workspace, Notion AI is fast and well-integrated. It's hard to beat for in-context, single-source tasks.

But the moment your work gets more complex, three limitations start to show up.

You can't combine sources. Notion AI only knows what lives in Notion. If you're a developer who needs to reason across a PRD *and* a local codebase, you're stuck. The AI that understands your spec can't see your files, and the AI that can see your files doesn't know your spec.

You don't control what it reads. Notion AI uses retrieval-augmented generation under the hood, which means it decides which parts of your documents are relevant before generating a response. For exploratory questions, this is fine. For precision work - generating accurate code, producing structured tickets, writing targeted copy - you often want to know exactly what context the model is working from, not hope that the retrieval picked the right pieces.

You're paying for a seat-level add-on. Meanwhile, Claude, ChatGPT, and Gemini all offer capable free tiers. For teams doing heavy AI work, the cost math on external models frequently wins - but only if you can get your Notion context there without the friction eating into the gains.

Once you accept that external LLMs are part of the workflow, the copy-paste approach looks less like a workaround and more like a liability. Here's where it tends to fail.

Formatting. Modern LLMs parse structured Markdown and XML well. They parse unstructured rich-text dumps poorly. When Notion's table relationships and nested hierarchies collapse into a wall of text, you're forcing the model to reconstruct structure it shouldn't have to guess at. The outputs suffer for it.

Context bloat. Large context windows are genuinely useful, but they're not free. Pasting an entire workspace into a chat inflates token costs, slows generation, and can cause what some researchers call context dilution - where instructions buried deep in a long prompt get underweighted or effectively ignored. For precision work, a small, relevant context usually beats a large, unfocused one.

Accidental data exposure. This one is low-frequency but high-consequence. When you're rapidly copying from internal docs, it's easy to clip an API key, a customer email, or an internal server path and paste it straight into a public UI. Enterprise teams in particular have stopped AI adoption programs over exactly this risk.

A proper solution to this problem has to do a few things that a generic automation tool (a Zapier webhook, a Make.com scenario, a manual script) won't give you out of the box.

It needs to pull Notion data through the API rather than the clipboard, so formatting survives the transfer. It needs to handle Notion's raw data format - translating user IDs into names, resolving relational properties, and converting database structure into something an LLM can actually parse. It needs to let you combine sources: Notion content alongside local files, alongside reusable prompt components, assembled into a single coherent payload. And it needs to catch sensitive data before it leaves your machine.

That last point matters more than most workflow tools acknowledge. Masking data isn't enough if the masking erases distinctions - if every internal server name becomes the same [REDACTED], the AI can't write code that references different servers coherently. Good redaction preserves logical relationships while hiding specifics: each real name maps to one stable placeholder, so Server_A stays Server_A throughout the session and Database_B stays Database_B.
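To make the idea of consistent redaction concrete, here is a minimal Python sketch. It is not HiveTrail's implementation; the class name `ConsistentRedactor`, the hostname pattern, and the `Server_N` label scheme are all assumptions chosen for illustration. The point it shows is that each distinct value gets one placeholder that is reused every time that value reappears.

```python
import re


class ConsistentRedactor:
    """Replace each distinct sensitive value with a stable placeholder.

    Hypothetical helper: the first distinct match becomes <label>_1, the
    second <label>_2, and repeats of a value reuse its placeholder.
    """

    def __init__(self, pattern, label):
        self._pattern = re.compile(pattern)
        self._label = label
        self._mapping = {}  # real value -> placeholder, kept for the session

    def _placeholder(self, match):
        value = match.group(0)
        if value not in self._mapping:
            self._mapping[value] = f"{self._label}_{len(self._mapping) + 1}"
        return self._mapping[value]

    def redact(self, text):
        """Return text with every match swapped for its placeholder."""
        return self._pattern.sub(self._placeholder, text)


# Assumed naming convention for internal hosts; adjust to your own.
redactor = ConsistentRedactor(r"\b[\w-]+\.internal\.example\.com\b", "Server")
masked = redactor.redact(
    "Copy from db01.internal.example.com to web02.internal.example.com, "
    "then restart db01.internal.example.com."
)
print(masked)
```

Because the mapping lives on the object, calling `redact` again in the same session keeps the same placeholders, which is what lets the model reason about two different servers without seeing either real name.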

Finally, it should let you see token counts before you send. Knowing that your assembled context uses 40,000 of your model's 100,000-token window is genuinely useful information. Not knowing means you're guessing.
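A rough version of that pre-send check can be sketched in a few lines of Python. The four-characters-per-token ratio below is only a common rule of thumb, not any model's real tokenizer, and the function names are hypothetical; for exact counts you would use the tokenizer that matches your target model.

```python
def estimate_tokens(text, chars_per_token=4.0):
    """Rough token estimate. Real tokenizers vary by model and language."""
    if not text:
        return 0
    return max(1, round(len(text) / chars_per_token))


def budget_report(text, context_window):
    """Summarize how much of an assumed context window the text would use."""
    used = estimate_tokens(text)
    return {
        "estimated_tokens": used,
        "context_window": context_window,
        "fraction_used": used / context_window,
        "fits": used <= context_window,
    }


# Stand-in payload of 160,000 characters against a 100,000-token window.
report = budget_report("x" * 160_000, context_window=100_000)
print(report)
```

Even an estimate this crude is enough to tell you whether you are at 40% of the window or about to overflow it.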

HiveTrail Mesh is built specifically for this workflow. It's a standalone, cross-platform tool that acts as a staging layer between Notion (or local files) and whatever LLM you're working with.

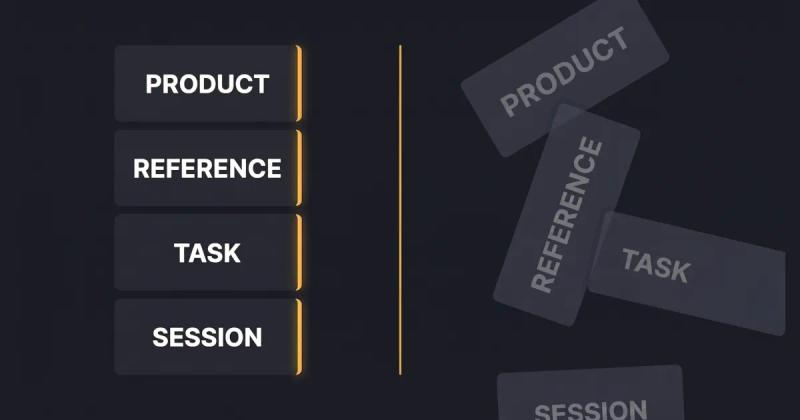

The core interaction is what HiveTrail calls The Stack - a workspace where you assemble context from multiple sources before exporting. Pull in a filtered Notion database (by date, status, or assignee), add a folder of local Python files using glob patterns, attach a saved system prompt from your library, and HiveTrail merges them into a structured, token-optimized payload ready to paste into any chat interface or API call.
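The assembly step can be approximated with the Python standard library. This is a sketch of the general technique, not how HiveTrail builds its payload: the function name `assemble_stack` and the tag names `<system_prompt>`, `<notion>`, and `<file>` are assumptions made for illustration.

```python
from pathlib import Path


def assemble_stack(system_prompt, notion_markdown, code_dir, pattern="**/*.py"):
    """Merge a prompt, exported Notion content, and local files into one payload.

    Each source is wrapped in an XML-style tag so the model can tell them
    apart. Tag names are illustrative, not a standard.
    """
    parts = [
        f"<system_prompt>\n{system_prompt.strip()}\n</system_prompt>",
        f"<notion>\n{notion_markdown.strip()}\n</notion>",
    ]
    root = Path(code_dir)
    # sorted() keeps the file order stable between runs.
    for path in sorted(root.glob(pattern)):
        if path.is_file():
            rel = path.relative_to(root).as_posix()
            body = path.read_text(encoding="utf-8").rstrip()
            parts.append(f'<file path="{rel}">\n{body}\n</file>')
    return "\n\n".join(parts)
```

A call such as `assemble_stack(prompt, exported_page, "src")` returns a single string you can paste into a chat or send through an API. The sketch does not escape special characters in file paths or contents, which a real tool would need to handle.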

The Notion integration handles the translation work automatically: raw user IDs become real names, relational properties get traversed, and Jinja2 templating converts Notion's property structure into clean Markdown or XML. You define the format once; HiveTrail applies it consistently every time.
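To show what that translation involves, here is a small Python sketch that flattens a page's properties into Markdown. It uses plain string formatting rather than Jinja2 to stay dependency-free, and it is not HiveTrail's code. The sample page is hand-written to resemble the general shape of Notion API page objects, so treat the field names as assumptions to verify against the API reference. The `user_names` and `page_titles` lookup tables are hypothetical stand-ins for the extra API calls a real tool would make.

```python
def property_to_text(prop, user_names, page_titles):
    """Turn one property object into readable text."""
    kind = prop["type"]
    value = prop[kind]
    if kind in ("title", "rich_text"):
        return "".join(part["plain_text"] for part in value)
    if kind in ("select", "status"):
        return value["name"] if value else ""
    if kind == "people":
        # Fall back to the raw ID when a name is unknown.
        return ", ".join(user_names.get(p["id"], p["id"]) for p in value)
    if kind == "relation":
        return ", ".join(page_titles.get(r["id"], r["id"]) for r in value)
    if kind == "date":
        return value["start"] if value else ""
    return str(value)


def page_to_markdown(page, user_names, page_titles):
    """Render the title as a heading and other properties as a bullet list."""
    lines = []
    for name, prop in page["properties"].items():
        text = property_to_text(prop, user_names, page_titles)
        if prop["type"] == "title":
            lines.insert(0, f"## {text}")
        else:
            lines.append(f"- **{name}**: {text}")
    return "\n".join(lines)


# Hand-written sample data (assumed shape, made-up IDs).
page = {"properties": {
    "Name": {"type": "title", "title": [{"plain_text": "Checkout redesign"}]},
    "Status": {"type": "status", "status": {"name": "In progress"}},
    "Owner": {"type": "people", "people": [{"id": "u-1"}]},
    "Blocked by": {"type": "relation", "relation": [{"id": "p-9"}]},
}}
markdown = page_to_markdown(page, {"u-1": "Ada"}, {"p-9": "Payments API spec"})
print(markdown)
```

The value of defining the format once is visible even here: every page that passes through `page_to_markdown` comes out with the same heading and bullet layout, so the model never has to guess at structure.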

On the privacy side, an automated Exit Gate intercepts your content before it reaches the clipboard. If it detects emails, API keys, IP addresses, or internal paths, it halts the export and opens a full-screen Privacy Auditor where you can review and act on each finding. The consistent token replacement - where Internal_Server_X maps to Server_1 across the entire session - means you can work safely with proprietary infrastructure details without corrupting the AI's ability to reason about them.
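The gate-before-export pattern itself is simple to sketch in Python. The regular expressions below are deliberately simplistic and are not HiveTrail's detection rules; the names `PATTERNS`, `ExportBlocked`, `scan`, and `export` are hypothetical. The sketch only shows the control flow: scan first, and refuse to hand over the text if anything is found.

```python
import re

# Illustrative patterns only. Real detectors need far broader coverage.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "ipv4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "api_key": re.compile(r"\b(?:sk|pk|key)[-_][A-Za-z0-9_-]{16,}\b"),
}


class ExportBlocked(Exception):
    """Raised when the payload contains findings that need review."""

    def __init__(self, findings):
        super().__init__(f"{len(findings)} sensitive item(s) found")
        self.findings = findings


def scan(text):
    """Return (kind, matched_text, offset) tuples ordered by position."""
    findings = []
    for kind, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            findings.append((kind, match.group(0), match.start()))
    return sorted(findings, key=lambda finding: finding[2])


def export(text):
    """Return the text unchanged only if the scan comes back clean."""
    findings = scan(text)
    if findings:
        raise ExportBlocked(findings)
    return text
```

Raising an exception instead of silently stripping matches mirrors the review step described above: the person exporting decides what to do with each finding.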

Before exporting, you can select your target model and see exactly how much of its context window your stack uses. No mid-generation cut-offs, no guessing.

If you're a developer bridging specs and code, a PM generating structured tickets, a founder working across scattered data sources, or anyone who's noticed the copy-paste overhead adding up, the workflow HiveTrail describes is the right direction, regardless of which tool you use to get there.

The friction between organized knowledge and AI reasoning is real, and solving it with a purpose-built tool rather than duct-taped automation is often worth it.

HiveTrail Mesh is available as a standalone download. If the workflow resonates, it's worth a look.

Every time you paste code into ChatGPT, you might be leaking API keys, PII, and trade secrets. Here's what the data shows, and how to stop it before it happens.

Read more about The Hidden Risk of Pasting Code into LLMs: How to Prevent API Key and PII Leaks

Stop generating shallow AI outputs. Learn context engineering for product managers to build reusable data stacks and improve PRDs without tweaking prompts.

Read more about Context Engineering for Product Managers: The Missing AI Skill

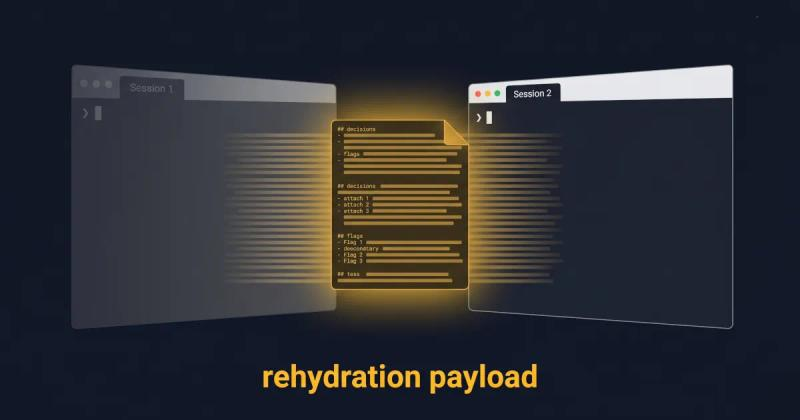

Long-running AI agents lose context over time. The fix isn't bigger context windows. It's a curated handoff between coding sessions. Here's the protocol.

Read more about Why Long-Running AI Agents Forget: A Session Context Strategy