Ben •

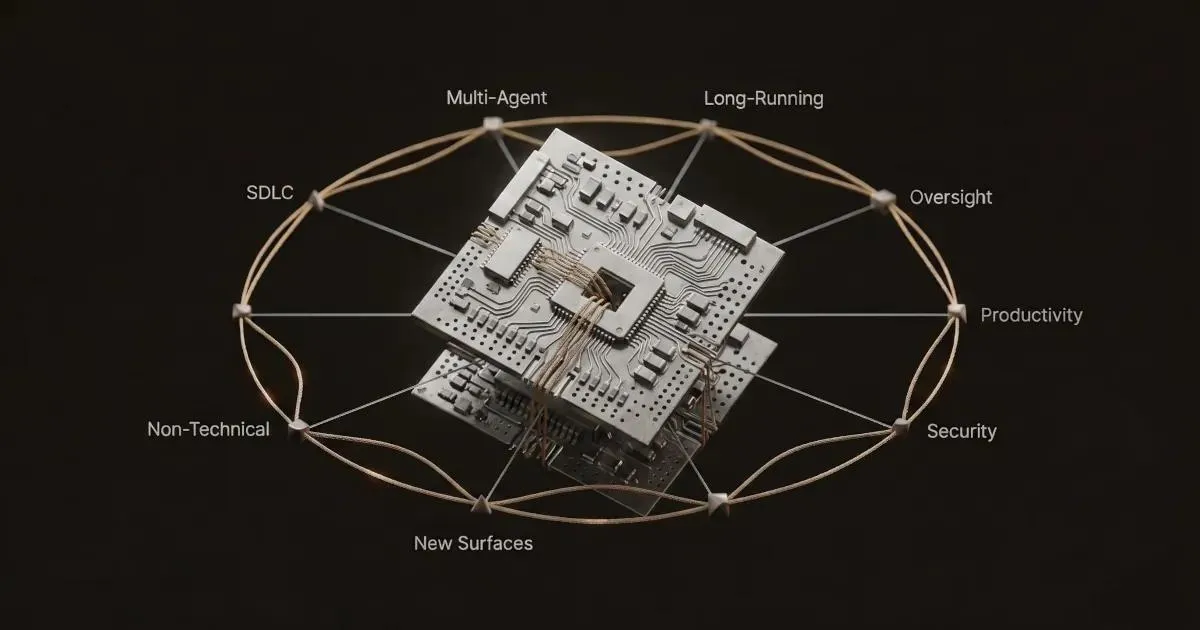

Anthropic's 2026 Agentic Coding Trends Report describes a year in which software engineering becomes the work of orchestrating agents rather than writing code - and every one of the eight trends it identifies increases the pressure on how teams manage context. The report is a forecast document, not a research paper. It identifies eight predicted trends organized into three categories - foundation, capability, and impact - and supports each with case studies drawn primarily from Anthropic's own customers. The takeaway worth anchoring to is an inference rather than a stated finding: read as a whole, the report is an implicit argument that context engineering is the load-bearing skill of 2026. Anthropic never says so directly, but read the eight trends in sequence and the pattern becomes hard to miss. Here's what they actually say - and how to think about what they mean for your team.

Anthropic's 2026 Agentic Coding Trends Report is a forecast and framing document published by Anthropic in early 2026. It identifies eight trends they predict will define agentic AI coding this year, organized into three categories: foundation trends (structural changes to how development work happens), capability trends (what agents can now do that they couldn't before), and impact trends (what the business outcomes actually look like). It draws on case studies from Rakuten, CRED, TELUS, Zapier, Legora, Fountain, and Augment Code.

One thing worth being upfront about: Anthropic wrote this report, and nearly every case study in it features Claude or Claude Code. That doesn't invalidate the trends - multi-agent workflows, longer task horizons, and non-technical users building tools are broadly observable across the industry, not Claude-specific phenomena. But "Anthropic predicts" and "it is true" are different claims, and this post treats them that way throughout.

This section is the reference summary: if you haven't read the PDF, it gives you the essentials. The full 17-page report is worth your time if you have it - the case studies add texture that no summary can fully replace.

Trend 1: The software development lifecycle changes dramatically. Anthropic predicts that the traditional SDLC stages don't disappear, but cycle times collapse - from weeks to hours - as agents absorb the implementation layer. The engineer's job shifts from writing code to coordinating agents that write code: setting architecture, providing direction, evaluating quality, and stepping in where judgment is required. Onboarding to an unfamiliar codebase compresses similarly. The clearest case study: Augment Code reports that an enterprise customer finished a project originally scoped at 4–8 months in under two weeks.

Trend 2: Single agents evolve into coordinated teams. Multi-agent architectures - where an orchestrator model delegates work to specialized sub-agents - become the standard pattern for complex tasks rather than the exception. Anthropic's view is that this shift makes the coordination layer, rather than the individual agent, the primary focus of engineering attention. Fountain illustrates the output: their hierarchical multi-agent system achieved 50% faster candidate screening, 40% quicker onboarding, 2x candidate conversions, and helped one logistics customer reduce a process that used to take over a week to under 72 hours.

Trend 3: Long-running agents build complete systems. Task horizons expand dramatically - from sessions measured in minutes to work measured in hours or days. The Rakuten example anchors this trend: Claude Code autonomously completed a complex implementation in the vLLM codebase (12.5 million lines of code) over seven hours, achieving 99.9% numerical accuracy. The report frames this not as a curiosity but as a preview of how deep, sustained technical work gets done.

Trend 4: Human oversight scales through intelligent collaboration. This is the trend with the most nuance, and the one that gets summarized least accurately in the coverage I've seen. Anthropic's internal research found that engineers report using AI in roughly 60% of their work - but describe being able to "fully delegate" only 0–20% of tasks. The report frames this not as a failure but as evidence that effective AI collaboration is participatory: setup, prompting, supervision, validation, and judgment aren't friction - they're the job. CRED doubled their execution speed not by removing humans from the loop, but by redirecting developers toward higher-value decisions.

Trend 5: Agentic coding expands to new surfaces and users. Anthropic forecasts expansion in two directions simultaneously: backward, toward legacy codebases in languages like COBOL and Fortran that have been historically resistant to AI tooling; and outward, toward users who've never thought of themselves as developers. The boundary between "people who write code" and "people who work with software" begins to dissolve. Legora is cited as the case study for domain expansion.

Trend 6: Productivity gains reshape software development economics. The productivity story the report tells is subtler than "engineers work faster." Output volume grows more than time-per-task shrinks - engineers aren't doing the same work more quickly, they're doing substantially more work. Roughly 27% of AI-assisted work, the report notes, is work that wouldn't have been attempted at all without AI. TELUS quantifies this at scale: 13,000+ custom AI solutions built, engineering code shipped 30% faster, over 500,000 hours saved, with an average interaction time of 40 minutes.

Trend 7: Non-technical use cases expand across organizations. Sales, legal, operations, and marketing teams start building their own tools - not as a pilot program but as standard practice. Zapier reports 89% AI adoption across the organization, with 800+ internal AI agents running. Anthropic's own legal team reportedly cut marketing review turnaround from 2–3 days to 24 hours. The trend the report is naming is that the skill of working with AI agents stops being exclusive to engineering teams.

Trend 8: Dual-use risk requires security-first architecture. The same agent capabilities that help defenders - fast scanning, broad code analysis, pattern recognition - also help attackers. Anthropic's position is that security can't be retrofitted; it has to be the starting assumption. This trend is less about any single case study and more about a design philosophy: every new capability you give an agent is also a potential attack surface.

That's the complete forecast, compressed. What follows is my read of what it actually means.

Read those eight trends individually, and they're interesting forecasts. Read them together, and a pattern surfaces that the report never quite names directly.

Every one of those trends bottlenecks on context.

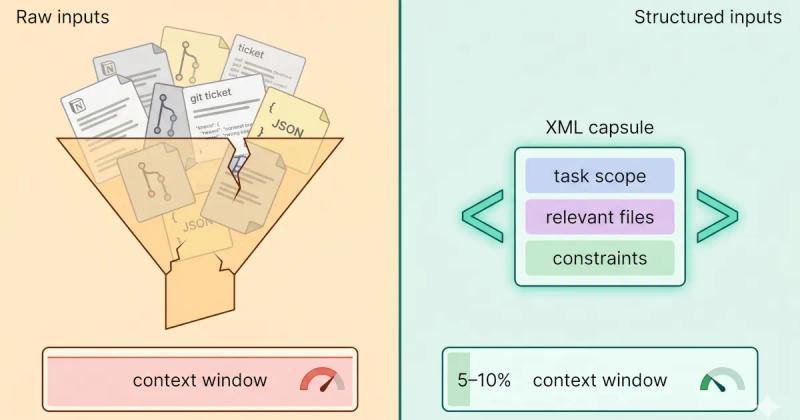

The report touches on context engineering only once, and obliquely: in its acknowledgment that engineers can only fully delegate 0–20% of tasks despite using AI in 60% of their work. My read is that the gap isn't primarily capability; current models can plausibly handle far more than 20% of tasks autonomously. The gap is that fully delegating a task requires the agent to have the right context to complete it: knowledge of the codebase, the constraints, the stakeholders, the history, and the failure modes. When that context isn't there - or when it degrades across a long session - humans step back in.
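One way to treat "enough context to delegate" as something you can check rather than something you feel is to write it down as a structure. The sketch below is a hypothetical Python illustration: the `DelegationBrief` class and its helpers are invented for this post, not part of Claude Code or any other tool. It simply flags which of the five kinds of context named above are still missing before a task is handed off.

```python
from dataclasses import dataclass, fields


@dataclass
class DelegationBrief:
    """Hypothetical record of the context a task needs before full delegation.

    Fields follow the five kinds of context named in the text.
    """
    codebase: str = ""       # relevant modules, conventions, entry points
    constraints: str = ""    # performance, compatibility, and style rules
    stakeholders: str = ""   # who reviews or depends on the change
    history: str = ""        # prior attempts and related decisions
    failure_modes: str = ""  # known ways this kind of change breaks


def missing_context(brief: DelegationBrief) -> list[str]:
    """Return the names of fields that are empty or whitespace-only."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def can_fully_delegate(brief: DelegationBrief) -> bool:
    """True only when every kind of context has been written down."""
    return not missing_context(brief)


brief = DelegationBrief(
    codebase="payments/ service, repository pattern",
    constraints="no new dependencies",
)
print(missing_context(brief))     # ['stakeholders', 'history', 'failure_modes']
print(can_fully_delegate(brief))  # False
```

An empty field doesn't mean the agent will fail, only that a human is likely to get pulled back in before the task is done.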

Here's the context-management implication buried in each trend:

| Trend | Context-management implication |
|---|---|
| SDLC compression | Onboarding collapses from weeks to hours only if the agent gets the right context fast - otherwise the speed gain evaporates in back-and-forth clarification |
| Multi-agent coordination | Every sub-agent needs its own scoped, relevant context; the orchestrator's job is largely a context-routing problem |
| Long-running agents | Days-long sessions amplify context rot - compaction and summarization strategies stop being nice-to-have and become load-bearing |
| Intelligent human oversight | An agent knowing when to ask for help is itself a context problem - it needs enough situational awareness to recognize the edges of its own knowledge |
| Expansion to new surfaces | Non-traditional developers don't have the engineering instincts to curate context well by default; the tool has to handle it, or the output quality collapses |
| Productivity through volume | 27% of AI-assisted work is net-new work - all of it needs context assembled from scratch, not retrieved from memory |
| Non-technical users | A legal team building their own tools without engineering support is doing context engineering whether they'd call it that or not |
| Dual-use security | Every additional bit of context fed to an agent is an expanded attack surface; privacy scanning and exit-gate controls stop being optional |

The synthesis is simple enough to state plainly: the report is a forecast of what agents will be able to do. What it doesn't say explicitly is that every one of those capabilities bottlenecks on how well humans - or other agents - can assemble, curate, and pass context to the agents doing the work.

That's the discipline Anthropic's own engineering team has been writing about in parallel. Their "Effective Context Engineering for AI Agents" piece frames context as a finite resource with an "attention budget" - scarce, directional, and deserving of the same engineering discipline you'd apply to any load-bearing system component. The 2026 Trends Report is, in effect, a forecast that demand for that discipline is about to increase across every one of these eight dimensions simultaneously.

The trends translate into concrete changes - but they land differently depending on where you sit.

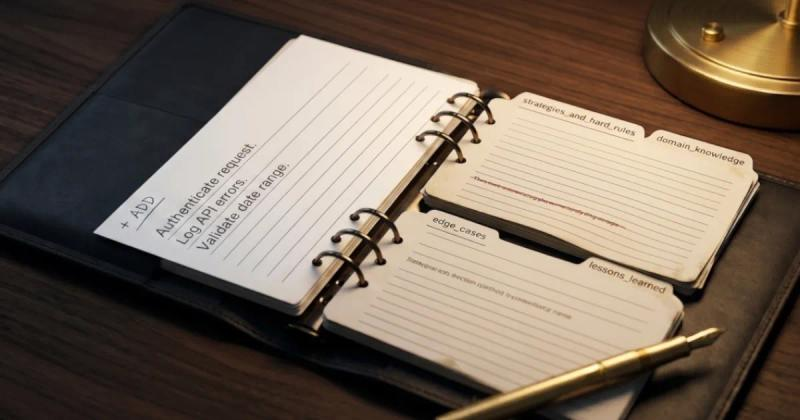

Onboarding collapses from weeks to hours only if your agent can be handed the right context fast. Audit what it actually takes for a new Claude Code session to understand your codebase and produce useful output. If the answer is "read CLAUDE.md and infer the rest," the speed gain you're counting on will be capped. The bottleneck moved; it didn't disappear.
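A first audit can be this small: list the files a fresh session is actually handed and estimate what they cost. The sketch below is a hypothetical Python helper, not a Claude Code feature. It uses the common rule of thumb of roughly four characters per token, which real tokenizers only approximate, and the budget is whatever number you choose.

```python
from pathlib import Path

# Rule of thumb: roughly 4 characters per token for English text and code.
# Real tokenizers vary, so treat results as order-of-magnitude estimates.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Ceiling of len(text) / CHARS_PER_TOKEN."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def audit_context(paths: list[str], budget_tokens: int) -> dict:
    """Estimate the cost of the files a fresh agent session is handed.

    Files that don't exist are reported separately, so gaps in the
    onboarding context are as visible as its size.
    """
    files: dict[str, int] = {}
    missing: list[str] = []
    for p in paths:
        path = Path(p)
        if not path.is_file():
            missing.append(p)
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        files[p] = estimate_tokens(text)
    total = sum(files.values())
    return {
        "files": files,
        "missing": missing,
        "total_tokens": total,
        "share_of_budget": total / budget_tokens,
    }
```

Calling `audit_context(["CLAUDE.md", "docs/architecture.md"], budget_tokens=50_000)` (both paths hypothetical) returns per-file estimates plus any files you expected but don't have. A non-empty `missing` list is often more telling than a large total.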

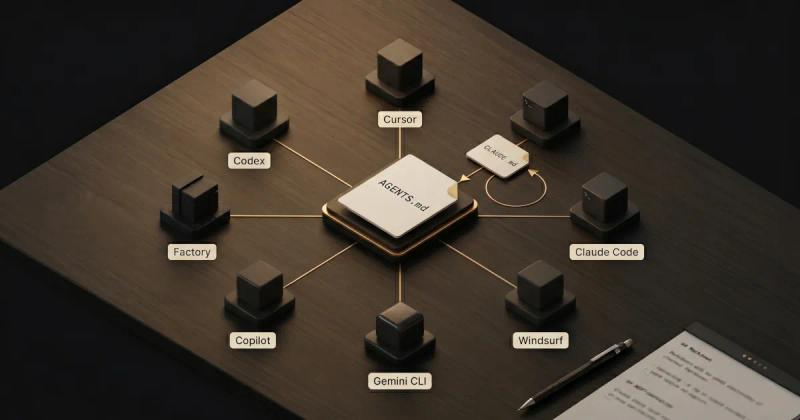

Multi-agent architectures are coming for what's currently handled in a single long session. The right question isn't "how do we use more agents?" - it's "what are the context boundaries between agents, and who owns them?" That's an infrastructure concern, not a prompt quality concern. The context stack playbook approach is worth building before you need it.
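One way to make those boundaries explicit is to declare, per sub-agent, which slices of shared context it may see and who owns that declaration. This is a minimal Python sketch under that assumption: `SubAgentSpec` and `route_context` are hypothetical names, and a real orchestrator would pass the routed context into whatever agent framework you use.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgentSpec:
    """Hypothetical declaration of one sub-agent's context boundary."""
    name: str
    owner: str  # the person or team accountable for this boundary
    allowed_keys: set[str] = field(default_factory=set)


def route_context(shared: dict[str, str], spec: SubAgentSpec) -> dict[str, str]:
    """Return only the shared-context entries this sub-agent is scoped to.

    A key the spec asks for but the shared context lacks raises an error,
    so a stale boundary fails loudly instead of silently starving the agent.
    """
    unknown = spec.allowed_keys - set(shared)
    if unknown:
        raise KeyError(f"{spec.name} requests unknown context: {sorted(unknown)}")
    return {k: v for k, v in shared.items() if k in spec.allowed_keys}


shared = {
    "api_schema": "...",
    "style_guide": "...",
    "billing_policy": "...",
}
test_writer = SubAgentSpec("test-writer", owner="platform-team",
                           allowed_keys={"api_schema", "style_guide"})
print(sorted(route_context(shared, test_writer)))  # ['api_schema', 'style_guide']
```

Failing on unknown keys is a deliberate choice: a boundary that silently drops context is harder to debug than one that refuses to run.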

The "0–20% fully delegated" finding is a ceiling on naive delegation. Teams that raise it won't do so by switching models. They'll do it by building better context pipelines.

Your CLAUDE.md or AGENTS.md file is doing more work than you think. Treat it as a first-class artifact - version-controlled, intentionally curated - not a scratchpad you update when things break.
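Treating the file as a first-class artifact can start with linting it in CI like any other source file. A sketch, assuming your team agrees on a few required sections and a length cap: the section names, the 300-line limit, and the TODO rule are placeholders to adapt, not an established convention.

```python
import re
from pathlib import Path

# Placeholder house rules for a CLAUDE.md / AGENTS.md file; adapt to your team.
REQUIRED_SECTIONS = ["Build", "Test", "Conventions"]
MAX_LINES = 300
HEADING = re.compile(r"^#{1,6}[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def lint_context_file(text: str) -> list[str]:
    """Return a list of problems; an empty list means the file passes."""
    headings = {m.group(1) for m in HEADING.finditer(text)}
    problems = [f"missing section: {s}" for s in REQUIRED_SECTIONS if s not in headings]
    n_lines = len(text.splitlines())
    if n_lines > MAX_LINES:
        problems.append(f"too long: {n_lines} lines (max {MAX_LINES})")
    if re.search(r"\bTODO\b", text):
        problems.append("contains TODO markers")
    return problems


def main(argv: list[str]) -> int:
    """CLI entry point: lint the given file (default CLAUDE.md), return an exit code."""
    path = Path(argv[0] if argv else "CLAUDE.md")
    problems = lint_context_file(path.read_text(encoding="utf-8"))
    for problem in problems:
        print(f"{path}: {problem}")
    return 1 if problems else 0
```

Wired into CI as `sys.exit(main(sys.argv[1:]))`, any problem turns into a failing build, which is what keeps the file from drifting back into a scratchpad.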

The skill that compounds is knowing what to leave out of a prompt. Long, sprawling context isn't the same as good context. The engineers who develop that editorial instinct will outperform the ones relying on model capacity to compensate.
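Part of that editorial instinct can be made mechanical. The sketch below assumes you have already estimated each snippet's size and scored its relevance yourself (both inputs are hypothetical). It greedily keeps the most relevant pieces that fit a token budget and drops the rest whole instead of truncating them.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    name: str
    tokens: int       # estimated size of this piece of context
    relevance: float  # your own 0-1 judgment of how much the task needs it


def select_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets that fit within `budget` tokens.

    A snippet that doesn't fit is left out whole rather than truncated, so
    every piece that is included stays coherent.
    """
    chosen: list[Snippet] = []
    used = 0
    for snip in sorted(snippets, key=lambda item: item.relevance, reverse=True):
        if used + snip.tokens <= budget:
            chosen.append(snip)
            used += snip.tokens
    return chosen


candidates = [
    Snippet("architecture overview", 1200, 0.9),
    Snippet("full changelog", 8000, 0.3),
    Snippet("API conventions", 600, 0.8),
    Snippet("old meeting notes", 2500, 0.1),
]
print([s.name for s in select_context(candidates, budget=3000)])
# ['architecture overview', 'API conventions']
```

The hard part stays human: the relevance scores are the editorial judgment. The code only enforces that a large, low-value document can't crowd out a small, essential one.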

If you're running long Claude Code sessions, check your context usage regularly. The failure modes at 80% full are different from the failure modes at 40%, and most of the context rot happens before you notice it.
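Here is a sketch of what "check regularly" plus a compaction step could look like, assuming you can read a running token count for the session. The 40% and 80% cut-offs echo the numbers above but are illustrative, not measured thresholds. `summarize` stands in for whatever produces the summary (often a model call). None of this describes Claude Code's built-in behavior.

```python
from typing import Callable

Turn = dict  # simplified message shape: {"role": ..., "content": ...}


def usage_level(used_tokens: int, window_tokens: int) -> str:
    """Bucket context usage; the cut-offs are illustrative, not measured."""
    ratio = used_tokens / window_tokens
    if ratio < 0.4:
        return "ok"
    if ratio < 0.8:
        return "watch"    # trim, or plan a fresh session
    return "compact"      # summarize older turns now


def compact(turns: list[Turn], keep_recent: int,
            summarize: Callable[[list[Turn]], str]) -> list[Turn]:
    """Replace all but the last `keep_recent` turns with a single summary turn.

    `summarize` is supplied by the caller (often a model call in practice);
    it is a placeholder here, not a real API.
    """
    if keep_recent < 0:
        raise ValueError("keep_recent must be >= 0")
    split = max(len(turns) - keep_recent, 0)
    old, recent = turns[:split], turns[split:]
    if not old:
        return list(turns)
    summary = {"role": "user", "content": "Summary of earlier work: " + summarize(old)}
    return [summary] + recent
```

Keeping the most recent turns verbatim preserves the details the agent is actively working with; only older material gets compressed, which is where rot tends to accumulate unnoticed.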

The report predicts you'll be building your own tools in 2026. Trend 7 isn't about engineers adopting AI faster - it's about sales, legal, ops, and marketing teams standing up their own workflows without engineering support. The bottleneck those teams will hit isn't technical skill. It's the ability to describe, save, and hand off context in ways that make the tool work reliably across sessions.

Start saving and reusing context now. If you've pasted the same project brief into ChatGPT four times this week, you're not building a workflow - you're rebuilding one, from scratch, each time.
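For readers comfortable with a little Python, the save-once, reuse-many habit can be sketched as a tiny file-backed library. This is a hypothetical illustration (the `ContextLibrary` class and its JSON file are inventions for this post), not how Mesh or any other product stores context.

```python
import json
from pathlib import Path


class ContextLibrary:
    """Store named context snippets once and assemble them on demand.

    Hypothetical sketch: everything lives in a single JSON file.
    """

    def __init__(self, path: str):
        self.path = Path(path)
        self.items: dict[str, str] = (
            json.loads(self.path.read_text(encoding="utf-8")) if self.path.exists() else {}
        )

    def save(self, name: str, text: str) -> None:
        """Add or update a snippet and persist the whole library."""
        self.items[name] = text
        self.path.write_text(json.dumps(self.items, indent=2), encoding="utf-8")

    def assemble(self, *names: str) -> str:
        """Join the requested snippets, in order, into one paste-ready block."""
        missing = [n for n in names if n not in self.items]
        if missing:
            raise KeyError(f"not saved yet: {missing}")
        return "\n\n".join(f"## {n}\n{self.items[n]}" for n in names)
```

Usage: `lib = ContextLibrary("context.json")`, then `lib.save("project-brief", "...")` once, and `lib.assemble("project-brief", "tone")` in every session after that. The storage format doesn't matter much. What matters is that the brief gets written and revised in one place instead of retyped.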

If you're in that group - PMs, legal, ops, marketing people who want to build their own AI workflows - the bottleneck the report predicts is exactly the problem we're building Mesh to solve. Save your context once, reuse it across every session, and scan it for secrets before it leaves your machine.

I run a company that builds context management tooling - so I have a stake in how this story gets told, and you should weigh my analysis accordingly.

Reading the Anthropic report was less "this changes everything" and more "a lot of what we've been betting on just got formally named by the most important voice in the room." That's validating, but it also raises the bar. The eight trends don't just increase demand for context management - they diversify it. Multi-agent coordination needs context routing. Long-running agents need compaction and summarization. Non-technical users need context tooling that doesn't require engineering instincts to operate.

Mesh is the human-scale, in-the-loop version of this problem - designed for the AI-assisted work that still requires your judgment, not the 0–20% of tasks you can fully delegate. It's not the answer to every trend in the report. Multi-agent orchestration and autonomous security response need different tools. But every one of those tools composes better when the human context layer underneath - the project knowledge, the team preferences, the institutional context - is curated and ready rather than reconstructed from scratch each session.

If what I've described resonates - if you're tired of rebuilding the same context stack for every session and want a tool that treats context like the engineered artifact it is - Mesh is in limited beta, free during the beta period. Request early access →

The report closes with four priorities for the year ahead. These are Anthropic's recommendations, not mine - I'm paraphrasing, not endorsing or embellishing.

1. Master multi-agent coordination. Orchestrator patterns and hierarchical agent teams become the standard architecture for anything non-trivial. Teams that don't develop coordination skills will hit a ceiling on what they can automate.

2. Scale human-agent oversight with AI-automated review. The collaboration paradox isn't solved by removing humans - it's solved by making human participation more efficient. AI-automated review layers are how teams maintain oversight without scaling headcount proportionally.

3. Extend agentic coding beyond engineering teams. The productivity gains available to sales, legal, operations, and marketing through agentic tooling are comparable to those available to engineering - but most organizations are only beginning to create the infrastructure for non-technical teams to access them.

4. Embed security architecture from the start. Not as a compliance checkbox, but as a structural assumption. Every agentic capability added to a system is also a potential attack surface. The security architecture has to be coextensive with the capability architecture.

5. Treat context as a first-class engineered artifact. This one isn't in the report - it's the priority I'd add. Version it. Curate it. Reuse it across sessions and agents. Scan it for sensitive data before it leaves your machine. Every other priority on this list gets meaningfully easier if this one is solved - because every other priority depends on agents getting good context. The multi-agent coordination problem is partly a context routing problem. The human oversight scaling problem is partly a context handoff problem. The non-technical user expansion problem is partly a context curation problem.
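The last step in that priority, scanning context before it leaves your machine, can be sketched with a handful of regular expressions. Treat these patterns as illustrative and far from exhaustive. The AWS access key ID (`AKIA…`) and GitHub classic token (`ghp_…`) prefixes are real formats, but dedicated scanners such as gitleaks or detect-secrets cover far more cases. This is not Mesh's implementation.

```python
import re

# A few illustrative patterns; production scanners ship hundreds and tune them.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    "assignment": re.compile(r"(?i)\b(?:api[_-]?key|secret|password)\s*[:=]\s*\S+"),
}


def find_secrets(text: str) -> list[tuple[str, int]]:
    """Return (pattern name, 1-based line number) for every suspected secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((name, lineno))
    return hits


def redact(text: str) -> str:
    """Replace each match with a labeled placeholder before text is sent anywhere."""
    for name, pattern in SECRET_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{name}]", text)
    return text
```

Redacting rather than blocking keeps the rest of the context usable. The tradeoff is that a false positive silently removes something the agent needed, so reviewing `find_secrets` output before redacting is the safer default.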

The practical guide to building that foundation - what to include, how to structure it, how to think about context as a deliberate engineering input rather than prompt filler - is in our context engineering for developers guide. That's the right place to go after reading this.

For the architectural layer - how to design context flows across multi-agent systems - see our Agentic Context Engineering (ACE) Explained guide.

The author is the founder of HiveTrail, where he's building Mesh - a context management tool for LLMs and agentic AI. Mesh assembles just-in-time context from Notion, local files, and prompt libraries, with built-in privacy scanning and token management. It's currently in free beta. Request access →

A forecast document published by Anthropic that identifies eight trends they predict will shape agentic AI coding in 2026, organized into foundation trends (how development work changes), capability trends (what agents can do), and impact trends (business outcomes). It includes case studies from Rakuten, CRED, TELUS, Zapier, Legora, Fountain, and Augment Code.

The SDLC collapsing cycle times from weeks to hours; single agents evolving into coordinated multi-agent teams; long-running agents building complete systems over days; human oversight scaling through intelligent collaboration; agentic coding expanding to new surfaces and non-traditional users; productivity gains reshaping software development economics; non-technical use cases expanding across organizations; and dual-use security risk requiring security-first architecture from the start.

The report argues that in 2026, the core work of software engineering shifts from writing code directly to coordinating AI agents that write code. Engineers focus on architecture, system design, agent coordination, strategic direction, and quality evaluation rather than tactical implementation. The Augment Code case study, a 4–8 month project completed in two weeks, is the sharpest illustration of what this looks like in practice.

Anthropic's internal research found that engineers use AI in roughly 60% of their work but report being able to "fully delegate" only 0-20% of tasks. The gap is explained by effective AI collaboration requiring active human participation - setup, prompting, supervision, validation, and judgment, particularly for high-stakes or context-dependent work. The implication is that productivity gains come from making that participation more efficient, not from trying to eliminate it.

Anthropic recommends four priorities: master multi-agent coordination, scale human-agent oversight with AI-automated review, extend agentic coding beyond engineering teams, and embed security architecture from the start. A fifth priority worth adding is treating context, what gets fed to agents before and during sessions, as a first-class engineered artifact. Version it, curate it, reuse it, and scan it for sensitive data. Every other item on this list gets easier if this one is handled well.
