Ben •

A team from Stanford, SambaNova, and UC Berkeley recently published the ACE paper - and it's the most substantive academic contribution to context engineering I've seen in a while. The core idea: give your AI agent a structured "playbook" that it maintains and refines itself, task by task. The result? A +10.6% performance improvement on agent benchmarks, and +8.6% on domain-specific finance reasoning, using a smaller open-source model that matched top-ranked production agents.

ACE works through three components - a Generator, a Reflector, and a Curator - that mirror how humans actually learn: attempt, reflect, consolidate. The Curator's key trick is issuing small, surgical edits to the playbook rather than rewriting it wholesale. That single design choice is what prevents the context degradation most agents suffer silently.

Here's the honest caveat: you probably shouldn't run out and implement this today. But you should understand what it proves, because the principles transfer directly to how you work with AI agents right now.

If you've spent time building or using AI agents, you've encountered two failure modes that nobody talks about clearly enough.

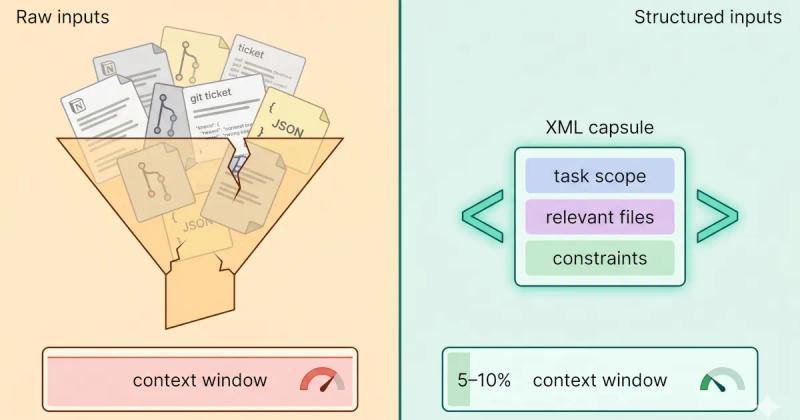

The first is context rot - the gradual degradation in output quality as an agent's context window fills up with irrelevant history, redundant instructions, and stale reasoning. This is the "why does this agent get worse over time" problem that most practitioners diagnose too late.

The second failure mode is structural: your agent runs the same class of task five hundred times and never gets better at it. The system prompt you wrote in week one is the same system prompt running in week twenty. Every lesson learned in production, every hard-won heuristic, every edge case your team discovered - none of it feeds back into the agent's context. It starts from scratch every time.

The ACE paper names two specific mechanisms that cause this, and both are worth adding to your vocabulary.

Brevity bias is what happens when you try to automate prompt optimization. Methods like DSPy and GEPA can evolve your prompts automatically - but they tend to favor short, compact instructions over rich, specific ones. Domain knowledge gets compressed out in favor of instructions that benchmark well on average. You end up with a leaner prompt that performs worse on the nuanced cases your domain actually cares about.

Context collapse is what happens when you try to solve the stale-context problem by asking the model to iteratively rewrite its accumulated context. Each rewrite seems clean. But over many rounds, specific details get gradually squeezed out. In VentureBeat's coverage, the researchers described it as "like overwriting a document so many times that key notes disappear" - a kind of digital amnesia baked into the optimization loop itself.

Both problems share the same root cause: treating context as something to be compressed or replaced, rather than something to be curated.

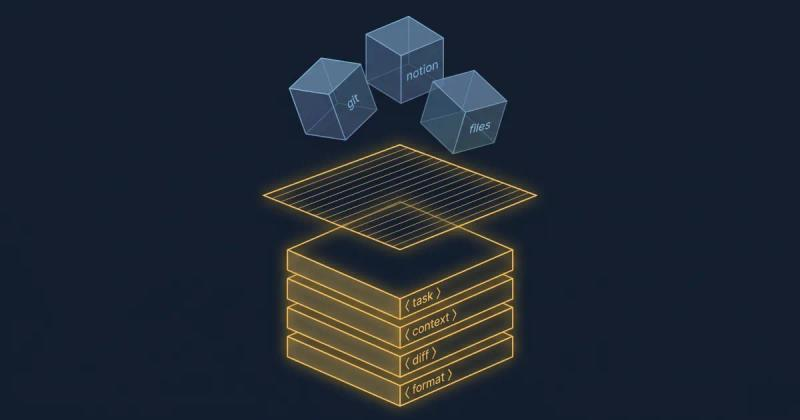

ACE treats an agent's context as a structured, evolving playbook - a living document of strategies, domain rules, and hard-won lessons that the agent itself maintains, one task at a time.

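Rather than one model trying to act, reflect, and update its own context simultaneously (which creates the compression pressure that leads to context collapse), ACE splits the work across three distinct roles: a Generator that attempts the task and produces a reasoning trace, a Reflector that analyzes the trace and extracts lessons about what worked and what didn't, and a Curator that applies those lessons to the playbook as small, targeted edits.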

The design mirrors how experienced engineers actually learn: you ship something, do a postmortem, and update your runbook. You don't throw the runbook away and rewrite it from memory after every incident.
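To make the division of labor concrete, here is a minimal sketch of one pass through the loop. It is an illustration, not the paper's implementation: the `Playbook` class, the `run_task` function, and the three stub roles are all hypothetical names, and in a real system each role would be a model call rather than a plain function.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Playbook:
    bullets: List[str] = field(default_factory=list)


# Hypothetical role signatures; in practice each would wrap a model call.
Generator = Callable[[str, List[str]], str]
Reflector = Callable[[str, str], List[str]]
Curator = Callable[[Playbook, List[str]], None]


def run_task(task: str, playbook: Playbook, generate: Generator,
             reflect: Reflector, curate: Curator) -> str:
    """One attempt -> reflect -> consolidate pass over a single task."""
    trace = generate(task, playbook.bullets)  # attempt, using the current playbook
    lessons = reflect(task, trace)            # extract what worked and what didn't
    curate(playbook, lessons)                 # small edits, never a wholesale rewrite
    return trace


# Stand-in roles so the sketch runs without a model behind it.
def stub_generate(task: str, bullets: List[str]) -> str:
    return f"attempted {task!r} using {len(bullets)} bullet(s)"


def stub_reflect(task: str, trace: str) -> List[str]:
    return [f"lesson from {task}"]


def stub_curate(playbook: Playbook, lessons: List[str]) -> None:
    for lesson in lessons:
        if lesson not in playbook.bullets:    # skip exact duplicates
            playbook.bullets.append(lesson)


playbook = Playbook()
for task in ["task-a", "task-b", "task-a"]:
    run_task(task, playbook, stub_generate, stub_reflect, stub_curate)
```

The point of the shape is that the playbook is the only state that survives between tasks, and only the Curator is allowed to touch it.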

The single most important engineering decision in ACE is how the Curator writes to the playbook. It doesn't rewrite it. It issues delta operations - ADD, UPDATE, or REMOVE - against individual bullets in the structured playbook.

This is the mechanism that prevents context collapse. Rewriting the whole playbook every round is exactly how you generate the information loss that the paper is trying to solve. Each full rewrite introduces compression pressure; the model can't perfectly reconstruct what was there before. Over time, specificity erodes.
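A rough sketch of what delta operations against individual bullets could look like. The `Delta` dataclass and `apply_deltas` function are hypothetical, and the playbook is simplified to a dict of bullet id to text; the paper's actual data structures may differ.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Delta:
    op: str                       # "ADD", "UPDATE", or "REMOVE"
    bullet_id: str
    content: Optional[str] = None  # required for ADD and UPDATE


def apply_deltas(playbook: Dict[str, str], deltas: List[Delta]) -> Dict[str, str]:
    """Return a new playbook with each delta applied to one bullet.

    Bullets that no delta names are carried over untouched, which is
    the property a full rewrite cannot promise.
    """
    updated = dict(playbook)  # shallow copy; the input is left as-is
    for delta in deltas:
        if delta.op in ("ADD", "UPDATE"):
            if delta.content is None:
                raise ValueError(f"{delta.op} requires content")
            exists = delta.bullet_id in updated
            if delta.op == "ADD" and exists:
                raise ValueError(f"{delta.bullet_id} already exists")
            if delta.op == "UPDATE" and not exists:
                raise KeyError(f"{delta.bullet_id} not found")
            updated[delta.bullet_id] = delta.content
        elif delta.op == "REMOVE":
            updated.pop(delta.bullet_id, None)
        else:
            raise ValueError(f"unknown op: {delta.op}")
    return updated


playbook = {
    "rule_041": "Prefer batch endpoints for bulk reads.",
    "rule_042": "Validate date ranges before querying the API.",
}
deltas = [
    Delta("UPDATE", "rule_042",
          "Validate that the end date is after the start date before querying the API."),
    Delta("ADD", "rule_043", "Return a structured error when validation fails."),
]
new_playbook = apply_deltas(playbook, deltas)
```

Here `rule_041` comes out of the update character-for-character identical to how it went in, because nothing ever asked a model to reproduce it.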

The incremental delta approach is the difference between git commit and "open the document and retype it from memory." The former gives you small, tracked, reversible changes with full history. The latter loses a little information at every step, with nothing to bound the cumulative loss - and there's no diff to recover from.

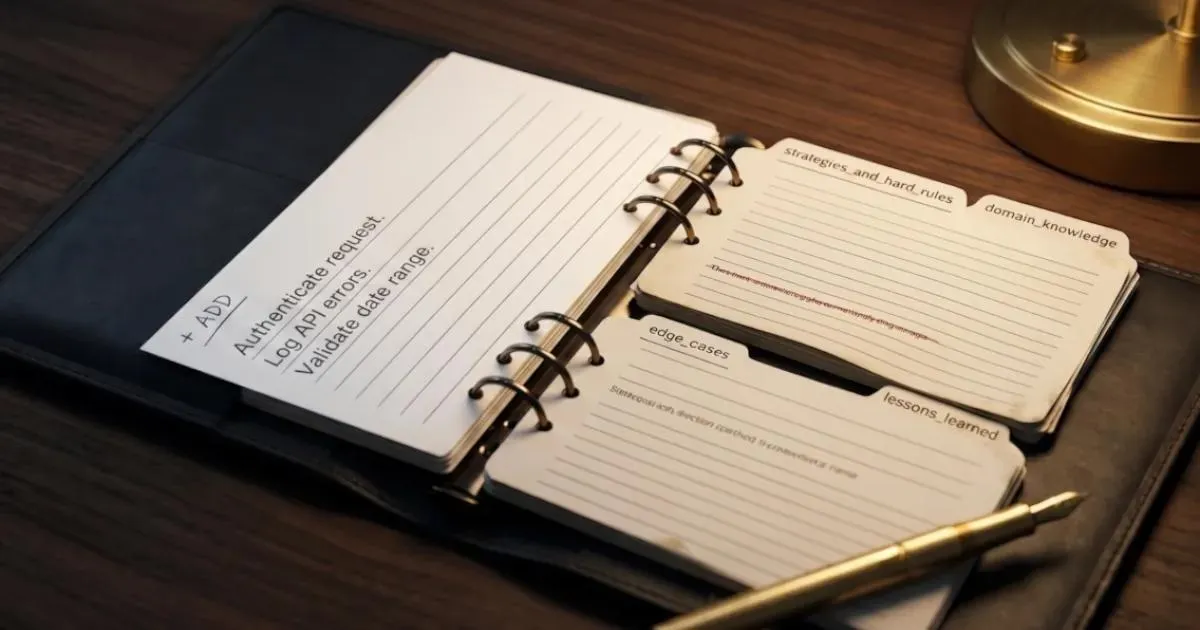

The playbook isn't a prose system prompt. It's a structured collection of bullets, each carrying an identifier, a section tag (strategies_and_hard_rules, domain_knowledge, edge_cases), the content of the lesson itself, and usage counts.

This structure is what makes localized retrieval and surgical updates possible. Rather than injecting the entire accumulated playbook into every prompt, the system can retrieve only the bullets relevant to the current task. Specific beats generic, every time.
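As a sketch of what "retrieve only the relevant bullets" might mean in practice, here is a deliberately naive selector based on word overlap. The scoring method is an assumption made for illustration (a real system would more likely use embeddings or section filters), and `select_bullets`, `STOPWORDS`, and the sample bullets are all hypothetical.

```python
import re
from typing import Dict, List, Set

# Tiny illustrative stopword list; not exhaustive.
STOPWORDS = {"a", "an", "the", "for", "in", "is", "that", "of", "to", "when"}


def tokenize(text: str) -> Set[str]:
    """Lowercase word set with stopwords removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def select_bullets(task: str, bullets: List[Dict], top_k: int = 2) -> List[Dict]:
    """Return up to top_k bullets sharing at least one word with the task."""
    task_tokens = tokenize(task)
    scored = []
    for bullet in bullets:
        overlap = len(task_tokens & tokenize(bullet["content"]))
        if overlap > 0:
            scored.append((overlap, bullet))
    # Sort on the score only; the sort is stable, so ties keep playbook order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [bullet for _, bullet in scored[:top_k]]


bullets = [
    {"id": "rule_042", "section": "strategies_and_hard_rules",
     "content": "Validate that the end date is after the start date before querying the API."},
    {"id": "dk_007", "section": "domain_knowledge",
     "content": "Date fields in invoices use ISO 8601."},
    {"id": "edge_003", "section": "edge_cases",
     "content": "Retry uploads once when the server returns a timeout."},
]

selected = select_bullets("Query the API for orders in a date range", bullets)
selected_ids = [bullet["id"] for bullet in selected]
```

None of this works on a prose system prompt: you can only rank and filter context that has already been split into addressable units.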

Here's a simplified representation of what a playbook bullet looks like, drawn from reference implementations:

```json
{
  "id": "rule_042",
  "section": "strategies_and_hard_rules",
  "content": "When the user specifies a date range, always validate that the end date is after the start date before querying the API. Return a structured error if not.",
  "usage": {
    "helpful": 14,
    "harmful": 1
  },
  "added_at": "2025-11-03T09:14:22Z",
  "last_updated": "2025-11-18T16:42:07Z"
}
```

The usage counts matter. They're how the Curator knows whether to reinforce, update, or remove a bullet when the playbook grows large. Bullets that consistently contribute to failure are candidates for removal or revision. Bullets that prove reliable accumulate weight. The playbook becomes, over time, a compressed representation of what actually works in this specific domain.
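One way to turn those counts into a decision is a simple triage rule, sketched below. The thresholds (`min_uses`, `max_harmful_ratio`) and the three labels are assumptions chosen for illustration; the source doesn't specify how the Curator weighs the counts.

```python
from typing import Dict


def triage_bullet(bullet: Dict, min_uses: int = 5,
                  max_harmful_ratio: float = 0.5) -> str:
    """Classify a bullet as 'keep', 'review', or 'undecided' from its usage counts.

    Thresholds are illustrative assumptions, not values from the ACE paper.
    """
    helpful = bullet["usage"]["helpful"]
    harmful = bullet["usage"]["harmful"]
    total = helpful + harmful
    if total < min_uses:
        return "undecided"  # too little evidence either way
    if harmful / total > max_harmful_ratio:
        return "review"     # candidate for revision or removal
    return "keep"


reliable = {"id": "rule_042", "usage": {"helpful": 14, "harmful": 1}}
suspect = {"id": "rule_017", "usage": {"helpful": 2, "harmful": 6}}
fresh = {"id": "rule_051", "usage": {"helpful": 1, "harmful": 1}}

labels = {b["id"]: triage_bullet(b) for b in (reliable, suspect, fresh)}
```

The "undecided" bucket matters as much as the other two: a bullet used twice hasn't earned removal or trust yet.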

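The ACE paper reports a +10.6% improvement on agent benchmarks and +8.6% on domain-specific finance reasoning, with a smaller open-source model matching top-ranked production agents. It also reports getting there without labeled supervision, learning from the agent's own execution feedback instead.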

That last point matters more than it sounds. Most learning-from-experience systems need labeled data to know what "better" looks like. ACE infers it from the agent's own execution trace.

If you know the optimization landscape, ACE will remind you of several things. Here's where it fits and where it doesn't:

ACE vs. Prompt Engineering

Prompt engineering produces a static artifact - you craft one good instruction set, and it doesn't change unless you change it manually. ACE is dynamic by design: the context evolves as the agent works. The insight the paper crystallizes is that a static prompt is a ceiling, not a foundation. Domain complexity accumulates faster than manual prompt iteration can track.

ACE vs. DSPy / GEPA

DSPy and GEPA are prompt optimization frameworks - they evolve the prompt instructions themselves, usually through few-shot bootstrapping or reflective, evolutionary search over candidate prompts. ACE evolves the context that lives behind the prompt - accumulated strategies, domain rules, hard-won heuristics. These aren't competing approaches; a well-resourced team could conceivably run both. DSPy optimizes how the agent asks; ACE optimizes what the agent knows.

ACE vs. RAG / Vector Memory

RAG answers the question "what do I know?" - it retrieves relevant documents or facts for each query from an external knowledge base. ACE answers a different question: "what have I learned from doing this task before?" RAG gives the agent reference material. ACE gives the agent accumulated experience. Different problems. Both are worth solving.

Reflector quality is the bottleneck. The whole system depends on the Reflector's ability to extract meaningful insights from a reasoning trace. In specialized domains where even frontier models have limited capability, the Reflector produces weak or noisy lessons - and those lessons corrupt the playbook. As AltexSoft notes in their breakdown, this dependence on Reflector quality is the system's most significant single point of failure.

Error accumulation compounds. Bad reflections lead to bad Curator edits. Bad Curator edits persist in the playbook. Without robust evaluation loops catching drift, the playbook can degrade gradually - encoding the wrong lessons confidently. Garbage in, confidently curated garbage out.

Benchmark generalization is unproven. The paper validates on AppWorld (agentic tasks) and finance reasoning. Coding agents, medical reasoning, creative work, long-horizon planning - all untested. As Emergent Mind's breakdown of the paper flags, the leap from "works on these two benchmarks" to "generalizes broadly" hasn't been demonstrated yet. That doesn't mean it won't generalize - it means you'd be betting on an assumption the paper doesn't support.

Inference cost goes up. Three roles, a growing playbook, and per-task reflection loops cost more tokens per task than a static system prompt. The paper shows that adaptation cost is lower than monolithic-rewrite baselines - but it's still more expensive than doing nothing. For high-frequency, low-stakes tasks, the economics may not pencil out.

The gap between "this is an interesting research result" and "this changes how I work" is where most explainers stop. Here's where they shouldn't.

You probably don't need to implement a full Generator/Reflector/Curator loop for your production agent. Two reference implementations are available on GitHub - ace-agents and ACE-open - but both are research-grade and not production-hardened. Running them in production today means owning the maintenance yourself.

The principles, however, are immediately transferable:

Your CLAUDE.md or AGENTS.md file is already a primitive, human-maintained ACE playbook. You add project-specific rules, patterns to follow, and edge cases to watch for. ACE just proposes making the maintenance automatic rather than manual.

The practical application: when a coding session produces a hard-won lesson - a pattern that keeps breaking, an API quirk you learned the expensive way - add it as a discrete bullet to your agent config. Apply the ACE principles manually: one lesson, one bullet, one commit. Don't rewrite the whole file to incorporate it.
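If you want to enforce "one lesson, one bullet" mechanically, a small helper is enough. The sketch below works on the file's text rather than the file itself so it is easy to test; `add_lesson` is a hypothetical name, and it assumes your config keeps lessons as top-level `- ` bullets.

```python
def add_lesson(config_text: str, lesson: str) -> str:
    """Append one lesson as a bullet, leaving every existing line untouched.

    Returns the text unchanged if the exact bullet is already present.
    """
    bullet = f"- {lesson.strip()}"
    if bullet in config_text.splitlines():
        return config_text
    # Add a newline first only if the file doesn't already end with one.
    separator = "" if (not config_text or config_text.endswith("\n")) else "\n"
    return f"{config_text}{separator}{bullet}\n"


before = "# Project rules\n- Use pnpm, not npm.\n"
after = add_lesson(before, "The billing API returns amounts in cents.")
```

In practice you would read CLAUDE.md, pass its contents through a helper like this, write the result back, and commit that single-line diff on its own. The append-only shape is what keeps the diff reviewable.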

Over time, your CLAUDE.md accumulates the same kind of domain expertise ACE builds automatically. The difference is the feedback loop speed. The principle is identical.

The research-grade architecture isn't the point for you. The point is the insight underneath it: the payoff in working with AI is in reusing and refining your context, not rebuilding it each session.

Every time you reconstruct your project context from scratch - repasting the same background, re-explaining the same constraints, reminding the model of the same patterns - you're leaving performance on the table. The practical, available-today version of "evolving playbook" is a saved, reusable context stack you curate over time as you learn what works.

At HiveTrail, we build Mesh - a desktop tool that gives you the human-scale version of an evolving context playbook. Instead of automated delta updates, you save and refine your own Stacks: collections of Notion pages, local files, and prompt snippets that you curate over time. If you've been manually rebuilding context for every AI session, that's the workflow Mesh replaces. See how it works →

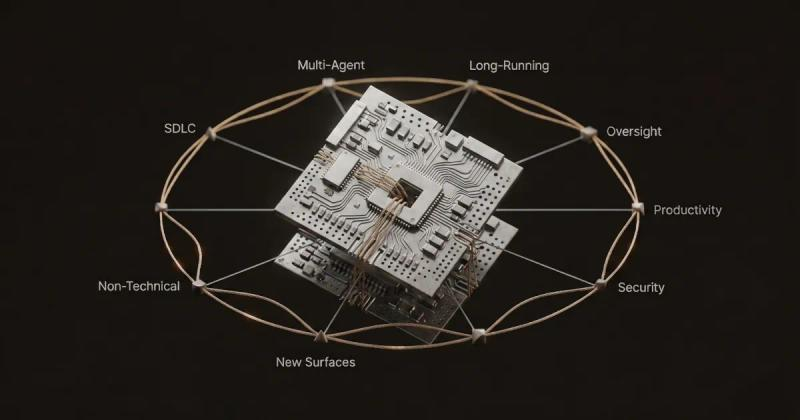

Anthropic's 2026 Agentic Coding Trends Report named context engineering the most important skill shift for developers this year. ACE is the first formal, measurable academic framework to validate why context isn't a static resource to be managed - it's a dynamic artifact to be engineered.

Other frameworks are coming. Dynamic Cheatsheet, DSPy, GEPA, and Anthropic's own writing on effective context engineering for agents all point in the same direction. The research consensus is converging on a shared principle: context is a first-class engineered artifact with its own versioning, curation, and lifecycle management.

Whether you implement ACE specifically is less important than internalizing what it proves. An agent that learns from its own execution trace will outperform one that doesn't. A context that accumulates structured lessons will outperform one that's rebuilt fresh each session. These aren't speculative claims anymore - they're benchmarked results from a peer-reviewed paper.

The question for 2026 isn't whether context engineering matters. It's whether your team treats it as seriously as the research now says it deserves.

The trend ACE validates - that context is an engineered artifact worth curating deliberately - is the entire reason we built Mesh. Join the beta →

Ben is the founder of HiveTrail, where he builds context management tools for LLM workflows and agentic AI. HiveTrail's flagship product, Mesh, is a desktop app in beta that helps developers and teams assemble reusable, curated context stacks from Notion, local files, and prompt libraries - the human-scale version of what ACE automates.

ACE is a framework proposed in a 2025 paper from Stanford, SambaNova, and UC Berkeley that treats an AI agent's context as an evolving playbook. Rather than relying on a static system prompt, ACE maintains a structured, growing document of strategies and lessons that the agent updates itself through a loop involving a Generator, Reflector, and Curator.

ACE targets two specific failure modes in AI agent design. Brevity bias describes how automated prompt optimization tends to favor short, generic instructions that lose domain-specific detail. Context collapse describes how iterative full rewrites of an agent's context gradually erode important information, the same way repeatedly retyping a document from memory introduces progressive information loss. ACE addresses both by accumulating context incrementally rather than compressing or rewriting it.

ACE runs a three-role loop on every task. The Generator attempts the task and produces a reasoning trace. The Reflector analyzes the trace and extracts lessons about what worked and what didn't. The Curator applies those lessons as small, targeted delta operations (ADD, UPDATE, REMOVE) to individual bullets in a structured playbook. Over time, the playbook accumulates genuine domain expertise without collapsing under the weight of its own rewrites.

Prompt engineering produces a static instruction set you craft once and maintain manually. RAG retrieves reference documents at query time to give the model relevant information. ACE evolves the context itself: specifically, the accumulated strategies and lessons an agent has learned from past task attempts. The three approaches address different problems and are complementary rather than competitive.

Most production teams don't need a full ACE implementation today. The available open-source implementations are research-grade and not production-hardened. The more immediately applicable takeaway is to apply the underlying principles manually: structure your agent's context as discrete, tagged bullets rather than prose, prefer incremental additions over wholesale rewrites, and version your context over time the same way you version source code.
